NVIDIA Updates on G-Sync HDR: 4Kp144 Monitors On Sale at End of May, Other Models Coming Later This Year

by Nate Oh on May 16, 2018 3:00 PM EST

While NVIDIA's upcoming ultra-premium G-Sync HDR monitors have been in the public eye for some time now, the schedule slips have become something of a sticking point, prompting the company in March to state that 27” 4K 144 Hz models would be shipping and on the market in April. Needless to say, those displays are yet to launch, though preorders for the Acer Predator X27 and ASUS ROG Swift PG27UQ were listed in Europe in mid-April.

Putting some amount of speculation to rest, NVIDIA has indicated the end of May for shipping and e-tail availability of the Acer Predator X27 and ASUS ROG Swift PG27UQ, though ultimately this decision is in the hands of Acer and ASUS. On that note, Acer stated that they had no updates on availability at this time. Both models were first showcased as reference prototypes during CES 2017, and as part of the larger G-Sync HDR lineup, the Predator X27 and PG27UQ will be the first monitors on the market.

| NVIDIA G-SYNC HDR 2018 Monitor Lineup | Acer Predator X27, ASUS ROG Swift PG27UQ | Acer Predator X35, ASUS ROG Swift PG35VQ | Acer Predator BFGD, ASUS ROG Swift PG65, HP OMEN X 65 BFGD |
|---|---|---|---|
| Panel | 27" IPS-type (AHVA) | 35" VA, 1800R curve | 65" VA? |
| Resolution | 3840 × 2160 | 3440 × 1440 (21:9) | 3840 × 2160 |
| Pixel Density | 163 PPI | 103 PPI | 68 PPI |
| Max Refresh Rate (OC Mode) | 144Hz (4:2:2 chroma subsampling) | 200Hz | 120Hz |
| Max Refresh Rate (Standard) | 120Hz (4:4:4 chroma subsampling) | Unknown | Unknown |
| Max Refresh Rate (Over HDMI) | 60Hz | 60Hz | 60Hz |
| Variable Refresh | NVIDIA G-Sync HDR Scaler/Module | NVIDIA G-Sync HDR Scaler/Module | NVIDIA G-Sync HDR Scaler/Module |
| Response Time | 4 ms | 4 ms? | Unknown |
| Brightness | 1000 cd/m² | 1000 cd/m² | 1000 cd/m² |
| Contrast | Unknown | Unknown | Unknown |
| Backlighting | FALD (384 zones) | FALD (512 zones) | FALD |
| Quantum Dot | Yes | Yes | Yes |
| HDR Standard | HDR10 Support | HDR10 Support | HDR10 Support |
| Color Gamut | DCI-P3 | DCI-P3 | DCI-P3 |
| Inputs | 2 × DisplayPort 1.4, 1 × HDMI 2.0 | DisplayPort 1.4, HDMI 2.0 | DisplayPort 1.4, HDMI 2.0, Ethernet |
| Price | TBA | TBA | TBA |
| Availability | May/June 2018 | Q4 2018? | Summer 2018? / Fall 2018 |
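The pixel density figures follow from resolution and diagonal size. A minimal sketch of that arithmetic, assuming a flat diagonal measurement (the helper name `pixels_per_inch` is hypothetical, introduced only for illustration):

```python
import math

def pixels_per_inch(width_px, height_px, diagonal_in):
    """Pixel density from resolution and a flat diagonal measurement (assumption)."""
    diagonal_px = math.hypot(width_px, height_px)
    return diagonal_px / diagonal_in

# Resolution and diagonal for each size class in the lineup.
panels = {
    '27" 3840 x 2160': (3840, 2160, 27),
    '35" 3440 x 1440': (3440, 1440, 35),
    '65" 3840 x 2160': (3840, 2160, 65),
}

for name, (w, h, d) in panels.items():
    print(f"{name}: {pixels_per_inch(w, h, d):.1f} PPI")
```

Under this assumption the 27" and 65" panels come out at roughly 163 and 68 PPI. The same formula gives about 107 PPI for the 35" ultrawide, slightly above the 103 PPI listed for it.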

NVIDIA did not mention the comparable 27" and 35" AOC models (AGON AG273UG, AGON AG353UCG), but they are presumably on a similar release timeline. There was also no mention of Acer's Predator XB272-HDR.

While the 27” 4K 144 Hz models were originally slated for a late 2017 launch, Acer and ASUS made a surprising announcement last August that they were delaying their G-Sync HDR flagships to Q1 2018. Even with NVIDIA’s current end-of-May assessment, ASUS remarked offhand that a June launch was more likely, as firmware and other development work was still ongoing. Nor were those the only G-Sync HDR displays announced: at Computex 2017, Acer and ASUS unveiled 35” curved ultrawide 200 Hz G-Sync HDR displays, originally slated for a Q4 2017 release. Meanwhile, at CES 2018 NVIDIA had already pushed forward with revealing 65-inch smart TV-esque G-Sync HDR monitors with integrated SHIELDs, dubbing them “Big Format Gaming Displays” (BFGDs). No update was provided on the schedule for the 35” models and BFGDs, only that they were due to come later this year.

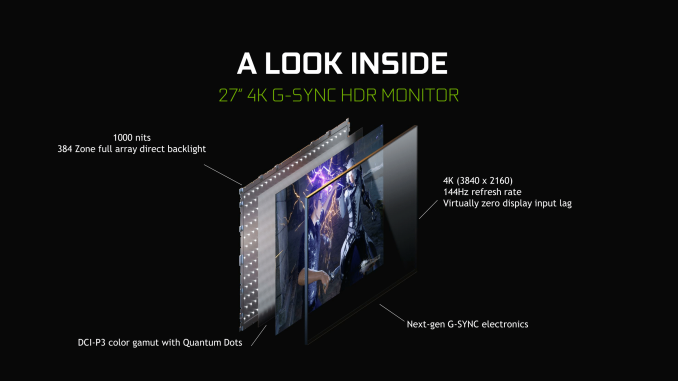

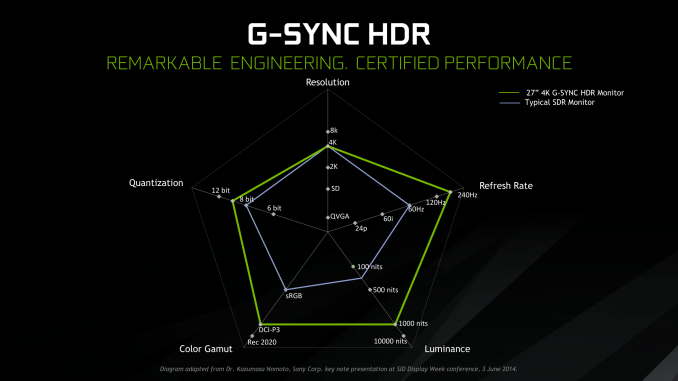

Despite the launch on the horizon, full specifications and pricing have yet to be published. The features and specifications remain as previously known: built on AU Optronics’ M270QAN02.2 AHVA panel, the 27” G-Sync HDR monitors offer 3840×2160 resolution at up to 144 Hz (with 4:2:2 chroma subsampling), a quantum dot film, DCI-P3 color gamut and HDR10 support, a peak brightness of 1000 nits, and full array local dimming (FALD) with a 384-zone direct LED backlighting system. On top of that, Acer and ASUS include their own monitor features and OSDs; for the actively cooled ROG Swift PG27UQ, this includes Tobii eye-tracking and ultra-low motion blur (ULMB).

As for the refresh rate asterisks: HDR at 10-bit 4Kp144 with full 4:4:4 chroma needs more bandwidth than DisplayPort 1.4 can provide, so setting the refresh rate above 98 Hz will have the monitor drop to 4:2:2 chroma subsampling and use 8-bit color with frame rate control (FRC) dithering. This bandwidth bottleneck is largely out of ASUS/Acer and NVIDIA’s hands, as DisplayPort 1.5 and even HDMI 2.1 are far too new to be incorporated in these models.
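The bandwidth limit can be checked with back-of-the-envelope arithmetic. The sketch below is an approximation, not a timing calculator: it counts active pixels only and ignores blanking intervals, which add overhead and push the real ceiling somewhat lower. It takes DisplayPort 1.4's usable payload as 4 lanes × 8.1 Gbit/s with 8b/10b encoding, or 25.92 Gbit/s. The function and constant names are hypothetical.

```python
# Usable DisplayPort 1.4 (HBR3) payload: 4 lanes x 8.1 Gbit/s, less 8b/10b encoding overhead.
DP14_PAYLOAD_BPS = 4 * 8.1e9 * 8 / 10  # about 25.92 Gbit/s

def required_bps(width, height, refresh_hz, bits_per_pixel):
    """Active-pixel data rate only; blanking overhead is ignored (assumption)."""
    return width * height * refresh_hz * bits_per_pixel

def fits_dp14(width, height, refresh_hz, bits_per_pixel):
    return required_bps(width, height, refresh_hz, bits_per_pixel) <= DP14_PAYLOAD_BPS

# Average bits per pixel: 4:4:4 carries three full samples per pixel,
# 4:2:2 carries full luma plus half-rate chroma (two samples per pixel on average).
modes = {
    "144 Hz, 10-bit 4:4:4": (144, 30),
    "120 Hz, 8-bit 4:4:4": (120, 24),
    "98 Hz, 10-bit 4:4:4": (98, 30),
    "144 Hz, 10-bit 4:2:2": (144, 20),
}

for name, (hz, bpp) in modes.items():
    gbps = required_bps(3840, 2160, hz, bpp) / 1e9
    verdict = "fits" if fits_dp14(3840, 2160, hz, bpp) else "exceeds DP 1.4"
    print(f"{name}: {gbps:.2f} Gbit/s ({verdict})")
```

Even before blanking is counted, 10-bit 4:4:4 at 144 Hz needs roughly 35.8 Gbit/s, well past the link's payload. The 98 Hz 10-bit mode and the 144 Hz 4:2:2 mode both stay under it.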

That being said, a straight conversion of European preorder prices sans VAT puts the price range at $2500 to $3000, though this does not directly indicate US pricing. As G-Sync HDR flagship gaming monitors, they have essentially every feature of ultra-high-end consumer/gaming monitors. Additionally, the AU Optronics AHVA panel is pricier because it can only be purchased as a combined LCD and backlight unit, and together with the cost of the upgraded G-Sync HDR scaler and module, this adds a cost premium that is passed on to consumers.

Ultimately, the HDR situation itself is also a little murky. Outside of HDR10 support, NVIDIA’s G-Sync HDR certification mandates and requirements are kept between NVIDIA and the manufacturers, and so the specific dynamic range of G-Sync HDR isn’t clear. Presumably there are quantified public guidelines with minimum peak brightness, localized dimming capability, minimum percentage coverage of the DCI-P3 gamut, and the like, much like VESA’s open DisplayHDR 400, 600, and 1000 standards, which are directly linked to numerical performance metrics and can also be independently verified by consumers themselves with VESA’s open test tools and benchmarks.

At the time, NVIDIA did not express any standardized HDR specifications outside of their G-Sync HDR certification process, reiterating that G-Sync HDR represented a premium gaming experience and expecting OEMs and monitor manufacturers to list any HDR specs relevant to their models. In any case, these 27” monitors adhere to, and thus carry, the UHD Alliance’s Ultra HD Premium logo, which is 4K specific. For the publicly announced G-Sync HDR displays, it appears that only three types of AU Optronics panels are involved, and so capabilities and feature sets will naturally align closely. NVIDIA also stated that G-Sync HDR had no particular focus on Windows HDR support.

As HDR monitors are a burgeoning market segment, NVIDIA brought up the need for consumer education on the wide spectrum of HDR performance. For consumers looking for a high-end variable refresh rate monitor with HDR, an HDR brand not strictly attached to performance parameters isn’t quite as elucidating as "4K", "IPS", "144 Hz refresh rate", or "4ms response time". As new panel technologies are developed and mature, it will be interesting to see how these changes would be conveyed through the “G-Sync HDR” brand.

Source: NVIDIA

46 Comments

Manch - Wednesday, May 23, 2018 - link

Variable refresh rate aka Adaptive Sync aka Freesync is about a decade old now. AMD may have expanded it but they are not the sole inventor/proprietor of variable refresh rate tech.

In regards to the scaler chips in said monitors. No, special HW was not required. Did the HW need to meet a certain spec? of course. There is no additional component like G-Sync. It only need to be fast enough and capable of accepting a variable rr command from the GPU which some unsupported monitors at the time were already capable of doing. GSYNC and *Sync may accomplish the same thing relatively, but they take different approaches which is borne out in their respective capabilities.

Yojimbo - Tuesday, May 29, 2018 - link

Please link me with a source that Adaptive Sync is a decade old rather then being included as an extension to DisplayPort 1.2a, which the rest of the world seems to be under the impression is true: https://www.anandtech.com/show/8008/vesa-adds-adap...

Yes, special hardware is required and I linked you with something say that it's so. A special scalar chip is required to support the standard.

You are just ignoring my evidence and asserting your own "facts" without providing your own evidence for these "facts". Stop wasting my time.

Diji1 - Thursday, May 17, 2018 - link

G-sync is better than Freesync technically and has much tighter standards. There are no low quality G-sync monitors.

Yojimbo - Sunday, May 20, 2018 - link

Yes I agree, I am just questioning whether the differences in quality and features are worth the price difference.

Krteq - Wednesday, May 16, 2018 - link

Cmon nV, what are G-sync ranges? Even AMD have FreeSync ranges listed on their site for supported monitors!

Sttm - Wednesday, May 16, 2018 - link

Doesn't GSync work from 0hz to Max? At least that was what I always thought.

DC_Khalid - Wednesday, May 16, 2018 - link

The beauty of Gsync is that all monitors is certified to work from 30hz-Max. Ranges are for untested products such as Freesync where each manufacturers can put as much or as little range confusing consumers.

Manch - Friday, May 18, 2018 - link

That no min verifiable specs are released to the public is disconcerting.

rocky12345 - Wednesday, May 16, 2018 - link

In their top picture it looks like they washed out the picture for SDR side for sure. On the HDR side the colors look way over done as cartoon like on the character.

With that said I use my Samsung 60" HDTV for movie watching and gaming for now until I decide to go back down stairs & use my 125" projector screen again. I found on the Samsung TV that if I set Dynamic Contrast to low (level1) turn HDMI Black to Normal (low makes the black squash the greys.) Set Color input to "Native" mode and set it on the PC to RGB 4,4,4 color Full Spectrum (PC Full color). I get really deep blacks and the areas in the shadows are also there (Just like HDR) does it. The colors are full and bright but not cartoon like or over done & like I said the blacks are pure black even when watching movies and you get those top and bottom bars they are totally black and no light bleed or a bit grey and the whites on the screen are a pure white not cream color or dark.

I am not sure why but when I do Native color on the TV and set it to RGB 4,4,4 Full PC Color spectrum in the video card the AMD software switches my color bits to 12 bit instead of 10 bit. I have not ventured to look it up as to why it did that I just left it alone since the software put it to that setting. Anyways my point is my SDR TV is giving great color non washed out look and pure blacks & rich colors so I see no need to upgrade until I go 4K or higher down the road in the near future now that 4K is within reach of the pocket book. Then again my Sapphire TRI-X 390x 8GB even though it is OC'ed to the max won't handle 4K gaming very well I am sure unless turning the settings down but then whats the point if it looks like crap. I see a Geforce 1180 or AMD Navi in my near future for sure.

Hxx - Wednesday, May 16, 2018 - link

Time to save up for that Acer x35. It should be hopefully a significant upgrade over my current X34 although i'm not sure how i feel about "upgrading' from an IPS to a VA panel hopefully with the curver being more pronounced, i won't notice the smaller viewing angles.