Arm Announces Neoverse N1 & E1 Platforms & CPUs: Enabling A Huge Jump In Infrastructure Performance

by Andrei Frumusanu on February 20, 2019 9:00 AM EST

Anybody following the industry over the last decade will have heard of Arm. We best know the company as the enabler and provider of the architecture and CPU designs that power essentially all of today’s mobile devices. Over the last 5-7 years in particular, we’ve seen meteoric advances in the silicon performance of the mobile SoCs found in our smartphones and tablets.

However, Arm's ambition goes well beyond just mobile and embedded devices. The market for compute in general is a lot larger than that, and looking at things in a business sense, high-end devices like servers and related infrastructure carry far greater profit margins. So for a successful CPU designer like Arm that is still on the rise, it's a very lucrative market to aim for, as current leader Intel can attest.

To that end, while Arm has been wildly successful in mobile and embedded, anything requiring more performance has to date been out of reach or has come with significant drawbacks. Over the last decade we’ve heard numerous prophecies of how products based on the architecture will take the server and infrastructure market by storm “any moment now”. In the last couple of years in particular we’ve seen various vendors attempt to bring this goal to fruition. Unfortunately, the results of the first generation of products were less than successful, and even though some did better than others, the Arm server ecosystem has seen quite a bit of hardship in its first years.

A New Focus On Performance

While Arm has been successful in mobile for quite some time, the overall performance of their designs has often left something to be desired. As a result, the company has been undertaking a new focus on performance that spans everything from mobile to servers. Working towards this goal, 2018 was an important year for Arm as the company introduced its brand-new Cortex A76 microarchitecture design. The A76 represents a clean-sheet endeavor that learns from the experience gained in previous generations, and the company has put high hopes in the brand-new Austin family of microarchitectures. In fact, Arm is so confident in its upcoming designs that the company has publicly shared its client compute CPU roadmap through 2020 and proclaimed it will take Intel head on in the PC laptop space.

While we’ll have to wait a bit longer for products such as the Snapdragon 8CX to come to market, we’ve already had our hands on the first mobile devices with the Cortex A76, and very much independently verified all of Arm’s performance and efficiency claims.

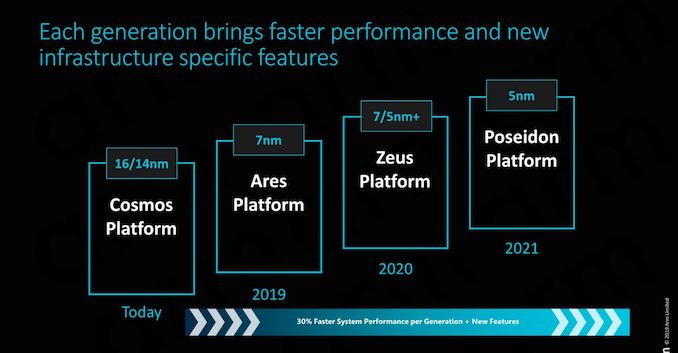

And then of course, there's Neoverse, the star of today's Arm announcements. With Neoverse, Arm is looking to do for servers and infrastructure what it's already doing for its mobile business: greatly ramping up performance and improving competitiveness with a new generation of processor designs. We'll get into Neoverse in much deeper detail in a moment, but in context, it's one piece of a much larger effort for Arm.

All of these new microarchitectures are important to Arm because they represent an inflection point in the market: Performance is now nearing that of the high-end players such as Intel and AMD, and Arm is confident in its ability to sustain significant annual improvements of 25-30% - vastly exceeding the rate at which the incumbent vendors are able to iterate.
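To put the 25-30% annual figure in perspective, the short sketch below compounds it over a few generations. It is a back-of-the-envelope illustration only: the function name `compounded_gain` is made up for this example, and it assumes the quoted rate holds steadily every year, which is Arm's stated goal rather than a measured result.

```python
def compounded_gain(annual_rate: float, years: int) -> float:
    """Cumulative performance multiplier after `years` of steady annual gains.

    Assumes the same relative improvement every year (an idealization).
    """
    return (1.0 + annual_rate) ** years


if __name__ == "__main__":
    # The two ends of the 25-30% range quoted in the article.
    for rate in (0.25, 0.30):
        for years in (1, 2, 3):
            gain = compounded_gain(rate, years)
            print(f"{rate:.0%} per year over {years} year(s): {gain:.2f}x")
```

Under that assumption, three consecutive generations at 25% work out to roughly 1.95x the starting performance, and at 30% to roughly 2.2x.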

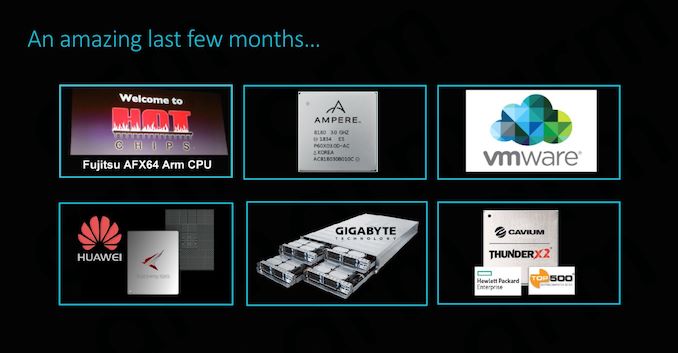

The Server Inflection Point: An Eventful Last Few Months Indeed

The last couple of months have been quite exciting for the Arm server ecosystem. At last year’s Hot Chips we covered Fujitsu’s session on their brand-new A64FX HPC (high-performance compute) processor, representing not only the company's shift from SPARC to ARMv8, but also delivering the first chip to implement the new SVE (Scalable Vector Extensions) addition to the Arm architecture.

Cavium’s ThunderX2 saw some very impressive performance leaps, making its new processor among the first to be able to compete with Intel and AMD – with partners such as GIGABYTE offering whole server system solutions based on the new SoC.

Most recently, we saw Huawei unveil its new Kunpeng 920 server chip, promising to be the industry’s highest performing Arm server CPU.

The big commonality between the three products mentioned above is that each represents an individual vendor’s effort at implementing a custom microarchitecture based on an ARMv8 architectural license. This in fact raises the question: what are Arm’s own plans for the server and infrastructure market? Well, for those following closely, today’s coverage of the new Neoverse line-up shouldn’t come as a complete surprise, as the company first announced the branding and roadmap back in October.

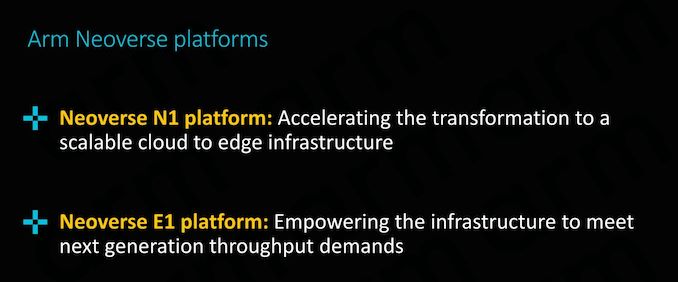

Introducing the Neoverse N1 & E1 platforms: Enabling the Ecosystem

Today’s announcement is all about enabling the ecosystem; we’ll be covering in more detail two new “platforms” that will be at the core of Arm’s infrastructure strategy for the next few years, the Neoverse N1 and E1 platforms.

In particular, today’s announcement of the Neoverse N1 platform sheds light on what Arm had teased back in the initial October release, detailing what exactly “Ares” is and how the server/infrastructure counterpart to the Cortex A76 µarchitecture will bring major performance boosts to the Arm infrastructure ecosystem.

101 Comments

lightningz71 - Thursday, February 21, 2019 - link

This is one I can answer. My computer engineering professors fielded this exact question. Essentially, when profiling code that was being used in modern software, the major CPU vendors realized that a small portion of the x86 instructions were rarely used. So rarely, in fact, that it was an absolute waste of silicon to try to implement them in hardware. Add in that a lot of those instructions are not executed in isolation, but have some sort of dependency on fetching a piece of data, or waiting on the resolution of multiple intermediary steps during their execution, so going with full hardware implementations would not have resulted in a major boost in their performance. Instead, they elected to implement them in micro-code and execute them on the highly tuned circuits that they used to implement the more common instructions in the back end. So, while you lose some performance having to load and run the microcode sequences, it's actually executing those simplified sub-instructions very rapidly, and can do other things while waiting for various tasks to complete.

So, while there is a case to be made that a full, tuned and optimized hardware implementation of the more complex instructions can be done, and perform more quickly than the micro-code sequences, the actual speedup for the overall performance of the systems in question would be minimal because of how rarely those actual instructions are used in practice. You're talking about shaving off a few tens of cycles per instance on a processor that is running at around 4GHz these days. The real performance impact would be minimal, but the development cost and circuit budget consumed would be significant for not much gain.

FunBunny2 - Thursday, February 21, 2019 - link

"Essentially, when profiling code that was being used in modern software, the major CPU vendors realized that a small portion of the x86 instructions were rarely used."

not to do too much what-about-ism, but IBM was doing that with COBOL applications, in real time monitoring (allowance to do so was embedded in the lease agreement), at least as early as the 360.

naturally, I didn't remember that lower brain stem memory until reading your comment. my shame. (:

but... I do wonder about all those 'extensions' to the original 8086 instruction set. weren't they created to support 'necessary' functions? here: https://en.wikichip.org/wiki/x86/extensions

or are they, too, not used enough?

Wilco1 - Thursday, February 21, 2019 - link

Well when did you last use MMX? Or x87 floating point? There are large numbers of instructions which are hardly ever used.

FunBunny2 - Thursday, February 21, 2019 - link

HLL coders don't, at least directly. but I'm old enough to remember when adding a '87 (before FP was moved to the '86) put a rocket under 1-2-3.

Wilco1 - Thursday, February 21, 2019 - link

The point is both have been superseded by all the SSE variants, which are themselves now being replaced by AVX. Intel has posted patches to change HLL MMX intrinsics to use SSE instructions instead of MMX.

zmatt - Wednesday, February 27, 2019 - link

Usually you don't invoke those yourself. The compiler does.

nevcairiel - Wednesday, February 20, 2019 - link

The desktop and notebook market will face adoption problems simply from having your software run (fast). Of course they can use emulation layers, but that once again costs you efficiency/performance.

Mobile was an entirely new space, so no pre-existing software to really worry about, and servers are a far more managed space so that software is often more readily available in the variants you need. Desktop usages on the other hand are full of legacy software that has to work.

ZolaIII - Wednesday, February 20, 2019 - link

At its core (integer base instruction set) it is more efficient, but that doesn't mean much nowadays. The main factor is the design of the actual core as such.

ballsystemlord - Wednesday, February 20, 2019 - link

But, and here's the kicker, the binary nature of proprietary SW means that switching arches will require many fixes to programs and many more will never be ported. Emulation, which is slow for CPU arches, is the only way that such SW could continue to exist.

Gee, Stallman was right!

wumpus - Thursday, February 21, 2019 - link

Put it this way: the effective means to convert a "CISC" architecture to internally* "RISCY" operation could be included on a CPU core effectively in the mid 1990s. This pipeline step is sufficiently small to make no difference nowadays (although Sandy Bridge and later use caches to store pre-decoded micro-ops). The RISC/CISC wars died a long time ago, and now we only have Intel vs. ARM vs. AMD (and don't forget IBM).

* (Internally RISC). Oddly enough, the more "internally RISCy" a 1990s-era chip was the less successful it was. The AMD K5 was internally a 29k derivative (a real RISC) and failed miserably. Supposedly IBM had a PowerPC/X86 hybrid that never made it out of the lab. Transmeta did its translation in software, but fell into the "single device power trap". NexGen was probably more successful than all of these (especially in convincing AMD to buy them and producing the mighty Athlon), and had the ability to execute native code (supposedly. I don't think anyone ever did. Presumably involved 80 bit instructions). Pentium Pro, K6, Pentiums 2&3, Athlon all executed "native microcodes" but don't appear to slavishly copy RISC dogma.