The ADATA Ultimate SU750 1TB SSD Review: Realtek Does Storage, Part 1

by Billy Tallis on December 6, 2019 8:00 AM EST

Whole-Drive Fill

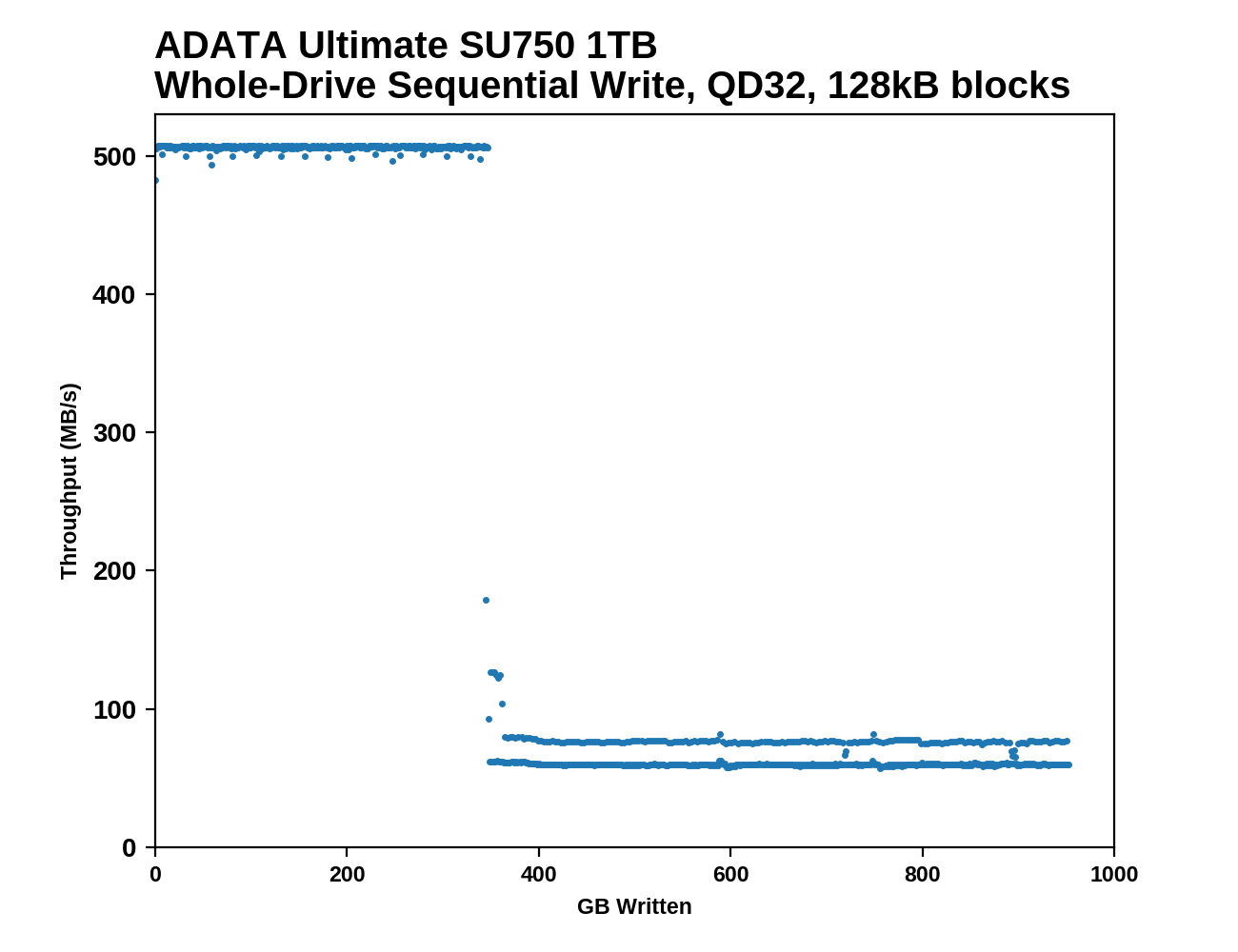

This test starts with a freshly-erased drive and fills it with 128kB sequential writes at queue depth 32, recording the write speed for each 1GB segment. This test is not representative of any ordinary client/consumer usage pattern, but it does allow us to observe transitions in the drive's behavior as it fills up. This can allow us to estimate the size of any SLC write cache, and get a sense for how much performance remains on the rare occasions where real-world usage keeps writing data after filling the cache.
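As a rough illustration of how a cache size could be read off such a per-gigabyte log, the sketch below looks for the first segment at which throughput falls well below the initial speed and stays there. The function name, the `drop_ratio` and `window` parameters, and the example trace are all assumptions made for illustration; the trace is synthetic and is not measured data from this review.

```python
from statistics import median


def estimate_slc_cache_gb(speeds_mb_s, drop_ratio=0.5, window=8):
    """Estimate how many 1GB segments were written before the sustained drop.

    speeds_mb_s: write speed recorded for each consecutive 1GB segment.
    drop_ratio, window: hypothetical tuning knobs, not values from the review.
    Returns None if no sustained drop is found.
    """
    if len(speeds_mb_s) < window:
        return None
    # Treat the first few segments as the in-cache baseline speed.
    baseline = median(speeds_mb_s[:window])
    threshold = baseline * drop_ratio
    for i in range(len(speeds_mb_s) - window + 1):
        # Require `window` consecutive slow segments so that a single
        # slow segment is not mistaken for the cache running out.
        if all(s < threshold for s in speeds_mb_s[i:i + window]):
            return i
    return None


# Synthetic trace (made-up speeds): fast for 345 segments, then slow.
trace = [400.0] * 345 + [60.0] * 655
cache_gb = estimate_slc_cache_gb(trace)
```

A simple threshold works here only because the transition is abrupt; a drive whose cache drains gradually would need a more careful change-point method.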


The SLC write cache on the 1TB ADATA SU750 is quite large, lasting for about 345GB of sequential writes before performance drops down to QLC-like speeds. In both phases, the performance is very consistent, and the transition when the SLC cache fills up is abrupt.


Charts: Average Throughput for last 16 GB; Overall Average Throughput

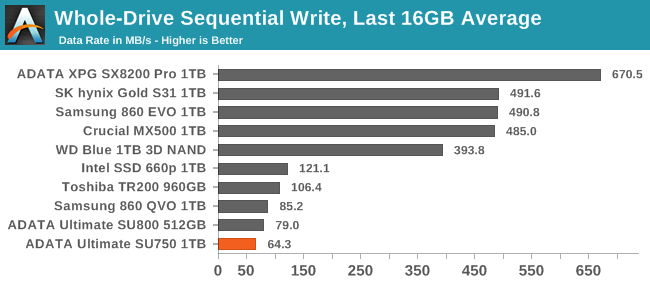

The post-cache write speed of the SU750 is actually even slower than the QLC-based Samsung 860 QVO, but the much larger cache on the SU750 means its overall average write speed across the entire drive filling process is slightly faster.
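The reason a larger cache can outweigh a slower post-cache speed is that the overall average is total data divided by total time, so each phase is weighted by how long it takes. The sketch below works through that arithmetic for two hypothetical drives; the function name and every speed and cache size in it are assumed values chosen for illustration, not measurements of the SU750 or the 860 QVO.

```python
def overall_average_mb_s(capacity_gb, cache_gb, cached_mb_s, post_cache_mb_s):
    """Average write speed over a full-drive fill with two speed phases.

    Assumes a simple model: `cache_gb` is written at `cached_mb_s`,
    and everything after that at `post_cache_mb_s`.
    """
    total_mb = capacity_gb * 1000
    cached_mb = min(cache_gb, capacity_gb) * 1000
    seconds = cached_mb / cached_mb_s + (total_mb - cached_mb) / post_cache_mb_s
    return total_mb / seconds


# Hypothetical drive A: large cache, slower after the cache fills.
drive_a = overall_average_mb_s(1000, cache_gb=345, cached_mb_s=400, post_cache_mb_s=60)
# Hypothetical drive B: small cache, faster after the cache fills.
drive_b = overall_average_mb_s(1000, cache_gb=40, cached_mb_s=400, post_cache_mb_s=80)

print(f"A: {drive_a:.1f} MB/s, B: {drive_b:.1f} MB/s")
```

With these made-up numbers, drive A finishes the fill slightly sooner than drive B despite its lower post-cache speed, which is the same qualitative pattern described above.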

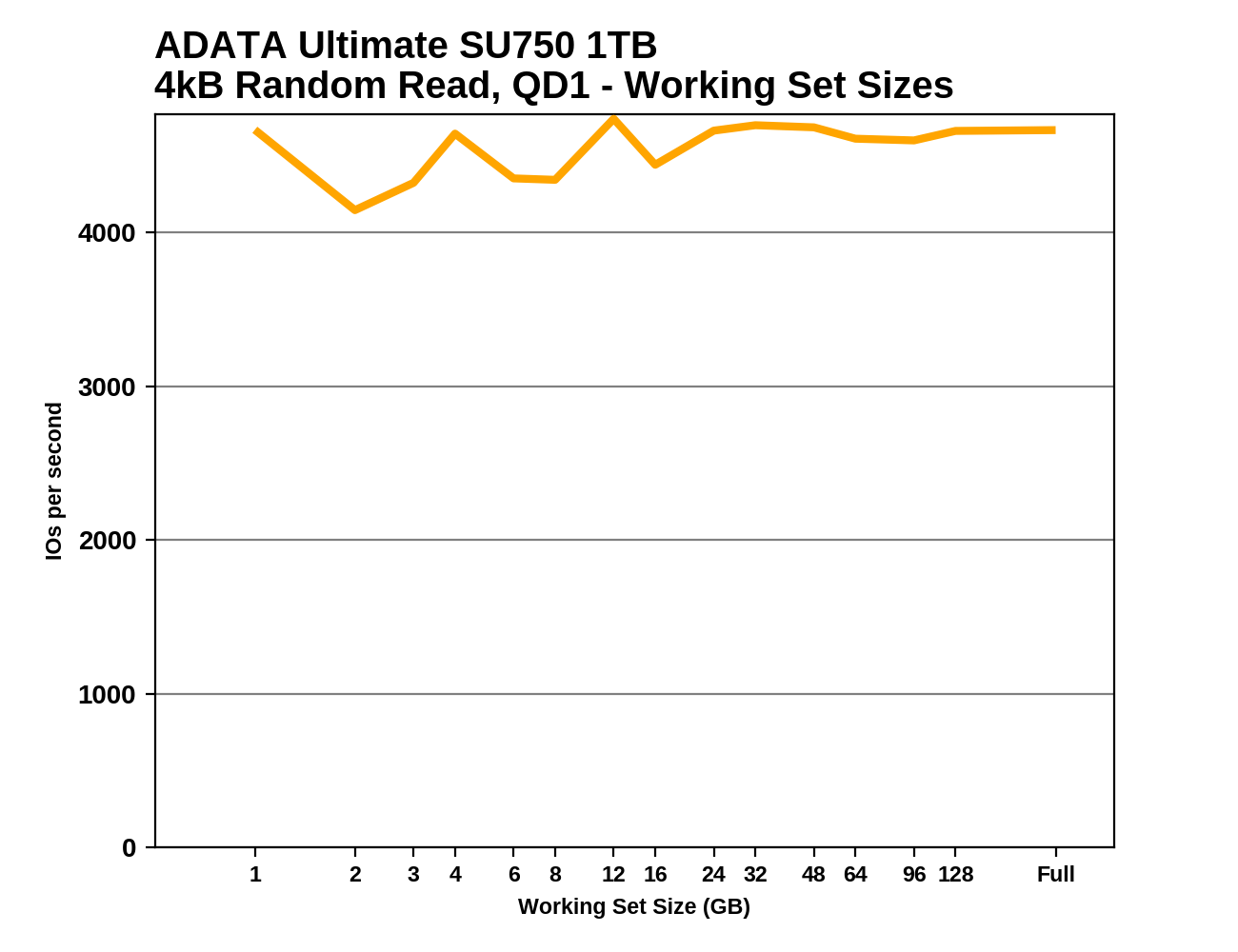

Working Set Size

When DRAMless SSDs are under consideration, it can be instructive to look at how performance is affected by working set size: how large a portion of the drive is being touched by the test. Drives with full-sized DRAM caches are typically able to maintain about the same random read performance whether reading from a narrow slice of the drive or reading from the whole thing. DRAMless SSDs often show a clear dropoff when the working set size grows too large for the mapping information to be kept in the controller's small on-chip buffers.


The QD1 random read performance of the SU750 is fairly low regardless of the working set size. There's no clear indication of performance being affected by the size of the controller's caches for mapping information. Even when the random reads are confined to a mere 1GB slice of the drive, performance is no better than when reading from the entire drive. The Intel 660p is the only drive in this bunch that does show a clear performance drop, caused by it having a 256MB DRAM cache instead of the more typical 1GB for a 1TB drive.
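The 1GB-per-1TB rule of thumb can be reproduced with a short calculation. The sketch below assumes a flat mapping table with one entry per 4kB logical block and 4 bytes per entry; the 4-byte entry size, the function names, and the 32MB on-chip cache are assumptions for illustration, and real controllers may use different entry sizes or table layouts. Binary units are used to keep the arithmetic exact.

```python
KIB = 1024
MIB = 1024 ** 2
GIB = 1024 ** 3
TIB = 1024 ** 4


def mapping_table_bytes(capacity_bytes, block_bytes=4 * KIB, entry_bytes=4):
    """Size of a flat logical-to-physical table (entry_bytes is an assumption)."""
    return (capacity_bytes // block_bytes) * entry_bytes


def covered_bytes(cache_bytes, block_bytes=4 * KIB, entry_bytes=4):
    """How much of the drive's address space a mapping cache of this size covers."""
    return (cache_bytes // entry_bytes) * block_bytes


full_table = mapping_table_bytes(1 * TIB)   # table for a 1 TiB drive
quarter = covered_bytes(256 * MIB)          # a 256 MiB DRAM cache
on_chip = covered_bytes(32 * MIB)           # hypothetical 32 MiB on-chip cache

print(full_table // MIB, "MiB table;",
      quarter // GIB, "GiB covered by 256 MiB;",
      on_chip // GIB, "GiB covered by 32 MiB")
```

Under these assumptions, a 256MB cache holds mappings for only about a quarter of a 1TB drive, and a cache of a few tens of megabytes covers only a few tens of gigabytes.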

54 Comments


Billy Tallis - Saturday, December 7, 2019 - link

SSDs need to keep track of what physical location each logical block address is stored at. This info changes constantly because flash memory needs wear leveling, and this info needs to be accessed for every read or write operation the host system issues. Most SSDs use a flash translation layer that deals with 4kB chunks, which means the full address mapping table requires 1GB for each 1TB of storage. Mainstream SSDs use DRAM to hold this table, because it's much faster than doing an extra flash read before each read or write operation can be completed. DRAMless SSDs can cache a small portion of that table (typically a few MBs or tens of MBs) within the controller itself or using the NVMe Host Memory Buffer feature.

DRAMless SSDs can work as a boot drive, but they're slower than mainstream drives that have the full 1GB per 1TB DRAM buffer.

PaulHoule - Tuesday, December 10, 2019 - link

The block size of an SSD is usually larger than the block size presented to the OS. The SSD can only erase a large group of blocks at once, so it has a flash translation layer that needs to keep track of things like "Block X seen by the OS is really stored in Subblock Y of Physical Block Z". It has to access that data every time it reads or writes, so it helps for that data to be in DRAM.

DRAM is also good for write caching; under ordinary circumstances it is a big performance win to buffer writes to RAM before you really do them so you can bundle writes so the SSD can do them efficiently.

Current DRAMless SSDs keep the lookup tables on the SSD itself, which is slower than RAM.

There is a standard for an NVMe device to steal some RAM from the host, which might be a good option. Also there is a standard for NVMe zoned namespaces which would let the host manage the drive more directly, put that together with a revolution in the OS and you could get something which is simple, high performance, and cheap, but that revolution is happening in the data center now, not at the client.

Goodspike - Saturday, December 7, 2019 - link

What's with these brand names?

To me Adata means no data. Sandisk means without disk. These are not good names for storage devices!

Gills - Saturday, December 7, 2019 - link

Realtek abandoned me on Windows 10 sound drivers for the many Toshiba POS terminals I'm tasked with updating from Windows XP and 7, so I'm not jumping onboard with anything they do anytime soon.

supdawgwtfd - Saturday, December 7, 2019 - link

So going from one unsupported EOL O/S to another soon to be?

That doesn't seem like good management.

Gills - Saturday, December 7, 2019 - link

Worded that poorly, sorry - we're upgrading everything to Windows 10.

FunBunny2 - Saturday, December 7, 2019 - link

"So going from one unsupported EOL O/S to another soon to be? That doesn't seem like good management."

spend some time as the 'IT manager' at any small business; this sort of driving the infrastructure into the ground is SoP.

PeachNCream - Monday, December 9, 2019 - link

To be completely fair to RealTek, if your company's point-of-sale hardware originally shipped with XP, writing Win10 drivers for that audio hardware was probably not high on anyone's list of priorities. For point-of-sale computers manufactured and shipped with Windows 7, that might be more of a problem given the less obsolete nature of the equipment and yes, I understand that POS systems are expected to have a long service life, but XP was first up for sale in late 2001 and extended support ended in 2014.

Billy Tallis - Monday, December 9, 2019 - link

FYI, extended support for the last POS edition of XP only ended 8 months ago.

PeachNCream - Tuesday, December 10, 2019 - link

Ah thanks. I didn't realize that POS variants had a longer support lifespan.