The Intel 12th Gen Core i9-12900K Review: Hybrid Performance Brings Hybrid Complexity

by Dr. Ian Cutress & Andrei Frumusanu on November 4, 2021 9:00 AM EST

Today marks the official retail availability of Intel’s 12th Generation Core processors, starting with the overclockable versions this side of the New Year, and the rest in 2022. These new processors are the first widescale launch of a hybrid processor design for mainstream Windows-based desktops using the underlying x86 architecture: Intel has created two types of core, a performance core and an efficiency core, to work together and provide the best of performance and low power in a singular package. This hybrid design and new platform, however, have a number of rocks in the river to navigate: adapting Windows 10, Windows 11, and all sorts of software to work properly, but also the introduction of DDR5 at a time when DDR5 is still not widely available. There are so many potential pitfalls for this product, and we’re testing the flagship Core i9-12900K in a few key areas to see how it tackles them.

Let’s Talk Processors

Since August, Intel has been talking about the design of its 12th Generation Core processor family, also known as Alder Lake. We’ve already written over 16000 words on the topic, covering the fundamentals of each new core, how Intel has worked with Microsoft to improve Windows performance with the new design, as well as features like DDR5, chipsets, and overclocking. We’ll briefly cover the highlights here, but these two articles are worth the read for those that want to know more.

- Intel Architecture Day 2021: Alder Lake, Golden Cove, and Gracemont Detailed

- Intel 12th Gen Core Alder Lake for Desktops: CPUs, Chipsets, Power, DDR5, OC

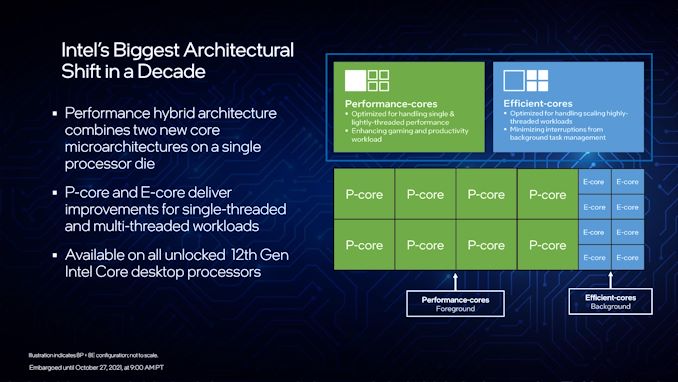

At the heart of Intel’s processors is a hybrid, or heterogeneous, core design. The desktop processor silicon will have eight performance cores (P-cores) and eight efficiency cores (E-cores), the latter in two groups of four. Each type of core is designed differently to optimize for its target, but both support the same software. The goal is that software that is not urgent runs on efficiency cores, while time-sensitive software runs on performance cores, and that has required a new management control between the processor and Windows to be developed so that Alder Lake can work at its best. That control is fully enabled in Windows 11, and Windows 10 can get most of the way there but doesn’t have all the bells and whistles for finer details – Linux support is in development.

The use of this hybrid design makes some traditional performance measurements difficult to compare. Intel states that individually the performance cores are +19% over 11th Generation, and the efficiency cores are around 10th Generation performance levels at much lower power. At peak performance Intel has showcased in slides that four E-cores will outperform two 6th Generation cores in both performance and power, with the E-core being optimized also for performance per physical unit of silicon. Alternatively, Intel can use all P-cores and all E-cores on a singular task, up to 241W for the Core i9 processor.

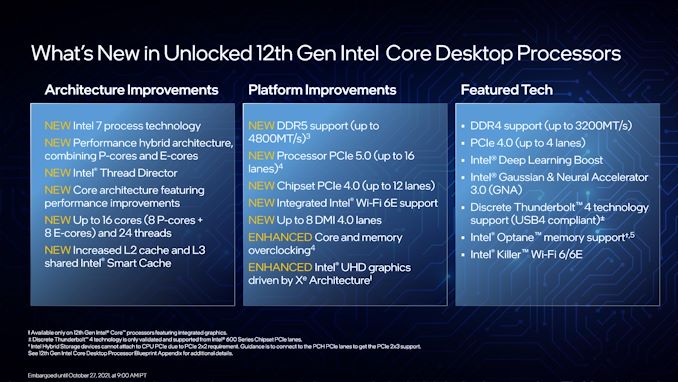

On top of all this, Intel is bringing new technology into the mix with 12th Gen Core. These processors will have PCIe 5.0 support, but also DDR5-4800 and DDR4-3200 support on the memory. This means that Alder Lake motherboards, using the new LGA1700 socket and Z690 chipsets, will be either DDR4 or DDR5 compatible. No motherboard will have slots for both (they’re not interchangeable), but as we are quite early in the DDR5 lifecycle, getting a DDR4 motherboard might be the only way for users to get hold of an Alder Lake system using their current memory. We test both DDR4 and DDR5 later on in the review to see if there is a performance difference.

A small word on power (see this article for more info) – rather than giving a simple ‘TDP’ value as in previous generations, which only specified the power at a base frequency, Intel is expanding to providing both a Base power and a Turbo power this time around. On top of that, Intel is also making these processors have ‘infinite Turbo time’, meaning that with the right cooling, users should expect these processors to run up to the Turbo power indefinitely during heavy workloads. Intel giving both numbers is a welcome change, although some users have criticized the decreasing turbo power for Core i7 and Core i5.

As we reported last week, here are the processors shipping today:

Intel 12th Gen Core, Alder Lake

| AnandTech | Cores P+E/T | E-Core Base (MHz) | E-Core Turbo (MHz) | P-Core Base (MHz) | P-Core Turbo (MHz) | IGP | Base W | Turbo W | Price (1ku) |
|---|---|---|---|---|---|---|---|---|---|
| i9-12900K | 8+8/24 | 2400 | 3900 | 3200 | 5200 | 770 | 125 | 241 | $589 |
| i9-12900KF | 8+8/24 | 2400 | 3900 | 3200 | 5200 | - | 125 | 241 | $564 |
| i7-12700K | 8+4/20 | 2700 | 3800 | 3600 | 5000 | 770 | 125 | 190 | $409 |
| i7-12700KF | 8+4/20 | 2700 | 3800 | 3600 | 5000 | - | 125 | 190 | $384 |
| i5-12600K | 6+4/16 | 2800 | 3600 | 3700 | 4900 | 770 | 125 | 150 | $289 |
| i5-12600KF | 6+4/16 | 2800 | 3600 | 3700 | 4900 | - | 125 | 150 | $264 |

Processors that have a K are overclockable, and those with an F do not have integrated graphics. The graphics on each of the non-F chips are Xe-LP graphics, the same as the previous generation.

At the top of the stack is the Core i9-12900K, with eight P-cores and eight E-cores, running at a maximum 241 W. Moving down to i7 gives eight P-cores and four E-cores at 190 W, and the Core i5 gives six P-cores and four E-cores at 150 W. We understand that future processors may have six P-core and zero E-core designs.
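The thread counts in the "Cores P+E/T" column can be reproduced with a little arithmetic. The sketch below assumes two threads per P-core (Hyper-Threading) and one thread per E-core; the function name `total_threads` is only an illustrative choice.

```python
# Thread count per SKU, assuming two threads per P-core (Hyper-Threading)
# and one thread per E-core.
def total_threads(p_cores: int, e_cores: int) -> int:
    return 2 * p_cores + e_cores

# (P-cores, E-cores) taken from the table of processors shipping today.
skus = {
    "i9-12900K": (8, 8),
    "i7-12700K": (8, 4),
    "i5-12600K": (6, 4),
}

for name, (p, e) in skus.items():
    print(f"{name}: {p}+{e} cores, {total_threads(p, e)} threads")
```

Under that assumption the three configurations come out at 24, 20, and 16 threads, matching the table.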

Compare at $550+

| AnandTech | Cores P+E/T | P-Core Base (MHz) | P-Core Turbo (MHz) | IGP | Base W | Turbo W | Price |
|---|---|---|---|---|---|---|---|
| R9 5950X | 16/32 | 3400 | 4900 | - | 105 | 142 | $799 |
| i9-12900K | 8+8/24 | 3200 | 5200 | 770 | 125 | 241 | $589* |
| R9 5900X | 12/24 | 3700 | 4800 | - | 105 | 142 | $549 |

\* AMD quotes RRP, Intel quotes 'tray' as 1000-unit sales. Retail is ~$650

The Core i9-12900K, the focus of this review today, is listed at a tray price of $589. Intel always lists tray pricing, which means ‘price if you buy 1000 units as an OEM’. The retail packaging is often another +5-10% or so, which means actual retail pricing will be nearer $650, plus tax. At that pricing it really sits between two competitive processors: the 16-core Ryzen 9 5950X ($799) and the 12-core Ryzen 9 5900X ($549).
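As a rough check on the retail estimate, the +5-10% uplift can be applied to the tray price directly. This is only a sketch of the arithmetic: the uplift range is the rule of thumb quoted above, not a published Intel figure, and `retail_range` is a hypothetical helper name.

```python
TRAY_PRICE_USD = 589  # Intel's listed 1000-unit price for the i9-12900K

def retail_range(tray, low=0.05, high=0.10):
    """Return the (low, high) retail estimate for a given tray price."""
    return tray * (1 + low), tray * (1 + high)

low_estimate, high_estimate = retail_range(TRAY_PRICE_USD)
print(f"Estimated retail: ${low_estimate:.0f} to ${high_estimate:.0f}")
```

The upper end of that range lands close to the ~$650 figure used in the comparison table.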

Let’s Talk Operating Systems

Suffice to say, from the perspective of a hardware reviewer, this launch is a difficult one to cover. Normally with a new processor we would run A vs B, and that’s most of the data we need aside from some specific edge cases. For this launch, there are other factors to consider:

- P-core vs E-core

- DDR5 vs DDR4

- Windows 11 vs Windows 10

Every new degree of freedom to test is arguably a doubling of testing, so in this case 2^3 means 8x more testing than a normal review. Fun times. But the point to drill down to here is the last one.
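To make the multiplication concrete, the three two-way choices can be enumerated as a test matrix. This is an illustrative sketch only; the axis names are labels chosen here, and the review itself does not run every combination.

```python
from itertools import product

# Each axis is one of the two-way choices listed above.
axes = {
    "cores": ["P-core", "E-core"],
    "memory": ["DDR5", "DDR4"],
    "os": ["Windows 11", "Windows 10"],
}

# Cartesian product of all axes: 2 * 2 * 2 combinations.
configs = list(product(*axes.values()))

print(len(configs))
for cfg in configs:
    print(" / ".join(cfg))
```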

Windows 11 is really new. So new in fact that performance issues on various platforms are still being fixed: recently a patch was put out to correct an issue with AMD L3 cache sizes, for example. Even when Intel presented data against AMD last week, it had to admit that it didn’t have the patch yet. Other reviewers have showcased a number of performance consistency issues with the OS when simply changing CPUs in the same system. The interplay of a new operating system that may improve performance, combined with a new heterogeneous core design, combined with new memory, and limited testing time (guess whose CPUs were held in customs for a week), means that for the next few weeks, or months, we’re going to be seeing new performance numbers and comparisons crop up.

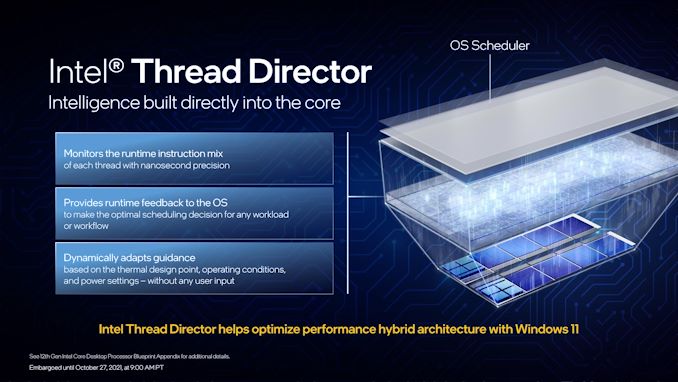

From Intel’s perspective, Windows 11 brings the full use of its Thread Director technology online. Normally the easiest way to run software on a CPU is to assume all the cores are the same - the advent of hyperthreading, favoured core, and other similar features meant that add-ins were applied to the operating system to help it work as intended at the hardware level. Hybrid designs add much more complexity, and so Intel built a new technology called Thread Director to handle it. At the base level, TD understands the CPU in terms of performance per core but also efficiency per core, and it can tell P-core from E-core from favoured P-core from a hyperthread. It gathers all this information, and tells the operating system what it knows – which threads need performance, which threads it thinks need efficiency, and which are the best candidates to move up or down that stack. The operating system is still king, and can choose to ignore what TD suggests, but Windows 11 can take all that data and make decisions depending on what the user is currently focused on, the priority level of those tasks, and additional software hooks from developers regarding priority and latency.

The idea is that with Windows 11, it all works. With Windows 10, it almost all works. The main difference, Intel told us, is that although Windows 10 can tell cores apart, and hyperthreads, it doesn’t really understand efficiency that well. So its decisions are made more with regard to performance requirements, rather than performance vs efficiency. At the end of the day, all this should mean to the user is that Windows 10 tries to minimize the run-to-run variation, but Windows 11 does it better. Ultimate best-case performance shouldn’t change in any serious way: a single thread on a P-core, or across several P-cores for example, should perform the same.

Let’s Talk Testing

This review is going to focus on these specific comparisons:

- Core i9-12900K on DDR5 vs the Competition

- Core i9-12900K on DDR5 vs Core i9-12900K on DDR4

- Power and Performance of the P-Core vs E-Core

- Core i9-12900K Windows 11 vs Windows 10

Normally when a new version of Windows is launched, I stay as far away from it as possible. On a personal level, I enjoy consistency and stability in my workflow, but also when it comes to reviewing hardware – being able to be confident in having a consistent platform is the only true way to draw meaningful conclusions over a sustained period. Nonetheless, when a new operating system is launched, there is always the call to move testing wholesale to a new platform. Windows 11 is Windows 10 with a new dress and some details moved around and improved, so it should be easier than most; however, I’m still going to wait until the bulk of those initial early adopter issues, especially those that might affect performance, are solved before performing a flat refresh of our testing ecosystem. Expect that to come in Q2 next year, where we will also be updating to NVMe testing, and soliciting updates for benchmarks and new tests to explore.

For our testing, we’re leveraging the following platforms:

Alder Lake Test Systems

| AnandTech | DDR5 | DDR4 |
|---|---|---|
| CPU | Core i9-12900K, 8+8 Cores, 24 Threads, 125W Base, 241W Turbo | Core i9-12900K, 8+8 Cores, 24 Threads, 125W Base, 241W Turbo |
| Motherboard | MSI Z690 Unify | MSI Z690 Carbon Wi-Fi |
| Memory | SK Hynix 2x32 GB DDR5-4800 CL40 | ADATA 2x32 GB DDR4-3200 CL22 |
| Cooling | MSI Coreliquid 360mm AIO | Corsair H150i Elite 360mm AIO |
| Storage | Crucial MX500 2TB | Crucial MX500 2TB |
| Power Supply | Corsair AX860i | Corsair AX860i |
| GPUs | Sapphire RX460 2GB (Non-Gaming Tests); NVIDIA RTX 2080 Ti (Gaming Tests), Driver 496.49 | Sapphire RX460 2GB (Non-Gaming Tests); NVIDIA RTX 2080 Ti (Gaming Tests), Driver 496.49 |
| Operating Systems | Windows 10 21H1; Windows 11 Up to Date; Ubuntu 21.10 (for SPEC Power) | Windows 10 21H1; Windows 11 Up to Date; Ubuntu 21.10 (for SPEC Power) |

All other chips for comparison were run as tests listed in our benchmark database, Bench, on Windows 10.

Highlights of this review

- The new P-core is faster than a Zen 3 core, and uses 55-65 W in ST

- The new E-core is faster than Skylake, and uses 11-15 W in ST

- Maximum all-core power recorded was 272 W, but usually below 241 W (even in AVX-512)

- Despite Intel saying otherwise, Alder Lake does have AVX-512 support (if you want it)!

- Overall Performance of i9-12900K is well above i9-11900K

- Performance against AMD overall is a mixed bag: win on ST, MT varies

- Performance per Watt of the P-cores still lags Zen3

- There are some fundamental Windows 10 issues (that can be solved)

- Don’t trust thermal software just yet, it says 100C but it’s not

- Linux idle power is lower than Windows idle power

- DDR5 gains shine through in specific MT tests, otherwise neutral to DDR4

474 Comments


Oxford Guy - Sunday, November 7, 2021

‘or maybe switch off their E-cores and enable AVX-512 in BIOS’

This from exactly the same person who posted, just a few hours ago, that it’s correct to note that that option can disappear and/or be rendered non-functional.

I am reminded of your contradictory posts about ECC where you mocked advocacy for it (‘advocacy’ being merely its mention) and proceeded to claim you ‘wish’ for more ECC support.

Once again, it’s helpful to have a grasp of what one actually believes prior to posting. Allocating less effort to posting puerile insults and more toward substance is advised.

mode_13h - Sunday, November 7, 2021

> This from exactly the same person who posted, just a few hours ago, that it’s correct to note that that option can disappear and/or be rendered non-functional.

You need to learn to distinguish between what Intel has actually stated vs. the facts as we wish them to be. In the previous post you reference, I affirmed your acknowledgement that the capability disappearing would be consistent with what Intel has actually said, to date.

In the post above, I was leaving open the possibility that *maybe* Intel is actually "cool" with there being a BIOS option to trade AVX-512 for E-cores. We simply don't know how Intel feels about that, because (to my knowledge) they haven't said.

When I clarify the facts as they stand, don't confuse that with my position on the facts as I wish them to be. I can simultaneously acknowledge one reality, while maintaining my own personal preference for a different reality.

This is exactly what happened with the ECC situation: I was clarifying Intel's practice, because your post indicated uncertainty about that fact. It was not meant to convey my personal preference, which I later added with a follow-on post.

Having to clarify this to an "Oxford Guy" seems a bit surprising, unless you meant like Oxford Mississippi.

> you mocked advocacy

It wasn't mocking. It was clarification. And your post seemed more to express befuddlement than expressive of advocacy. It's now clear that your post was a poorly-executed attempt at sarcasm.

Once again, it's helpful not to have your ego so wrapped up in your posts that you overreact when someone tries to offer a factual clarification.

Oxford Guy - Monday, November 8, 2021

I now skip to the bottom of your posts. If I see more of the same preening and posing, I spare myself the rest of the nonsense.

mode_13h - Tuesday, November 9, 2021

> If I see more of the same preening and posing, I spare myself the rest of the nonsense.

Then I suggest you don't read your own posts.

I can see that you're highly resistant to reason and logic. Whenever I make a reasoned reply, you always hit back with some kind of vague meta-critique. If that's all you've got, it can be seen as nothing less than a concession.

O-o-o-O - Saturday, November 6, 2021

Anyone talking about dumping x64 ISA?

I don't see AVX-512 as a good solution. Current x64 chips are putting so much complexity in CPU with irrational clock speed that migrating process-node further into Intel4 on would be a nightmare once again.

I believe most of the companies with in-house developers expect the end of Xeon-era is quite near, as most of the heavy computational tasks are fully optimized for GPUs and that you don't want coal burning CPUs.

Even if it doesn't come in 5 year time-frame, there's a real threat and have to be ahead of time. After all, x86 already extended its life 10+ years when it could have been discontinued. Now it's really a dinosaur. If so, non-server applications would follow the route as well.

We want more simple / solid / robust base with scalability. Not an unreliable boost button that sometimes do the trick.

SystemsBuilder - Saturday, November 6, 2021

I don't see AVX-512 that negatively: it is just the same as AVX2 but with double the vector size and a richer instruction set. I find it pretty cool to work with, especially when you've written some libraries that can take advantage of it. As I wrote before, it looks like Golden Cove got AVX-512 right based on what Ian and Andrei uncovered: 0 negative offset (i.e. running at full speed), power consumption not much more than AVX2, and it supports both FP16 and BF16 vectors! I think that's pretty darn good! I can work with that! Now I want my Sapphire Rapids with 32 or 48 Golden Cove P-cores! No, not fall 2022, I want it now! lol

mode_13h - Saturday, November 6, 2021

> When you optimize code today (for pre Alder lake CPUs) to take advantage of AVX-512 you need to write two paths (at least).

Ah, so your solution depends on application software changes, specifically requiring them to do more work. That's not viable for the timeframe of concern. And especially not if its successor is just going to add AVX-512 to the E-cores, within a year or so.

> There are many levels of AVX-512 support and effectively you need write customized

> code for each specific CPUID

But you don't expect the capabilities to change as a function of which thread is running, or within a program's lifetime! What you're proposing is very different. You're proposing to change the ABI. That's a big deal!

> It is absolutely possible and it will come with time.

Or not. ARM's SVE is a much better solution.

> I think in the future P and E cores might have more than just AVX-512 that is different

On Linux, using AMX will require a thread to "enable" it. This is a little like what you're talking about. AMX is a big feature, though, and unlike anything else. I don't expect to start having to enable every new ISA extension I want to use, or query how many hyperthreads actually support it - this becomes a mess when you start dealing with different libraries that have these requirements and limitations.

Intel's solution isn't great, but it's understandable and it works. And, in spite of it, they still delivered a really nice-performing CPU. I think it's great if technically astute users have/retain the option to trade E-cores for AVX-512 (via BIOS), but I think it's kicking a hornets nest to go down the path of having a CPU with asymmetrical capabilities among its cores.

Hopefully, Raptor Lake just adds AVX-512 to the E-cores and we can just let this issue fade into the mists of time, like other missteps Intel & others have made.

SystemsBuilder - Saturday, November 6, 2021

I too believe the AVX-512 exclusion in the E-cores is transitory. Next gen E-cores may include it and the issue goes away for AVX-512 at least (Raptor Lake?). Still there will be other features that P have but E won't have, so the scheduler needs to be adjusted for that. This will continue to evolve with every generation of E and P cores - because they are here to stay.

I read somewhere a few months ago, but right now I do not remember where (maybe on Anandtech, not sure), that the AVX-512 transistor budget is quite small (someone measured it on the die), so not really a big issue in terms of area.

AMX is interesting because where AVX-512 are 512 bit vectors, AMX is making that 512x512 bit matrices or tiles as intel calls it. Reading the spec on AMX you have BF16 tiles which is awesome if you're into neural nets. Of course gpus will still perform better with matrix calculations (multiplications) but the benefit with AMX is that you can keep both the general CPU code and the matrix specific code inside the CPU and can mix the code seamlessly and that's gonna be very cool - you cut out the latency between GPU and CPU (and no special GPU API's are needed). but of course you can still use the GPU when needed (sometimes it maybe faster to just do a matrix- matrix add for instance just inside the CPU with the AMX tiles) - more flexibility.

Anyway, I do think we will run into a similar issue with AMX as we have with AVX-512 on Alder Lake, and therefore again the scheduler needs to become aware of each core's capabilities and each piece of code needs to state what type of core it prefers to run on: AVX2, AVX-512, AMX capable core etc (the compiler's job). This way the scheduler can do the best job possible with every thread.

There will be some teething for a while but i think this is the direction it is going.

mode_13h - Sunday, November 7, 2021

The difference is that AMX is new. It's also much more specialized, as you point out. But that means that they can place new hoops for code to jump through, in order to use it.

It's very hard to put a cat like AVX-512 back in the bag.

SystemsBuilder - Saturday, November 6, 2021

To be clear, I also want to add how code is written today (in my organization) for the pre Alder Lake code base. Every time we write a code path for AVX-512 we need to write a fallback code path in case the CPU is not AVX-512 capable. This is standard (unless you can control the execution H/W 100% - i.e. the servers).

That does not mean all code has to be duplicated, but the inner loops where the 80%/20% rule (i.e. 20% of the code that consumes 80% of the time, which in my experience often becomes like the 99%/1% rule) comes into play are where you write two code paths:

1. for AVX-512 in case the CPU is capable, and

2. with just AVX2 in case the CPU is not capable

mostly this ends up being just as I said the inner most loops, and there are excellent broadly available templates to use for this.

Just from a pure comp sci perspective it is quite interesting to vectorize code and see the benefits - pretty cool actually.
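The two-path pattern described in this comment can be sketched as a one-time capability check followed by a function-pointer style dispatch. The sketch below is only an illustration in Python, not the commenter's code: it assumes Linux on x86, where `/proc/cpuinfo` exposes a `flags` line containing names such as `avx2` and `avx512f`, and the two kernels are hypothetical stand-ins for real vectorized inner loops (which would normally be written with compiler intrinsics in C or C++).

```python
def parse_cpu_flags(cpuinfo_text):
    """Collect the feature flags from /proc/cpuinfo-style text (Linux, x86)."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()  # no flags line found (e.g. non-x86 or non-Linux)


def read_cpuinfo(path="/proc/cpuinfo"):
    """Return the file contents, or an empty string if it is unavailable."""
    try:
        with open(path) as f:
            return f.read()
    except OSError:
        return ""


def kernel_avx512(xs):
    # Hypothetical stand-in for a 512-bit vectorized inner loop.
    return sum(xs)


def kernel_avx2(xs):
    # Hypothetical stand-in for the 256-bit fallback inner loop.
    return sum(xs)


def select_kernel(flags):
    """Pick the AVX-512 path if the CPU reports it, else the AVX2 fallback."""
    if "avx512f" in flags:
        return kernel_avx512
    return kernel_avx2  # assumes AVX2 is the baseline, as in the comment


# Decide once at startup, then call the chosen path in the hot loop.
kernel = select_kernel(parse_cpu_flags(read_cpuinfo()))
print(kernel.__name__, kernel(range(10)))
```

Because the check runs once per process, this pattern assumes every core reports the same capabilities, which is the assumption the hybrid-core discussion in this thread is concerned about.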