The Intel Optane Memory M10 (64GB) Review: Optane Caching Refreshed

by Billy Tallis on May 15, 2018 10:45 AM EST - Posted in

- SSDs

- Storage

- Intel

- PCIe SSD

- SSD Caching

- M.2

- NVMe

- Optane

- Optane Memory

AnandTech Storage Bench - Heavy

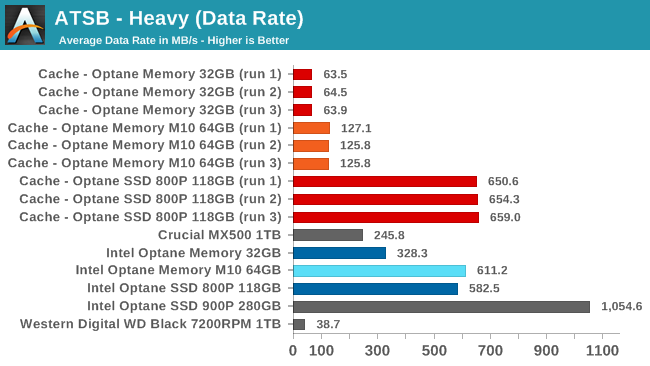

Our Heavy storage benchmark is proportionally more write-heavy than The Destroyer, but much shorter overall. The total writes in the Heavy test aren't enough to fill the drive, so performance never drops down to steady state. This test is far more representative of a power user's day-to-day usage, and is heavily influenced by the drive's peak performance. The Heavy workload test details can be found here. This test is run twice, once on a freshly erased drive and once after filling the drive with sequential writes.

The 118GB Optane SSD 800P is the only cache module large enough to handle the entirety of the Heavy test, with a data rate that is comparable to running the test on the SSD as a standalone drive. The smaller Optane Memory drives do offer significant performance increases over the hard drive, but not enough to bring the average data rate up to the level of a good SATA SSD.
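Why does cache capacity matter so much? A toy model helps. The sketch below assumes a plain LRU replacement policy (an assumption; the actual Optane Memory caching policy is not described in this review) and a hypothetical cyclic access trace. When the cache holds the whole working set, repeat passes are nearly all hits; when it is even slightly smaller, LRU evicts each block just before it is reused and the hit rate collapses.

```python
from collections import OrderedDict


def lru_hit_rate(trace, capacity):
    """Return the fraction of accesses in `trace` that hit an LRU cache
    holding at most `capacity` blocks (a toy model, not Intel's policy)."""
    cache = OrderedDict()  # block IDs ordered from least to most recently used
    hits = 0
    for block in trace:
        if block in cache:
            hits += 1
            cache.move_to_end(block)  # mark as most recently used
        else:
            cache[block] = None
            if len(cache) > capacity:
                cache.popitem(last=False)  # evict least recently used block
    return hits / len(trace)


# Hypothetical workload: 100 distinct blocks read in the same order 5 times.
working_set = 100
trace = list(range(working_set)) * 5

for capacity in (50, 99, 100, 150):
    print(f"cache={capacity:3d} blocks  hit rate={lru_hit_rate(trace, capacity):.0%}")
```

Real workloads are not perfectly cyclic, so hit rates usually degrade more gradually than this, but the cliff shows how a cache smaller than a test's working set can fall well short of standalone SSD performance.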

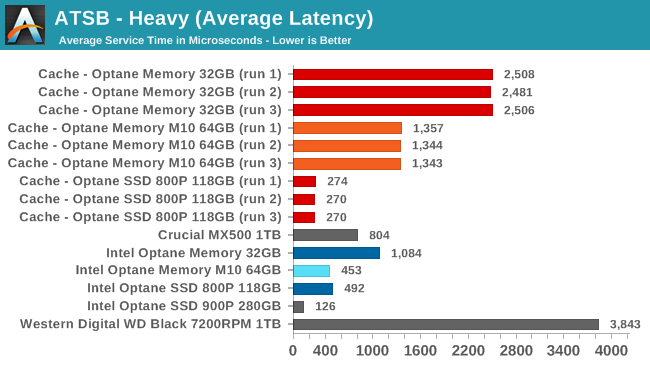

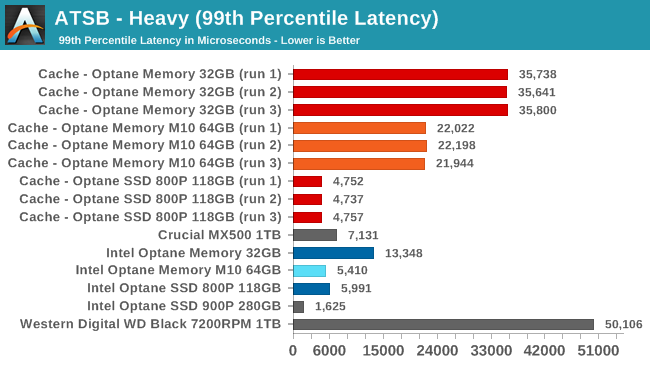

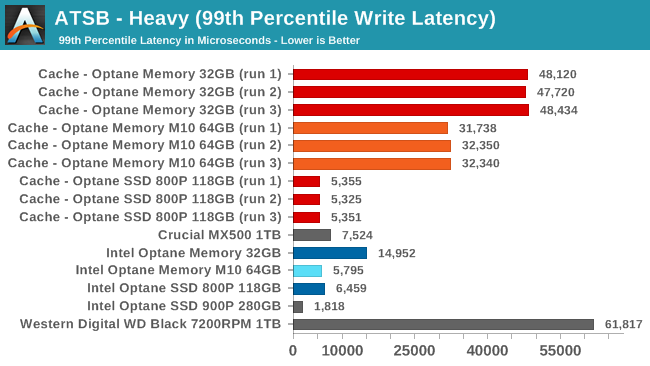

The 64GB Optane Memory M10 offers similar latency to the 118GB Optane SSD 800P when both are treated as standalone drives. In a caching setup the cache misses have a big impact on average latency and a bigger impact on 99th percentile latency, though even the 32GB cache still outperforms the bare hard drive on both metrics.
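To see why misses weigh so heavily on the average, treat each IO as either a cache hit served at Optane latency or a miss served at hard drive latency. The expected latency is then a weighted average, mean = h * t_hit + (1 - h) * t_miss, where h is the hit rate. The latencies below are round, hypothetical figures chosen for illustration, not measurements from this review.

```python
def mean_latency_us(hit_rate, hit_us, miss_us):
    """Expected per-IO latency for a two-tier cache: hits cost `hit_us`,
    misses cost `miss_us` (both in microseconds)."""
    return hit_rate * hit_us + (1.0 - hit_rate) * miss_us


# Hypothetical round numbers: ~10 us for an Optane hit, ~10 ms for a disk access.
OPTANE_US = 10.0
HDD_US = 10_000.0

for hit_rate in (1.0, 0.99, 0.95, 0.90):
    avg = mean_latency_us(hit_rate, OPTANE_US, HDD_US)
    print(f"hit rate {hit_rate:.0%}: mean latency {avg:,.1f} us")
```

In this model even a 1% miss rate pushes the average to more than ten times the hit latency, because each miss costs about a thousand hits' worth of time.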

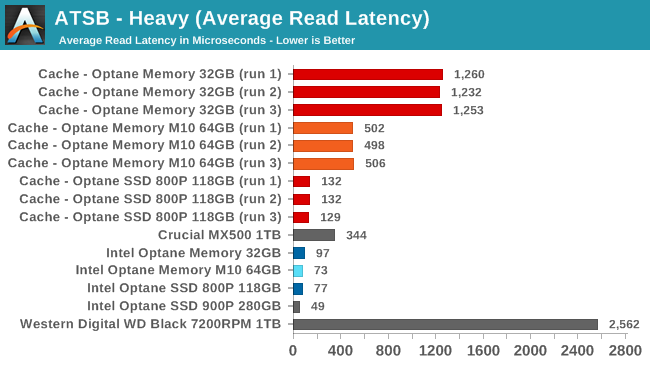

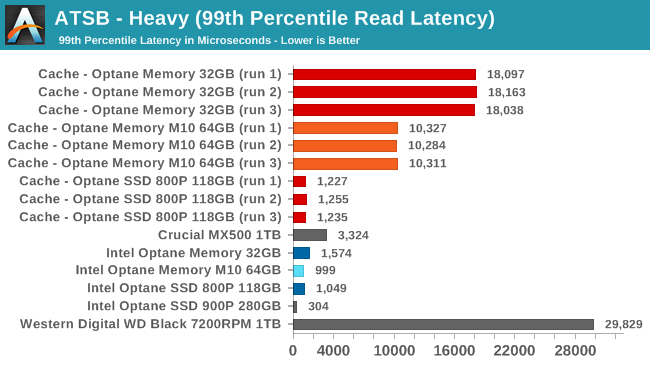

The average read latency scores show a huge disparity between standalone Optane SSDs and the hard drive. The 118GB cache performs almost as well as the standalone Optane drives. The 64GB cache averages slightly worse than the Crucial MX500 SATA SSD, and the 32GB cache averages about half the latency of the bare hard drive.

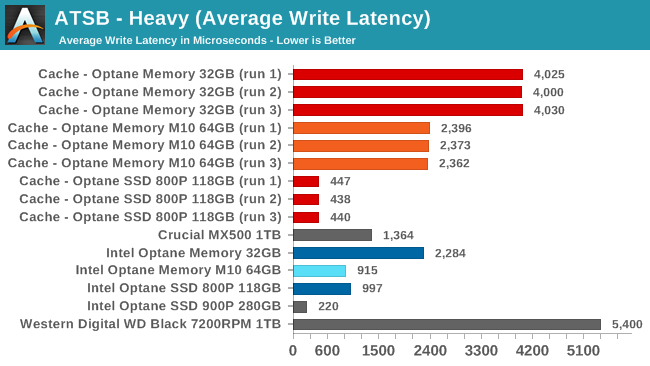

On the write side, the Optane M.2 modules don't perform anywhere near as well as the Optane SSD 900P, and the 32GB module has worse average write latency than the Crucial MX500. In caching configurations, the 118GB Optane SSD 800P has about twice the average write latency of the 900P while the smaller cache configurations are worse off than the SATA SSD.

The 99th percentile read and write latency scores rank about the same as the average latencies, but the impact of an undersized cache is much larger here. With 99th percentile read and write latencies in the tens of milliseconds, the 32GB and 64GB caches won't save you from noticeable stuttering.
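The 99th percentile is even less forgiving than the mean. In a simplified model where every hit is faster than every miss, once more than 1% of IOs miss the cache, the 99th percentile latency is itself a miss. The sketch below uses hypothetical two-tier latencies (assumed values, not measurements) and a simple nearest-rank percentile.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile: the smallest sample such that at least
    pct% of all samples are at or below it."""
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))  # 1-based rank
    return ordered[max(rank, 1) - 1]


def two_tier_samples(n, miss_fraction, hit_us=10.0, miss_us=10_000.0):
    """Build n hypothetical IO latencies: a share of slow disk misses plus
    fast cache hits (ordering is irrelevant, percentile() sorts them)."""
    misses = round(n * miss_fraction)
    return [miss_us] * misses + [hit_us] * (n - misses)


for miss_fraction in (0.005, 0.01, 0.02, 0.05):
    lat = two_tier_samples(10_000, miss_fraction)
    print(f"miss rate {miss_fraction:.1%}: p99 = {percentile(lat, 99):,.0f} us")
```

A miss rate that barely moves the median can therefore set the 99th percentile entirely, which matches the pattern of tens-of-milliseconds tail latencies from the undersized caches.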

96 Comments


jordanclock - Wednesday, May 16, 2018

Yeah, 64GB is ~59GiB.

IntelUser2000 - Tuesday, May 15, 2018

Billy,

Could you tell us why the performance is much lower? I was thinking Meltdown, but the 800P article says it has the patch enabled. The random performance here is 160MB/s for the 800P, but in the other article it gets 600MB/s.

Billy Tallis - Tuesday, May 15, 2018

The synthetic benchmarks in this review were all run under Windows so that they could be directly compared to results from the Windows-only caching drivers. My other reviews use Linux for the synthetic benchmarks. At the moment I'm not sure whether the big performance disparity is due entirely to Windows limitations, or whether there's some system tuning I could do in Windows to bring performance back up. My Linux testbed is set up to minimize OS overhead, but the Windows images used for this review all had stock, out-of-the-box settings.

IntelUser2000 - Tuesday, May 15, 2018

What is used for the random tests? IOmeter?

Billy Tallis - Tuesday, May 15, 2018

FIO version 3.6, Windows binaries from https://bluestop.org/fio/ (and Linux binaries compiled locally, for the other reviews). The only fio setting that had to change when moving the scripts from Linux to Windows was the ioengine option, which selects the APIs used for IO. On Linux, QD1 tests are done with synchronous IO and higher queue depths with libaio; on Windows, all queue depths used asynchronous IO.

In this review I also didn't bother secure erasing the drives between running the burst and sustained tests, but that shouldn't matter much for these drives.
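The ioengine split described in this reply can be expressed as a small helper that builds fio command lines. This is a hedged sketch: `sync`, `libaio`, and `windowsaio` are real fio ioengine names, but the function name, 4k block size, runtime, and device paths below are hypothetical placeholders, not the review's actual job parameters.

```python
def fio_randread_args(target, queue_depth, platform, runtime_s=60):
    """Build a hypothetical fio command line for a 4 KiB random-read test.

    ioengine choice follows the approach described above:
    - Linux: synchronous IO at QD1, libaio at higher queue depths
    - Windows: asynchronous IO (windowsaio) at every queue depth
    """
    if platform == "linux":
        engine = "sync" if queue_depth == 1 else "libaio"
    elif platform == "windows":
        engine = "windowsaio"
    else:
        raise ValueError(f"unsupported platform: {platform}")

    return [
        "fio",
        "--name=randread",
        f"--filename={target}",
        "--rw=randread",
        "--bs=4k",
        "--direct=1",  # unbuffered IO, bypassing the OS page cache
        f"--ioengine={engine}",
        f"--iodepth={queue_depth}",
        f"--runtime={runtime_s}",
        "--time_based",  # run for the full runtime regardless of size
    ]


# Hypothetical targets; substitute the actual device under test.
print(" ".join(fio_randread_args("/dev/nvme0n1", 1, "linux")))
print(" ".join(fio_randread_args(r"\\.\PhysicalDrive1", 32, "windows")))
```

Keeping the rest of the job identical and swapping only the ioengine is what makes the Linux and Windows numbers comparable in principle, though OS overhead still differs between the two.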

IntelUser2000 - Tuesday, May 15, 2018

So looking at the original Optane Memory review, the loss must be due to Meltdown, as it also gets 400MB/s.

Billy Tallis - Tuesday, May 15, 2018

The Meltdown+Spectre workarounds don't have anywhere near this kind of impact on Linux, so I don't think that's a sufficient explanation for what's going on with this review's Windows results.

Last year's Optane Memory review only did synthetic benchmarks of the drive as a standalone device, not in a caching configuration, because the drivers only supported boot drive acceleration at that time.

IntelUser2000 - Tuesday, May 15, 2018

The strange performance may also explain why it's sometimes faster in caching than when it's standalone.

Certainly the drive is capable of more than that, judging by raw media performance.

My point with the last review was that, whether it's standalone or not, the drive in the Optane Memory review is getting ~400MB/s, while in this review it's getting 160MB/s.

tuxRoller - Wednesday, May 16, 2018

As Billy said, you're comparing results from two different OSes.

Intel999 - Tuesday, May 15, 2018

Will there be a comparison between Intel's uber-expensive approach to speeding up boot times and AMD's free approach using StoreMI?