The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST. Posted in: Tablets, Intel, Samsung, Arm, Cortex A15, Smartphones, Mobile, SoCs

Modifying a Krait Platform: More Complicated

Modifying the Dell XPS 10 is a little more difficult than modifying Acer's W510 or the Surface RT. In both of those products there was only a single inductor in the path from the battery to the CPU block of the SoC. The XPS 10, however, uses a dual-core Qualcomm solution. Ever since Qualcomm started doing multi-core designs it has opted to use independent frequency and voltage planes for each core. While all of the A9s in Tegra 3 and both of the Atom cores used in the Z2760 run at the same frequency/voltage, each Krait core in the APQ8060A can run at its own voltage and frequency. As a result, two power delivery circuits are needed to feed the CPU cores. I've highlighted the two inductors Intel lifted in orange:

Each inductor was lifted and wired with a 20 mΩ resistor in series. The voltage drop across the 20 mΩ resistor was measured and used to calculate CPU core power consumption in real time. Unless otherwise stated, the graphs here represent the total power drawn by both CPU cores.
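To make the arithmetic behind that measurement concrete, here is a minimal Python sketch of the shunt calculation. Only the 20 mΩ value comes from the setup described above; the function names, the sample readings, and the assumption that each rail voltage is measured separately on the load side of the resistor are mine for illustration, not a description of Intel's actual tooling.

```python
SHUNT_OHMS = 0.020  # 20 mΩ sense resistor in series with each lifted inductor


def shunt_current_amps(v_drop):
    """Ohm's law: current through the sense resistor from its voltage drop."""
    return v_drop / SHUNT_OHMS


def rail_power_watts(v_drop, v_rail):
    """Power delivered to one rail: current through the shunt times rail voltage.

    v_rail is assumed to be measured on the load side of the resistor.
    """
    return shunt_current_amps(v_drop) * v_rail


def total_cpu_power_watts(readings):
    """Sum the per-core rails, matching the 'both CPU cores' totals in the graphs."""
    return sum(rail_power_watts(v_drop, v_rail) for v_drop, v_rail in readings)


# Hypothetical instantaneous readings: (voltage drop across shunt, rail voltage)
core0 = (0.010, 1.10)  # 10 mV drop on a 1.10 V rail
core1 = (0.002, 0.90)  # 2 mV drop on a 0.90 V rail
total = total_cpu_power_watts([core0, core1])
print(f"core0: {rail_power_watts(*core0):.3f} W")
print(f"core1: {rail_power_watts(*core1):.3f} W")
print(f"total: {total:.3f} W")
```

Because each Krait core has its own rail, the same calculation is simply repeated per inductor and summed; on the single-rail Atom and Tegra 3 platforms there would be only one reading.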

Unfortunately, that's not all that's necessary to accurately measure Qualcomm CPU power. If you remember back to our original Krait architecture article you'll know that Qualcomm puts its L2 cache on a separate voltage and frequency plane. While the CPU cores in this case can run at up to 1.5GHz, the L2 cache tops out at 1.3GHz. I remembered this little fact late in the testing process, and we haven't yet found the power delivery circuit responsible for Krait's L2 cache. As a result, the CPU-specific numbers for Qualcomm exclude any power consumed by the L2 cache. The total platform power numbers do include it, however, as they are measured at the battery.

The larger inductor in yellow feeds the GPU and it's instrumented using another 20 mΩ resistor.

Visualizing Krait's Multiple Power/Frequency Domains

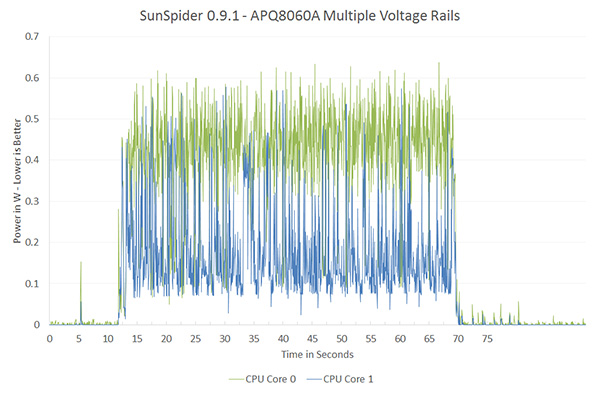

Qualcomm remains adamant about its asynchronous clocking with multiple voltage planes. The graph below shows power draw broken down by each core while running SunSpider:

SunSpider is a great benchmark to showcase exactly why Qualcomm has each core running on its own power/frequency plane. For a mixed workload like this, the second core isn't totally idle/power gated but it isn't exactly super active either. If both cores were tied to the same voltage/frequency, the second core would have higher leakage current than in this case. The counter argument would be that if you ran the second core at its max frequency as well it would be able to complete its task quicker and go to sleep, drawing little to no power. The second approach would require a very fast microcontroller to switch between v/f modes and it's unclear which of the two would offer better power savings. It's just nice to be able to visualize exactly why Qualcomm does what it does here.
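The trade-off between the two approaches can be sketched with a toy energy model. Everything in it is an assumption made for illustration: switching energy is modeled as C·V² per cycle, leakage as a simple conductance (power proportional to V²), a finished core is assumed to be power gated at zero cost, and the voltages, capacitance, and leakage coefficients are invented rather than measured Krait values. Only the 1.5GHz ceiling comes from the article.

```python
def task_energy_joules(cycles, freq_hz, volts, c_eff_farads, leak_siemens):
    """Energy to finish a fixed amount of work, then power gate.

    Assumes idle energy after completion is zero (ideal power gating).
    """
    run_time_s = cycles / freq_hz
    # Switching energy depends on V^2 and cycle count, not on frequency.
    dynamic_j = c_eff_farads * volts**2 * cycles
    # Leakage accrues for as long as the core stays powered.
    leakage_j = leak_siemens * volts**2 * run_time_s
    return dynamic_j + leakage_j


# Hypothetical light workload for the second core.
WORK_CYCLES = 3e8
C_EFF = 1e-9               # effective switched capacitance (made up)
LOW_POINT = (0.5e9, 0.9)   # (Hz, V): its own slower, lower-voltage plane
HIGH_POINT = (1.5e9, 1.2)  # (Hz, V): tied to the busy core's plane


def compare(leak_siemens):
    """Return (slow-and-low energy, race-to-sleep energy) in joules."""
    slow = task_energy_joules(WORK_CYCLES, *LOW_POINT, C_EFF, leak_siemens)
    fast = task_energy_joules(WORK_CYCLES, *HIGH_POINT, C_EFF, leak_siemens)
    return slow, fast


for leak in (0.1, 2.0):
    slow, fast = compare(leak)
    winner = "independent low plane" if slow < fast else "race to sleep"
    print(f"leak={leak}: slow={slow:.3f} J, fast={fast:.3f} J -> {winner}")
```

With these made-up numbers the winner flips as the leakage coefficient changes, which is one way to see why neither approach is obviously better in general. A real comparison would also need to account for the cost and latency of switching voltage/frequency states, which this sketch ignores.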

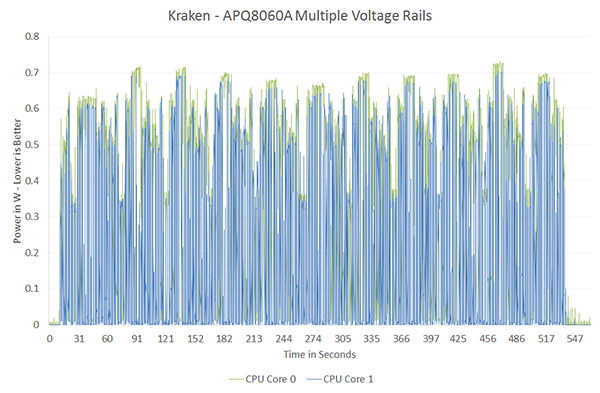

At the other end of the spectrum, however, is a benchmark like Kraken, where both cores are fairly active and the workload is balanced across both cores:

Here there's no real benefit to having two independent voltage/frequency planes; both cores would be served fine by running at the same voltage and frequency. Qualcomm would argue that the Kraken case is rare (single-threaded performance still dominates most of the user experience), and that the power savings in situations like SunSpider are what make asynchronous clocking worth it. This is a much bigger philosophical debate that would require far more than a couple of graphs to support, and it's not one that I want to get into here. I suspect that, given its current power management architecture, Qualcomm picked the best available solution for minimizing power consumption. It's more effort to manage multiple power/frequency domains, effort that I doubt Qualcomm would put in without seeing some benefit over the alternative. That being said, what works best for a Qualcomm SoC isn't necessarily what's best for a different architecture.

140 Comments


metafor - Friday, January 4, 2013 - link

It matters to a degree. Look at the CPU power chart, the CPU is constantly being ramped from low to high frequencies and back. Tegra automatically switches the CPU to a low-leakage core at some frequency threshold. This helps in almost all situations except for workloads that constantly keep the CPU at above that threshold, which, if you look at the graph, isn't the case.

That being said, that doesn't mean it'll be anywhere near enough to catch up to its Atom and Krait competitors.

jeffkro - Saturday, January 5, 2013 - link

The tegra 3 is also not the most powerful arm processor, intel obviously chose it to make atom look better.

npoe1 - Wednesday, January 9, 2013 - link

From one of Anand's articles: "NVIDIA recently revealed it was doing something similar to this with its upcoming Tegra 3 (Kal-El) SoC. NVIDIA outfitted its next-generation SoC with five CPU cores, although only a maximum of four are visible to the OS. If you’re running light tasks (background checking for email, SMS/MMS, twitter updates while your phone is locked) then a single low power Cortex A9 core services those needs while the higher performance A9s remain power gated. Request more of the OS (e.g. unlock your phone and load a webpage) and the low power A9 goes to sleep and the 4 high performance cores wake up."

http://www.anandtech.com/show/4991/arms-cortex-a7-...

jeffkro - Saturday, January 5, 2013 - link

A15 currently pulls too much power for smartphones but it makes for a great tablet chip as well as providing enough horsepower to power basic laptops.

djgandy - Friday, January 4, 2013 - link

The most obvious thing here is that PowerVR graphics are far superior to Nvidia graphics.

Wolfpup - Friday, January 4, 2013 - link

Actually no, that isn't obvious at all. Tegra 3 is a two year old design, on a 2 generations old process. The fact that it's still competitive today is just because it was so good to begin with. It'll be necessary to look at the performance and power usage of upcoming Nvidia chips on the same process to actually say anything "obvious" about them.

Death666Angel - Friday, January 4, 2013 - link

According to Wikipedia, the 545 is from January '10, so it's 3 years old now. The only current gen thing here is the Mali. The 225 is just a 220 with a higher clock, so it's about 1.5 to 2 years old.

djgandy - Friday, January 4, 2013 - link

And a 4/5 year old atom and the 2/3 year+ old SGX545 aren't old designs?

Look at the power usage of Nvidia. It's way beyond what is acceptable for any SOC design. Phones from 2 years ago used far less power on older processes than the 40nm T3! Just look at GLbenchmark battery life tests for the HTC One X and you'll see how poor the T3 GPU is. In fact just take your Nvidia goggles off and re-read this whole article.

Wolfpup - Friday, January 4, 2013 - link

Atom's basic design is old, the manufacturing process is newer. Tegra 3 is by default at the biggest disadvantage here. You accuse me of bias when it appears you're actually biased.

Chloiber - Tuesday, January 8, 2013 - link

First of all it's still 40nm.

Second of all: you mentioned the battery benchmarks yourself. Go look at the Nexus 4 review and look how the international version of the One X fares. Battery life on the T3 One X is very good, if you take into account that it's based on 40nm compared to 28nm of the One XL and uses 4 cores.