The NVIDIA GeForce GTX 750 Ti and GTX 750 Review: Maxwell Makes Its Move

by Ryan Smith & Ganesh T S on February 18, 2014 9:00 AM EST

Maxwell’s Feature Set: Kepler Refined

We’ll begin our look at the Maxwell architecture with its feature set, as this is the shorter and easier subject to cover.

In short, Maxwell only offers a handful of new features compared to Kepler. Kepler itself was a natural evolution of Fermi, further building on NVIDIA’s SM design and Direct3D 11 functionality. Maxwell in turn is a smaller evolution yet.

From a graphics/gaming perspective there will not be any changes. Maxwell remains a Direct3D 11.0 compliant design, supporting the base 11.0 functionality along with many (but not all) of the features required for Direct3D 11.1 and 11.2. NVIDIA as a whole has not professed much of an interest in being 11.1/11.2 compliant – they weren’t in a rush on 10.1 either – so this didn’t come as a great surprise to us. Nevertheless it is unfortunate, as NVIDIA carries enough market share that their support (or lack thereof) for a feature is often the deciding factor whether it’s used. Developers can still use cap bits to access the individual features of D3D 11.1/11.2 that Maxwell does support, but we will not be seeing 11.1 or 11.2 becoming a baseline for PC gaming hardware this year.

On the other hand this means that for the purposes of the GeForce family the GTX 750 series will fit nicely into the current stack, despite the architectural differences. From a consumer perspective, just as from a graphics perspective, Maxwell does not have any features that will explicitly set it apart from Kepler. All 700 series parts will support the same features, including NVIDIA ecosystem features such as GameWorks, NVENC, and G-Sync, so Maxwell is fully aligned with Kepler in that respect.

At a lower level the feature set has only changed to a slightly greater degree. I/O functionality is identical to Kepler, with 4 display controllers backing NVIDIA’s capabilities. HDMI 1.4 and DisplayPort 1.2 functionality join the usual DVI support, with Maxwell being a bit early to support any next generation display connectivity standards.

Video Encode & Decode

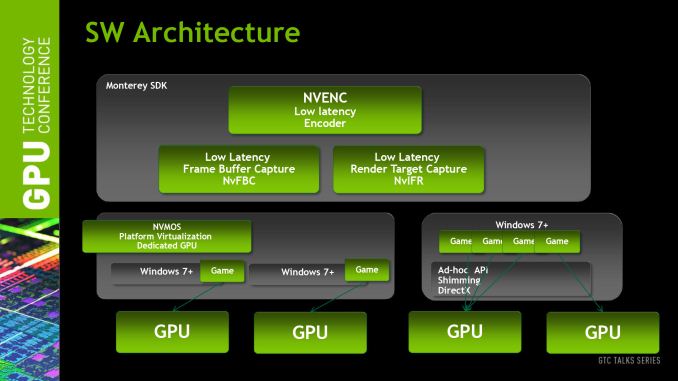

Meanwhile turning our gaze towards video encoding and decoding, we find one of the few areas that has received a feature upgrade on Maxwell. NVENC, NVIDIA’s video encoder, has received an explicit performance boost. NVIDIA tells us that Maxwell’s NVENC should be 1.5x-2x faster than Kepler’s NVENC, or in absolute terms capable of encoding speeds 6x-8x faster than real time.

For the purposes of the GTX 750 series, the impact of this upgrade will heavily depend on how NVENC is being leveraged. For real time applications such as ShadowPlay and GameStream, which by their very definition can’t operate faster than real time, the benefit will primarily be a reduction in encoding latency by upwards of several milliseconds. For offline video transcoding using utilities such as Cyberlink’s MediaEspresso, the greater throughput should directly translate into faster transcoding.
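To put rough numbers on those two cases, here is a minimal Python sketch. It is back-of-the-envelope arithmetic under stated assumptions, not anything NVIDIA has published: the 4x Kepler baseline is inferred by dividing the 6x-8x figure by the 1.5x-2x speedup claim, the 30 fps frame rate is assumed, and treating per-frame encode time as the reciprocal of throughput ignores any pipelining inside the encoder. The function and constant names are hypothetical.

```python
# Assumption: Kepler's NVENC runs at about 4x real time.
# Inferred from the quoted figures: 6x / 1.5 = 4x and 8x / 2 = 4x.
KEPLER_SPEED = 4.0
# Quoted range for Maxwell's NVENC, as multiples of real time.
MAXWELL_SPEEDS = (6.0, 8.0)


def frame_encode_ms(speed_multiplier: float, fps: float = 30.0) -> float:
    """Approximate time to encode one frame, in milliseconds.

    Assumes the encoder's throughput is fps * speed_multiplier frames
    per second and that latency is simply the reciprocal of that.
    """
    if speed_multiplier <= 0 or fps <= 0:
        raise ValueError("speed_multiplier and fps must be positive")
    return 1000.0 / (fps * speed_multiplier)


def transcode_seconds(video_seconds: float, speed_multiplier: float) -> float:
    """Approximate wall-clock time to transcode a clip offline."""
    if speed_multiplier <= 0:
        raise ValueError("speed_multiplier must be positive")
    return video_seconds / speed_multiplier


if __name__ == "__main__":
    kepler_ms = frame_encode_ms(KEPLER_SPEED)
    for speed in MAXWELL_SPEEDS:
        maxwell_ms = frame_encode_ms(speed)
        print(f"{speed:.0f}x: {maxwell_ms:.2f} ms per frame, "
              f"{kepler_ms - maxwell_ms:.2f} ms less than Kepler, "
              f"1 hour transcodes in {transcode_seconds(3600, speed) / 60:.1f} min")
```

Under these assumptions a frame takes about 8.3 ms to encode on Kepler and roughly 4.2-5.6 ms on Maxwell, a saving of about 3-4 ms per frame, while an hour of video transcodes in 7.5 to 10 minutes instead of 15.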

The bigger impact of this will be felt in mobile and server applications, when GM107 makes its introduction in those product lines. In the case of mobile usage the greater performance of Maxwell’s NVENC block directly corresponds with lower power usage, which will reduce the energy costs of using it when operating off of a battery. Meanwhile in server applications the greater performance will allow a sliding scale of latency reductions and an increase in the number of client sessions being streamed off of a single GPU, which for NVIDIA’s purposes means they will get to increase the client density of their GRID products.
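The relationship between encoder speed, power, and client density can be sketched in a few lines of Python. This is an idealized model under assumptions the source does not state: one encoder block time-sliced across real-time streams with no switching overhead, and the encoder drawing the same power whenever it is active. The function names are hypothetical.

```python
import math


def max_realtime_sessions(speed_multiplier: float) -> int:
    """Upper bound on real-time streams one encoder could time-slice.

    Assumes each stream needs exactly 1x real time of encoder capacity
    and that switching between streams costs nothing.
    """
    if speed_multiplier <= 0:
        raise ValueError("speed_multiplier must be positive")
    return math.floor(speed_multiplier)


def encoder_busy_fraction(speed_multiplier: float, sessions: int = 1) -> float:
    """Fraction of wall-clock time the encoder is active.

    With a single real-time stream, a lower value means the block can
    sit idle longer, which is the mobile power argument.
    """
    if speed_multiplier <= 0:
        raise ValueError("speed_multiplier must be positive")
    if sessions < 0 or sessions > speed_multiplier:
        raise ValueError("sessions must fit within the encoder's capacity")
    return sessions / speed_multiplier


if __name__ == "__main__":
    for label, speed in (("4x (inferred Kepler)", 4.0), ("8x (Maxwell, upper)", 8.0)):
        print(f"{label}: up to {max_realtime_sessions(speed)} sessions, "
              f"busy {encoder_busy_fraction(speed):.1%} of the time for one stream")
```

In this model a single real-time stream keeps an 8x encoder busy 12.5% of the time versus 25% for a 4x encoder, and the same doubling of speed doubles the ceiling on concurrent sessions. Real GRID deployments will be limited by other resources as well, so treat the session count as an upper bound only.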

Speaking of video, decoding is also receiving a bit of a lift. Maxwell’s VP video decode block won’t feature full H.265 (HEVC) support, but NVIDIA is telling us that they will offer partial hardware acceleration, relying on a mix of software and hardware to decode H.265. We had been hoping for full hardware support on Maxwell, but it looks like it’s a bit premature for that in a discrete GPU. The downside to this is that the long upgrade cycle for video cards – many users are averaging 4 years these days – means there’s a good chance that GTX 750 owners will still be on their GTX 750 cards when H.265 content starts arriving in force, so it will be interesting to see just how much of the process NVIDIA can offload onto their hardware as it stands.

H.265 aside, video decoding overall is getting faster and lower power. NVIDIA tells us that decoding is getting an 8x-10x performance boost due to the implementation of a local decoder cache and an increase in memory efficiency for video decoding. As for power consumption, alongside the aforementioned performance gains NVIDIA has implemented a new power state called “GC5” specifically for low usage tasks such as video playback. Unfortunately NVIDIA isn’t telling us much about how GC5 works, but as we’ll see in our benchmarks there is a small but distinct improvement in power consumption in the video decode process.

177 Comments

TheinsanegamerN - Tuesday, February 18, 2014 - link

Look at sapphire's 7750. superior in every way to the 6570, and is single slot low profile. and overclocks like a champ.

dj_aris - Tuesday, February 18, 2014 - link

Sure but its cooler is kind of loud. Definitely NOT a silent HTPC choice. Maybe a LP 750 would be better.

evilspoons - Tuesday, February 18, 2014 - link

Thanks for pointing that out. None of my local computer stores sell that, but I took a look on MSI's site and sure enough, there it is. They also seem to have an updated version of the same card being sold as an R7 250, although I'm not sure there's any real difference or if it's just a new sticker on the same GPU. Clock speeds, PCB design, and heat sink are the same, anyway.

Sabresiberian - Tuesday, February 18, 2014 - link

I'm hoping the power efficiency means the video cards at the high end will get a performance boost because they are able to cram more SMMs on the die than SMXs were used in Kepler solutions. This of course assumes the lower power spec means less heat as well.

I do think we will see a significant performance increase when the flagship products are released.

As far as meeting DX11.1/11.2 standards - it would be interesting to hear from game devs how much this affects them. Nvidia has never been all that interested in actually meeting all the requirements for Microsoft to give them official status for DX versions, but that doesn't mean the real-world visual quality is reduced. In the end what I care about is visual quality; if it causes them to lose out compared to AMD's offerings, I will jump ship in a heartbeat. So far that hasn't been the case though.

Krysto - Tuesday, February 18, 2014 - link

Yeah, I'm hoping for a 10 Teraflops Titan, so I can get to pair with my Oculus Rift next year!

Kevin G - Tuesday, February 18, 2014 - link

nVidia has been quite aggressive with the main DirectX version. They heavily pushed DX10 back in the day with the Geforce 8000/9000 series. They do tend to de-emphasize smaller updates like 8.1, 10.1, 11.1 and 11.2. This is partially due to their short life spans on the market before the next major update arrives.

I do expect this to have recently changed, as Windows is moving to a rapid release schedule and it'll be increasingly important to adopt these smaller iterations.

kwrzesien - Tuesday, February 18, 2014 - link

Cards on Newegg are showing DirectX 11.2 in the specs list along with OpenGL 4.4. Not that I trust this more than the review - we need to find out more.

JDG1980 - Tuesday, February 18, 2014 - link

The efficiency improvements are quite impressive considering that they're still on 28nm. TDP is low enough that AIBs should be able to develop fanless versions of the 750 Ti.

The lack of HDMI 2.0 support is disappointing, but understandable, considering that it exists virtually nowhere. (Has the standard even been finalized yet?) But we need to get there eventually. How hard will it be to add this feature to Maxwell in the future? Does it require re-engineering the GPU silicon itself, or just re-designing the PCB with different external components?

Given the increasing popularity of cryptocoin mining, some benchmarks on that might have been useful. I'd be interested to know if Maxwell is any more competitive in the mining arena than Kepler was. Admittedly, no one is going to be using a GPU this small for mining, but if it is competitive on a per-core basis, it could make a big difference going forward.

xenol - Tuesday, February 18, 2014 - link

I'm only slightly annoyed that NVIDIA released this as a 700 series and not an 800 series.

DanNeely - Tuesday, February 18, 2014 - link

I suspect that's an indicator that we shouldn't expect the rest of the Maxwell line to launch in the immediate future.