Samsung SSD 845DC EVO/PRO Performance Preview & Exploring IOPS Consistency

by Kristian Vättö on September 3, 2014 8:00 AM EST

Traditionally Samsung's enterprise SSDs have only been available to large server OEMs (e.g. Dell, EMC, and IBM). In other words, unless you were buying tens of thousands of drives, Samsung would not sell you any. This is a rather common strategy in the industry because it cuts the validation time as you only need to work with a handful of partners and systems, whereas channel products need to be validated for a variety of configurations and may require more aftermarket support as well.

However, back in June Samsung changed its strategy and released the 845DC EVO, Samsung's first enterprise SSD for the channel. A month later it was joined by the 845DC PRO, which targets more write-intensive workloads, whereas the EVO is geared more towards mixed and read-centric workloads. Samsung also sent us the PM853T, an OEM version of the 845DC EVO, so we now have the chance to see whether there is any difference between the channel and OEM versions.

Due to my hectic travel schedule over the past couple of months, I only have a performance preview today. I am in the process of testing the drives through our new enterprise suite, so a full review will follow soon, but I can already give a sneak peek of what we will be doing in the future. Strictly speaking, it is not a new test but a new way of looking at our existing test data.

In the past our IO consistency test has only looked at the average IOPS in one-second intervals, which is the industry standard and a fairly accurate way of describing performance. However, an average is still an average. Modern SSDs can easily process tens of thousands of IOs per second, so even within one second the variation in performance can be huge, and the average does not show that.

What we are doing is reporting two additional metrics that give us an idea of what is happening within every second: minimum IOPS and standard deviation. The former is simply the maximum response time translated into IOPS, which gives us the worst IOPS that the drive delivers at any given time. The latter gives us insight into the performance variation within every single second.
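To make the two new metrics concrete, here is a minimal Python sketch of how they could be computed for a single one-second interval. It is not our actual analysis code: the helper name `per_second_metrics`, taking the standard deviation over the IOPS implied by each individual IO, and converting a latency to IOPS with Little's Law (IOPS = queue depth / latency) are all assumptions made for illustration.

```python
import statistics

def per_second_metrics(latencies_s, queue_depth=1):
    """Hypothetical helper: summarize one second of IO completions.

    latencies_s: response time in seconds of every IO completed in that second.
    queue_depth: outstanding IOs, used to turn a latency into an IOPS figure
                 via Little's Law (IOPS = QD / latency); this is an assumption.
    """
    # Average IOPS for the interval: number of IOs completed in a one-second window
    avg_iops = len(latencies_s)
    # Minimum IOPS: the slowest (maximum) response time expressed as IOPS
    min_iops = queue_depth / max(latencies_s)
    # Spread: population standard deviation of the IOPS implied by each IO
    implied_iops = [queue_depth / lat for lat in latencies_s]
    stdev_iops = statistics.pstdev(implied_iops)
    return avg_iops, min_iops, stdev_iops

# Hypothetical second at QD1: 990 IOs at 1ms plus one 10ms outlier (1.0s in total)
sample = [0.001] * 990 + [0.010]
avg, worst, spread = per_second_metrics(sample)
print(avg, round(worst), round(spread, 1))
```

In this made-up sample the average still looks like roughly 1,000 IOPS, while the minimum IOPS exposes the single 10ms hiccup as a drop to 100 IOPS.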

Explaining Throughput, IOPS, and Latency

Before we get to our new benchmarks, there is something I want to discuss regarding storage benchmarking in general that has been bugging me for a while now. Throughput, IOPS, and latency are the three main metrics of storage performance, yet the relation between the three is not that well understood. If you take a look at any enterprise SSD spec sheet, you will very likely see all three metrics used, but what many do not know is that it does not have to be that way. Everything could be reported in megabytes per second, in IOs per second, or as latency (I will explain how shortly), but manufacturers have chosen to use all three because it suits their marketing better. To see the relation between the three metrics, we first need to understand what each metric really is.

Let's start with latency because it is ultimately the mother of all other metrics and the simplest to understand. Sometimes IO latency is referred to as response or service time, but ultimately all three terms measure the same thing: the time it takes for an IO to complete. Basically, the measurement starts when the OS sends a request to the drive and ends when the drive finishes processing the request. In the case of a read, the request is considered completed when the OS receives the data, and with writes completion is achieved when the drive informs the OS that it has received the data. Note that receiving the data does not mean that the drive has written the data to its final medium – the drive can send the completion command even though the data is sitting in a DRAM cache (which is why even hard drives can have very high burst speeds).

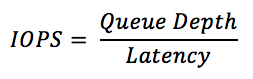

Now that we understand latency, we can have a look at IOPS, which is simply an abbreviation for IOs per second. IOPS describes how many IO operations the drive can handle per second and it is directly related to latency. For instance, if your drive has a constant latency of 1ms (0.001s), your drive can process 1,000 IOs per second. Pretty simple, right? But things get a bit more complicated when we incorporate queue depth into the equation.
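At a queue depth of one, the conversion is a single division in each direction. A trivial sketch, with hypothetical helper names:

```python
def iops_at_qd1(latency_s):
    """Hypothetical helper: IOPS of a drive that completes one IO at a time
    with a constant latency (in seconds)."""
    return 1.0 / latency_s

def latency_at_qd1(iops):
    """Hypothetical helper: the inverse, average latency in seconds at QD1."""
    return 1.0 / iops

print(iops_at_qd1(0.001))    # 1ms latency -> about 1,000 IOPS
print(latency_at_qd1(1000))  # 1,000 IOPS -> 0.001s (1ms)
```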

Let's assume that it still takes 1ms for our drive to process one IO, but now there are two IOs in the queue. Assuming that the OS sent both requests at the same time, the second request has to wait in the queue while the drive is still processing the first IO, so its latency cannot be 1ms. The drive only starts on the second IO once the first one completes at 1ms, so the latency of the second IO would be 2ms.

Do you see what happened there? We placed two IOs in the queue (i.e. doubled the queue depth) and suddenly our latency doubled, but our drive was still doing 1,000 IOPS. Basically, we asked the drive to process more IOs by adding them to the queue, but in this case our hypothetical drive could only process 1,000 IOs per second. If we added even more IOs to the queue, the latency would keep increasing because there would be more IOs waiting in the queue and it would still take 1ms to process each one of them.
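A small sketch of the hypothetical one-IO-at-a-time drive makes the effect visible. The first function lists the latency of each of several IOs queued at the same moment; the second uses Little's Law (our addition, not named above), which says that in steady state the average latency equals queue depth divided by IOPS. Both function names are made up for this example.

```python
def burst_completion_latencies(queue_depth, service_time_s=0.001):
    """Hypothetical helper: latency of each IO when queue_depth IOs arrive at
    the same moment at a drive that works on only one IO at a time.
    The k-th IO waits for the k-1 IOs ahead of it and then needs one service
    time itself, so its latency is k * service_time_s."""
    return [k * service_time_s for k in range(1, queue_depth + 1)]

def steady_state_latency(queue_depth, iops):
    """Hypothetical helper using Little's Law:
    outstanding IOs = IOPS * latency, so latency = QD / IOPS."""
    return queue_depth / iops

print(burst_completion_latencies(2))  # [0.001, 0.002]: the second IO sees 2ms
for qd in (1, 2, 4, 32):
    # IOPS is stuck at 1,000, so average latency grows linearly with queue depth
    print(f"QD{qd}: {steady_state_latency(qd, 1000) * 1000:.0f}ms")
```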

Fortunately SSDs can take advantage of parallelism by writing to multiple dies and planes at the same time, so in the real world any decent SSD would not be limited to 1,000 IOPS across all queue depths, meaning that the latency would not scale up like crazy as in our example above.
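The benefit of parallelism can be approximated with an equally simplified toy model. It assumes the drive can work on up to `parallel_units` IOs at once (standing in for dies and planes), each taking 1ms; the value of 8 units is purely hypothetical, and the model ignores ECC, wear leveling, garbage collection and every other real-world overhead.

```python
def parallel_drive(queue_depth, parallel_units, service_time_s=0.001):
    """Hypothetical toy model: up to parallel_units IOs are serviced at once,
    each taking service_time_s. Returns (IOPS, steady-state latency in s)."""
    busy_units = min(queue_depth, parallel_units)  # idle units add nothing
    iops = busy_units / service_time_s
    latency_s = queue_depth / iops                 # Little's Law
    return iops, latency_s

for qd in (1, 2, 8, 32):
    iops, lat = parallel_drive(qd, parallel_units=8)  # 8 units: hypothetical
    print(f"QD{qd}: {iops:,.0f} IOPS, {lat * 1000:.1f}ms")
```

In this model latency stays at 1ms while the queue depth is at or below the number of parallel units and only starts climbing once every unit is busy, which is the behavior the paragraph above describes in words.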

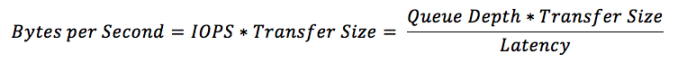

One thing that IOPS does not take into account is the transfer size. Obviously it takes longer to transfer and process 128KB of data than it takes to do the same for 4KB of data, so IOPS can be misleading. In other words, 1,000 IOPS on its own does not tell you anything useful because it could be with a transfer size of 512 bytes, which is only 500KB/s. At that rate your drive might only do a mere 125 IOPS with a 4KB transfer size, which would be pretty awful for a modern drive.

We need throughput to bring the transfer size into the equation. Throughput measures how many bytes are transferred per second, and given the speed of today's storage it is commonly measured in megabytes or gigabytes per second. Mathematically, throughput is simply IOPS times the transfer size: IOPS already tells us how many IOs happen per second, so all we need is the size of those IOs to know how many bytes are transferred.
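The IOPS/throughput relationship, including the 512-byte example, can be checked in a few lines. This sketch assumes that "KB" in transfer sizes means 1,024 bytes and that the drive is limited by bytes per second rather than by IO count; the helper names are hypothetical.

```python
KIB = 1024  # assumption: "KB" in transfer sizes means 1,024 bytes (4KB = 4,096 bytes)

def throughput_bytes_per_s(iops, transfer_size_bytes):
    """Hypothetical helper: throughput = IOPS x transfer size."""
    return iops * transfer_size_bytes

def iops_from_throughput(bytes_per_s, transfer_size_bytes):
    """Hypothetical helper: the same relationship solved for IOPS."""
    return bytes_per_s / transfer_size_bytes

# 1,000 IOPS of 512-byte IOs moves only 512,000 bytes/s (500KB/s)...
bw = throughput_bytes_per_s(1000, 512)
# ...which, at the same bytes per second, is a mere 125 IOPS of 4KB transfers
print(bw, iops_from_throughput(bw, 4 * KIB))  # 512000 125.0
```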

Putting The Secret Marketing Sauce Together

What we just learned is that all three specifications – latency, IOPS, and throughput – are ultimately the same. You can translate IOPS to MB/s and MB/s to latency as long as you know the queue depth and transfer size. As I mentioned in the beginning, the reason why we "need" all three is marketing.
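Because the three metrics are linked through transfer size and queue depth, converting between them takes two steps: the throughput formula plus Little's Law (our framing). The sketch below assumes decimal megabytes (10^6 bytes) and steady-state average latency; the helper names are hypothetical, and the 32-deep queue in the usage lines is an illustrative value, not a figure from any spec sheet.

```python
MB = 10**6  # assumption: decimal megabytes

def latency_from_throughput(mb_per_s, transfer_size_bytes, queue_depth):
    """Hypothetical helper: MB/s -> IOPS -> average latency in seconds."""
    iops = mb_per_s * MB / transfer_size_bytes  # throughput = IOPS x size
    return queue_depth / iops                   # Little's Law

def throughput_from_latency(latency_s, transfer_size_bytes, queue_depth):
    """Hypothetical helper: average latency in seconds -> IOPS -> MB/s."""
    iops = queue_depth / latency_s              # Little's Law
    return iops * transfer_size_bytes / MB      # throughput = IOPS x size

# Illustrative only: 500MB/s of 128KB (131,072-byte) transfers, 32 IOs outstanding
lat = latency_from_throughput(500, 128 * 1024, 32)
print(f"{lat * 1000:.2f}ms")                                      # 8.39ms
print(f"{throughput_from_latency(lat, 128 * 1024, 32):.0f}MB/s")  # back to 500MB/s
```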

Take 128KB sequential performance for instance. 500MB/s sounds much better than ~4,000 IOPS because the 4KB random write IOPS for the same SSD can be 90,000. On the other hand, 90,000 IOPS with 4KB transfer size is only ~370MB/s, so from a marketing perspective it is much better to say that the drive does 500MB/s 128KB sequential and 90,000 IOPS 4KB random.
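Here is the arithmetic behind those two figures. How closely it matches the rounded numbers above depends on unit conventions: this sketch assumes decimal megabytes and binary kilobytes, which gives about 3,815 IOPS and 369MB/s; with binary megabytes the first figure becomes exactly 4,000 IOPS and the second about 352MB/s.

```python
MB = 10**6   # assumption: decimal megabytes per second
KIB = 1024   # assumption: "KB" transfer sizes are binary (128KB = 131,072 bytes)

seq_iops = 500 * MB / (128 * KIB)   # 128KB sequential at 500MB/s, as IOPS
rand_mbps = 90_000 * 4 * KIB / MB   # 4KB random at 90,000 IOPS, as MB/s
print(f"{seq_iops:,.0f} IOPS, {rand_mbps:.0f}MB/s")  # 3,815 IOPS, 369MB/s
```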

Latency is no different. It is just another best case scenario figure that manufacturers report to make their product look better. 20µs latency sounds brilliant until you read the actual data sheet and realize that it is often measured with a sequential 4KB transfer at a queue depth of one. That is the best case scenario, because the latency of a random 4KB transfer at a queue depth of 32 can easily be several milliseconds, which is far from the stated 20µs. If the latency were truly 20µs for a random 4KB transfer at a queue depth of 32, the drive could do 1,600,000 IOPS!
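The 1,600,000 IOPS figure follows from Little's Law (our framing, not the manufacturer's): average IOPS equals the number of outstanding IOs divided by the average latency. A minimal sketch with a hypothetical helper name:

```python
def implied_iops(latency_s, queue_depth):
    """Hypothetical helper, Little's Law: IOPS = outstanding IOs / average latency."""
    return queue_depth / latency_s

print(f"{implied_iops(20e-6, 1):,.0f} IOPS")   # QD1:  50,000 IOPS
print(f"{implied_iops(20e-6, 32):,.0f} IOPS")  # QD32: 1,600,000 IOPS
```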

The reason I wanted to cover this is to help everyone understand what the storage metrics really mean and the relationship between them. I have found this to be fairly poorly understood and I want to fix that. We do not necessarily need all three metrics because they just allow for misleading marketing (like the latency example above) and I believe the best way to fix the marketing is to educate the buyers to take the marketing with a grain of salt (or at least read the fine print to see if the figure is meaningful at all).

Test Setup

| Component | Configuration |
|---|---|
| CPU | Intel Core i7-4770K running at 3.5GHz (Turbo & EIST enabled, C-states disabled) |
| Motherboard | ASUS Z87 Deluxe (BIOS 1707) |
| Chipset | Intel Z87 |
| Chipset Drivers | Intel 9.4.0.1026 + Intel RST 12.9 |
| Memory | Corsair Vengeance DDR3-1866 2x8GB (9-10-9-27 2T) |
| Graphics | Intel HD Graphics 4600 |
| Graphics Drivers | 15.33.8.64.3345 |
| Desktop Resolution | 1920 x 1080 |
| OS | Windows 7 x64 |

- Thanks to Intel for the Core i7-4770K CPU

- Thanks to ASUS for the Z87 Deluxe motherboard

- Thanks to Corsair for the Vengeance 16GB DDR3-1866 DRAM kit, RM750 power supply, Hydro H60 CPU cooler and Carbide 330R case

31 Comments


LiviuTM - Wednesday, September 3, 2014

Great article, Kristian. I enjoyed finding out more about latency, IOPS and throughput and the relationship between them.

Keep up the good work :)

Chapbass - Wednesday, September 3, 2014

I would like to echo this statement. Some of the heavy technical stuff makes my eyes glaze over at times, but this was so well written that I really got into it. Awesome article, Kristian.

romrunning - Wednesday, September 3, 2014

"The only difference between the 845DC EVO and PM853T is the firmware and the PM853T is geared more towards sustained workloads, which results in slightly higher random write speed (15K IOPS vs 14K IOPS)."

Chart for PM853T shows 14K for Random Writes, so likely needs to be corrected.

Kristian Vättö - Wednesday, September 3, 2014

Good catch, I was waiting for Samsung to send me the full data sheet for the PM853T but I never got it, so I accidentally left the 845DC EVO specs there. I've now updated it with the specs I have and with some additional commentary.

JellyRoll - Wednesday, September 3, 2014

The only issue with calculating performance as listed on the first page is that it assumes that the SSD works perfectly in all aspects. No ECC, no wear leveling, no garbage collection. None of these are true. Even without those factors no SSD will ever behave absolutely perfectly in every aspect at all times....anything but. That is why there is so much variation between vendors. It would be impossible to calculate performance using that method with an SSD in the real world.

Kristian Vättö - Wednesday, September 3, 2014

Of course real world is always different because the transfer size and queue depth are constantly changing and no SSD behaves perfectly. I mentioned that it is a hypothetical SSD and obviously no real drive would have a constant latency of 1ms or perfect transfer size scaling. The goal was to demonstrate how the metrics are related and it is easier with concrete, albeit hypothetical, examples.

hrrmph - Wednesday, September 3, 2014

I think you nailed it on the theoretical stuff (the relationships between the parameters), and presented it well (easy to understand).

This has been bugging me for a while too, although I won't pretend to have gotten it figured out - it's just that I keep getting pickier in my lab notes and shopping specs about things like queue depth for a given size transfer for the specified performance. Leave any condition or parameter out, and the specs seem kinda useless. Leave everything in, and then I wonder which ones are pertinent to my usage scenarios.

Now, your explanation will have me questioning the manufacturer's motives for the selection of each unit of measure chosen for each listed spec. Who says this field of endeavor doesn't lead to obsession? :)

If any of the specs *are* pertinent to my usage scenarios, then I wonder which ones are *most* pertinent for which of my usage scenarios (laptop, versus general desktop, versus high powered workstation).

Any relationships that you discover or methods that you develop for the charts to help explain it better are most welcome. I know this is an over-simplification, but I would guess that most workstation users want to know:

- Is my drive limiting my performance (why did that operation stutter or lag, or why does it take so long - was it the drive)?

- Is there anything I can do about it that I can afford (mainly, what can I replace it with - new controller card, newer, better designed SSDs, better racks, cables, etc.)?

I am pleased that you are helping us out by further dissecting performance consistency / variation. I suspect that although SSDs are an order of magnitude (or more) better than HDDs at many tasks, the "devil is in the details," and that there is a reason that many SSD equipped machines still "hiccup," fairly frequently (although not nearly as often or as bad as HDD equipped machines). I also suspect that drive sub-systems are still one of the most common weaker links that is responsible for such hiccups.

I am particularly interested in the (usually) brutally difficult small file size tests. These tests seem to be able to bring even the best of machines to a crawl, and any device (ie: SSD) that can help performance on those tests seems to be very likely to be noticeable to the end user.

If you do indeed find "let downs" in performance consistency (or any other drive related performance spec), then maybe the manufacturers will work to improve upon those weaknesses until we get "buttery smooth" performance...

...or at least until we can definitively start looking at other sub-systems (compute, memory, I/O, etc.) to solve the hiccups.

My introductory courses were on 8086 computers running DOS. I don't remember them often stopping to think about anything... until I started "hitting" the disks heavily. The more things change, the more I suspect they stay the same ;)

So I keep allocating more of my budget to disks and disk sub-systems than anything else. AT's articles are thus *very* helpful in "aiming" that budget and I hope you have some revelations for us soon that show which products are worth the money.

iwod - Wednesday, September 3, 2014

I have been wondering for a bit, why hasn't Enterprise switched to some other interconnect? Surely they could do PCI-E and have it directly connected to the CPU (assuming they don't need those lanes for GPUs or other things).

And I have been seeing Samsung being extremely aggressive in the web hosting market, where Intel is lagging behind.

FunBunny2 - Wednesday, September 3, 2014

"real" enterprise has been running SAS and FibreChannel for rather a long time. InfiniBand every now and again. To the extent that enterprise buys X,000 drives to parcel out to offices and such, then that's where the SATA drives go. But that's not really enterprise storage. Real enterprise SSD/flash/etc. doesn't have a list price (well, only in the sense that your car did) and I'd wager that not one of the enterprise SSD/flash companies (and no, Intel doesn't count) has ever offered up a sample to AnandTech.

rossjudson - Thursday, September 4, 2014

Fusion-io (and others) have been doing precisely this (PCIe connected flash storage) for a number of years. They are currently producing modules with up to 6TB of storage.