The Apple iPad Pro Review

by Ryan Smith, Joshua Ho & Brandon Chester on January 22, 2016 8:10 AM EST

SoC Analysis: On x86 vs ARMv8

Before we get to the benchmarks, I want to spend a bit of time talking about the impact of CPU architectures at a moderate level of technical depth. At a high level, there are a number of peripheral issues when it comes to comparing these two SoCs, such as the quality of their fixed-function blocks. But when you look at what consumes the vast majority of the power, it turns out that the CPU is competing with things like the modem/RF front-end and the GPU.

x86-64 ISA registers

Probably the easiest place to start when we’re comparing things like Skylake and Twister is the ISA (instruction set architecture). This subject alone is probably worthy of an article, but the short version for those who aren't really familiar with this topic is that an ISA defines how a processor should behave in response to certain instructions, and how these instructions should be encoded. For example, if you were to add together two integers held in the EAX and EDX registers, x86-32 dictates that this instruction would be encoded as 01d0 in hexadecimal. In response to this instruction, the CPU would add the value in the EDX register to the value in the EAX register and leave the result in the EAX register.
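To make the encoding example concrete, here is a minimal Python sketch that decodes those two bytes. It handles only the register-to-register form of opcode 0x01 (ADD r/m32, r32), where the second byte is a ModRM byte whose `reg` field names the source and whose `r/m` field names the destination. The helpers `decode_add_rm32_r32` and `execute_add` are hypothetical names for illustration, not part of any library.

```python
# ModRM register numbering for 32-bit operands (values 0-7).
REG32 = ["EAX", "ECX", "EDX", "EBX", "ESP", "EBP", "ESI", "EDI"]


def decode_add_rm32_r32(code: bytes) -> tuple[str, str]:
    """Decode opcode 0x01 (ADD r/m32, r32) with a register-direct ModRM byte.

    Returns (destination, source). Any other form raises ValueError.
    """
    if len(code) != 2 or code[0] != 0x01:
        raise ValueError("expected opcode 0x01 followed by one ModRM byte")
    modrm = code[1]
    mod = modrm >> 6            # bits 7-6: 0b11 means both operands are registers
    reg = (modrm >> 3) & 0b111  # bits 5-3: the source register
    rm = modrm & 0b111          # bits 2-0: the destination register
    if mod != 0b11:
        raise ValueError("memory operands are not handled in this sketch")
    return REG32[rm], REG32[reg]


def execute_add(regs: dict, dst: str, src: str) -> None:
    """32-bit add with wraparound; only the destination register changes."""
    regs[dst] = (regs[dst] + regs[src]) & 0xFFFFFFFF


dst, src = decode_add_rm32_r32(bytes.fromhex("01d0"))
regs = {"EAX": 2, "EDX": 3}
execute_add(regs, dst, src)
print(f"ADD {dst}, {src} ->", regs)  # ADD EAX, EDX -> {'EAX': 5, 'EDX': 3}
```

Decoding 0xD0 gives mod = 0b11 (register direct), reg = 0b010 (EDX) and r/m = 0b000 (EAX), which is why the sum ends up in EAX while EDX is left unchanged.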

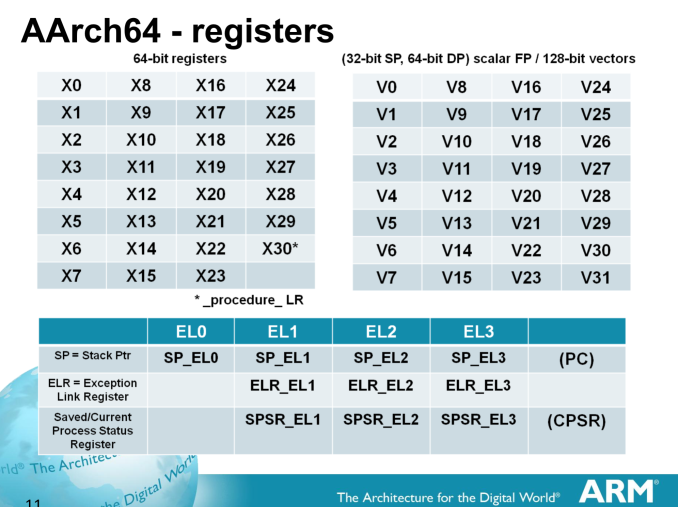

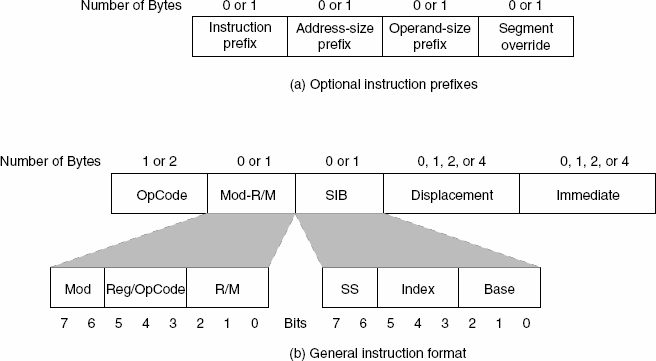

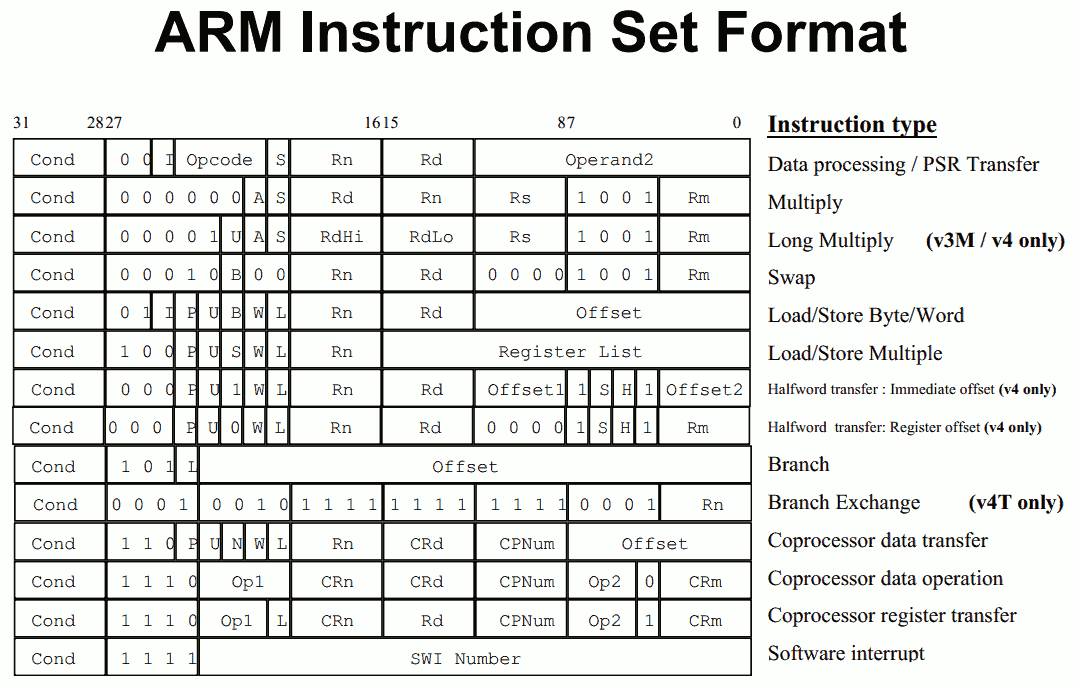

The fundamental difference between x86 and ARM is that x86 is a relatively complex ISA, while ARM is relatively simple by comparison. One key difference is that ARM uses fixed-length instruction encodings. In ARMv8-A's AArch64 state every instruction is 32 bits long, and the same is true of the ARM instruction set in ARMv7-A (and in ARMv8-A's AArch32 state). The exception is Thumb mode: the original Thumb instructions are 16 bits long, while Thumb-2 mixes 16-bit and 32-bit instructions, which makes it a variable-length ISA that shares some of the trade-offs of variable-length encoding. It’s important to make a distinction between instruction and data here, because even though AArch64 uses 32-bit instructions, the register width is 64 bits, which is what determines things like how much memory can be addressed and the range of values that a single register can hold. By comparison, Intel’s x86 ISA has variable-length instructions. In both x86-32 and x86-64/AMD64, each instruction can range anywhere from 8 to 120 bits (1 to 15 bytes) long depending upon how the instruction is encoded.
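The spread of x86 lengths can be illustrated with a handful of real x86-64 encodings. The Python sketch below simply counts the bytes in each sample and checks them against the 15-byte architectural limit; the sample list is our own choice for illustration (written in Intel syntax), and A64 is represented only by its fixed 4-byte width.

```python
X86_MAX_BYTES = 15  # architectural upper limit on one x86 instruction's length
A64_BYTES = 4       # every AArch64 (A64) instruction is one 32-bit word

# A few real x86-64 encodings (hex), Intel syntax, chosen only to show the spread.
X86_SAMPLES = {
    "nop": "90",
    "add eax, edx": "01d0",
    "add rdx, qword ptr [rax+rbx*2]": "48031458",
    "mov eax, 1": "b801000000",
    "mov rax, 0x1122334455667788": "48b88877665544332211",
}


def x86_lengths() -> dict[str, int]:
    """Byte length of each sample encoding."""
    return {asm: len(bytes.fromhex(hx)) for asm, hx in X86_SAMPLES.items()}


for asm, n in x86_lengths().items():
    assert 1 <= n <= X86_MAX_BYTES
    print(f"{asm:32} {n:2} bytes = {8 * n:3} bits")
print(f"x86: 8 to {8 * X86_MAX_BYTES} bits per instruction; A64: always {8 * A64_BYTES} bits")
```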

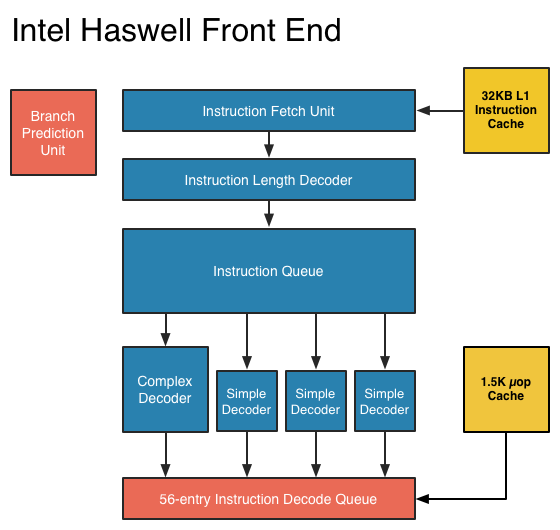

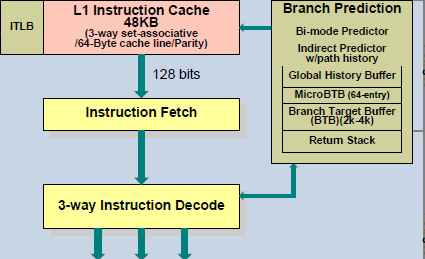

At this point, it might be evident that on the implementation side of things, a decoder for x86 instructions is going to be more complex. For a CPU implementing the ARM ISA, because the instructions are of a fixed length the decoder simply reads instructions 2 or 4 bytes at a time. On the other hand, a CPU implementing the x86 ISA would have to determine how many bytes to pull in at a time for an instruction based upon the preceding bytes.
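The sketch below contrasts the two cases. Splitting a fixed-length stream is a simple slice every 4 bytes, while the variable-length splitter cannot know where the next instruction starts until it has worked out the length of the current one. The `x86_toy_length` helper is hypothetical and understands only four opcodes; a real x86 length decoder also has to handle prefixes, multi-byte opcodes, SIB bytes, displacements and immediates of several sizes.

```python
def split_fixed(code: bytes, width: int = 4) -> list[bytes]:
    """Fixed-length ISA: boundaries are known up front (offsets 0, 4, 8, ...)."""
    if len(code) % width:
        raise ValueError("code length is not a multiple of the instruction width")
    return [code[i:i + width] for i in range(0, len(code), width)]


def x86_toy_length(code: bytes, pos: int) -> int:
    """Length of the instruction at `pos` for a tiny, hypothetical x86 subset.

    Handles only 0x90 (nop), 0xC3 (ret), 0xB8-0xBF (mov r32, imm32) and
    0x01 with a register-direct ModRM byte (add r/m32, r32).
    """
    op = code[pos]
    if op in (0x90, 0xC3):
        return 1                      # opcode only
    if 0xB8 <= op <= 0xBF:
        return 5                      # opcode + 4-byte immediate
    if op == 0x01 and pos + 1 < len(code) and code[pos + 1] >> 6 == 0b11:
        return 2                      # opcode + ModRM
    raise ValueError(f"opcode {op:#04x} at offset {pos} not handled in this sketch")


def split_variable(code: bytes) -> list[bytes]:
    """Variable-length ISA: each boundary depends on decoding the previous instruction."""
    out, pos = [], 0
    while pos < len(code):
        n = x86_toy_length(code, pos)  # must finish before the next start is known
        if pos + n > len(code):
            raise ValueError("truncated instruction")
        out.append(code[pos:pos + n])
        pos += n
    return out


print([i.hex() for i in split_fixed(bytes(range(8)))])
print([i.hex() for i in split_variable(bytes.fromhex("b80100000001d0c3"))])
```

The loop in `split_variable` is inherently sequential, which is why real x86 front ends spend extra logic (and, on some designs, a decoded-instruction cache) to find several instruction boundaries per cycle.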

A57 front-end decode (note the lack of a uop cache)

While it might sound like the x86 ISA is just clearly at a disadvantage here, it’s important to avoid oversimplifying the problem. Although the decoder of an ARM CPU already knows how many bytes it needs to pull in at a time, this inherently means that unless all 2 or 4 bytes of the instruction are used, each instruction contains wasted bits. While it may not seem like a big deal to “waste” a byte here and there, this can actually become a significant bottleneck in how quickly instructions can get from the L1 instruction cache to the front-end instruction decoder of the CPU. The major issue here is that due to RC delay in the metal wire interconnects of a chip, increasing the size of an instruction cache inherently increases the number of cycles that it takes for an instruction to get from the L1 cache to the instruction decoder on the CPU. If a cache doesn’t have the instruction that you need, it could take hundreds of cycles for it to arrive from main memory.
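One simple way to reason about this trade-off is the textbook average memory access time formula, AMAT = hit time + miss rate × miss penalty. In the sketch below, all of the cycle counts and miss rates are hypothetical round numbers rather than measurements of Skylake or Twister, and the single miss penalty lumps together every level below L1.

```python
def amat(hit_cycles: float, miss_rate: float, miss_penalty_cycles: float) -> float:
    """Average memory access time in cycles: hit time + miss rate * miss penalty."""
    if not 0.0 <= miss_rate <= 1.0:
        raise ValueError("miss_rate must be between 0 and 1")
    return hit_cycles + miss_rate * miss_penalty_cycles


# Hypothetical round numbers, not measurements of any real CPU.
MISS_PENALTY = 200  # cycles to fetch from further away (all lower levels lumped together)
smaller_l1i = amat(hit_cycles=4, miss_rate=0.02, miss_penalty_cycles=MISS_PENALTY)
larger_l1i = amat(hit_cycles=5, miss_rate=0.01, miss_penalty_cycles=MISS_PENALTY)
print(f"smaller L1I: {smaller_l1i:.1f} cycles, larger L1I: {larger_l1i:.1f} cycles")
# smaller L1I: 8.0 cycles, larger L1I: 7.0 cycles
```

With these made-up numbers the larger, slower cache still wins because it halves the miss rate; with a lower miss penalty (say 50 cycles) the result flips. Denser instruction encoding can be one way to lower the effective miss rate without making the cache physically larger.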

Of course, there are other issues worth considering. For example, in the case of x86, the instructions themselves can be incredibly complex. One of the simplest examples is the add instruction, which can take either its source or its destination operand from memory, although not both at once. An example of this might be addq (%rax,%rbx,2), %rdx, which could take around 5 CPU cycles to complete on something like Skylake. Of course, pipelining and other tricks can make the throughput of such instructions much higher, but that's another topic that can't be properly addressed within the scope of this article.
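To spell out what that single instruction does, here is a Python model of its semantics: compute the effective address rax + rbx × 2, read a 64-bit value from memory at that address, and add it to rdx. Memory is modeled as a dictionary of 64-bit values keyed by address and flag updates are ignored; both are simplifications for illustration, and `addq_mem_to_reg` is a hypothetical helper name.

```python
MASK64 = (1 << 64) - 1


def addq_mem_to_reg(regs: dict, mem: dict, base: str, index: str, scale: int, dst: str) -> None:
    """Model of AT&T `addq (base,index,scale), dst`: dst += 64-bit value at base + index*scale."""
    if scale not in (1, 2, 4, 8):
        raise ValueError("x86 scale factor must be 1, 2, 4 or 8")
    address = (regs[base] + regs[index] * scale) & MASK64  # 1) effective-address calculation
    value = mem[address]                                   # 2) 64-bit memory read
    regs[dst] = (regs[dst] + value) & MASK64               # 3) the addition itself
    # A real CPU would also update RFLAGS; that is omitted here.


regs = {"rax": 0x1000, "rbx": 0x10, "rdx": 5}
mem = {0x1020: 37}  # hypothetical memory contents: address -> 64-bit value
addq_mem_to_reg(regs, mem, "rax", "rbx", 2, "rdx")
print(regs["rdx"])  # 42
```

The three numbered steps hidden inside one x86 instruction are what a CPU has to sequence internally, which is part of why its latency is several cycles.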

By comparison, the ARM ISA has no direct equivalent to this instruction. Looking at our example of an add instruction, ARM would require a separate load instruction before the add instruction. This has two notable implications. The first is that this once again is an advantage for an x86 CPU in terms of instruction density, because fewer bits are needed to express the same operation. The second is that for a “pure” CISC CPU, complex instructions like this become a barrier to a number of performance and power optimizations, as any instruction dependent upon the result of the current instruction can't be pipelined or executed in parallel with it.
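The density argument can be made concrete by counting bytes. The x86-64 encoding below (REX.W prefix, opcode 03, ModRM, SIB) is 4 bytes. For AArch64 we assume the register mapping rax→x0, rbx→x1, rdx→x2 with x3 as a scratch register; because the 64-bit register-offset form of LDR only allows a shift of 0 or 3 (scale 1 or 8), the ×2 scale needs a separate address computation, giving three fixed-width instructions. This is one possible hand-written sequence, not compiler output.

```python
# x86-64: one instruction, encoded as REX.W (48), opcode 03 /r, ModRM 14, SIB 58.
X86_ADDQ_MEM = bytes.fromhex("48031458")  # addq (%rax,%rbx,2), %rdx

# AArch64, under the assumed mapping rax->x0, rbx->x1, rdx->x2, scratch x3.
A64_SEQUENCE = [
    "add x3, x0, x1, lsl #1",  # x3 = x0 + x1 * 2 (address computation)
    "ldr x3, [x3]",            # load the 64-bit operand
    "add x2, x2, x3",          # x2 += x3
]
A64_BYTES = 4 * len(A64_SEQUENCE)  # fixed 32-bit encoding per instruction

print(len(X86_ADDQ_MEM), "bytes vs", A64_BYTES, "bytes")  # 4 bytes vs 12 bytes
```

With a simpler addressing mode the AArch64 version shrinks to a load plus an add (8 bytes), which is still twice the size of this particular x86 encoding.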

The final issue here is that x86 just has an enormous number of instructions that have to be supported due to backwards compatibility. Part of the reason why x86 became so dominant in the market was that code compiled for the original Intel 8086 would work with any future x86 CPU, but the original 8086 didn’t even have memory protection. As a result, all x86 CPUs made today still have to start in real mode and support the original 16-bit registers and instructions, in addition to 32-bit and 64-bit registers and instructions. Of course, running a program in 8086 mode is a non-trivial task, but even in the x86-64 ISA it isn't unusual to see instructions that are identical to their x86-32 equivalents. By comparison, ARMv8 is designed so that switching between AArch64 and AArch32 (ARMv7-compatible) execution can only happen across exception boundaries, so in practice a program runs one type of code or the other.
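Part of that legacy shows up in how the register file is addressed. The sketch below models the RAX register family in 64-bit mode: 8-bit and 16-bit writes (inherited from the 8086) leave the upper bits untouched, while 32-bit writes zero-extend into the full 64-bit register. The `RaxFamily` class is a hypothetical illustration that ignores AH, the other registers, and flags.

```python
MASK64 = (1 << 64) - 1


class RaxFamily:
    """Hypothetical model of writes to AL/AX/EAX/RAX in x86-64 (64-bit) mode."""

    def __init__(self, value: int = 0) -> None:
        self.rax = value & MASK64

    def write_al(self, v: int) -> None:
        # 8-bit write (8086 heritage): bits 63-8 are preserved.
        self.rax = (self.rax & ~0xFF) | (v & 0xFF)

    def write_ax(self, v: int) -> None:
        # 16-bit write (8086 heritage): bits 63-16 are preserved.
        self.rax = (self.rax & ~0xFFFF) | (v & 0xFFFF)

    def write_eax(self, v: int) -> None:
        # 32-bit write: the result is zero-extended, clearing bits 63-32.
        self.rax = v & 0xFFFFFFFF

    def write_rax(self, v: int) -> None:
        # Full 64-bit write.
        self.rax = v & MASK64


r = RaxFamily(0x1122334455667788)
r.write_ax(0xBEEF)
print(hex(r.rax))  # 0x112233445566beef
r.write_eax(0xBEEF)
print(hex(r.rax))  # 0xbeef
```

The asymmetry between the 16-bit and 32-bit cases is exactly the kind of detail a modern x86 core must still honor for compatibility.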

From the 1980s into the 1990s, this was one of the major reasons why RISC was rapidly becoming dominant, as CISC ISAs like x86 tended to produce CPUs that used more power and die area for the same performance. However, today the ISA is basically irrelevant to the discussion due to a number of factors. The first is that beginning with the Intel Pentium Pro and AMD K5, x86 CPUs have really been RISC-like cores with microcode or other logic that translates x86 instructions into internal RISC-like operations. The second is that instruction decoding has been increasingly optimized around the relatively small set of instructions that compilers commonly use, which makes the x86 ISA practically less complex than the standard might suggest. The final change is that ARM and other RISC ISAs have grown increasingly complex as well, as it became necessary to add instructions for floating point math, SIMD operations, CPU virtualization, and cryptography. As a result, the RISC/CISC distinction is mostly irrelevant when it comes to discussions of power efficiency and performance, as microarchitecture is really the main factor at play now.
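The translation step described here can be illustrated with a toy decoder that cracks a memory-operand add into a load followed by a register-only add, essentially the same pair of operations a load/store ISA spells out explicitly. The micro-op names, the `Uop` type and the `tmp0` temporary are hypothetical; actual micro-op formats are internal to each vendor's design and differ between generations.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Uop:
    op: str      # hypothetical internal operation name
    dst: str     # destination (architectural or temporary register)
    srcs: tuple  # source operands


def crack_add_mem_to_reg(dst: str, base: str, index: str, scale: int) -> list[Uop]:
    """Split `add dst, [base + index*scale]` into a load and a register-only add."""
    tmp = "tmp0"  # hypothetical internal temporary register
    return [
        Uop("load", tmp, (base, index, scale)),  # memory access, like a RISC load
        Uop("add", dst, (dst, tmp)),             # register-register arithmetic
    ]


for uop in crack_add_mem_to_reg("rdx", "rax", "rbx", 2):
    print(uop)
```

Once cracked this way, the load can be scheduled independently of the add, which recovers much of the pipelining freedom that a "pure" CISC execution of the same instruction would lose.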

408 Comments


glenn.tx - Saturday, January 23, 2016

I meant CAN'T be ignored :0

MaxIT - Saturday, February 13, 2016

There is absolutely no way I could (or would) use a Surface Pro as my primary laptop (or even tablet)...

digiguy - Saturday, January 23, 2016

I don't think anyone is saying that ipad pro is the only game in town. At least not me. As you can see I am a "multi-device" guy. I don't believe in one device for everything. And all my devices are synced via dropbox + onedrive + sugarsync.

I have several activities and a lot of what I do is done via a computer. No surface pro/book or other clone could replace my main device, the one I spend most time on and from which I am writing, a 17 inch quad core i7 with 16GB of RAM, 2 SSDs, 1 HDD, lots of ports etc. I am a heavy multi-tasker and 8GB or a dual core are sometimes not enough.

But my quad core stays at home and I also work on the go (teach at university, among other things) and travel, so I have my SP3 for when I work on the go and my 14 inch ultrabook for travelling (better on my lap and on a plane).

So each device has a role and I would never dream of replacing any laptop with the ipad pro... I think very few people buy the ipad pro with this objective. For what I use it for ipad pro beats SP3 on a lot of things (size, sound, weight, touch optimization) and that’s why I bought it.

Constructor - Saturday, January 23, 2016

Yeah, when backward desktop compatibility is the primary thing you need.

The whole point of contention here is that some people overgeneralize their own needs to apply to absolutely every professional user, which is simply not the case.

An iPad (Pro or otherwise) can very well be a professional tool, especially when flexibility of use, portability and ease of use are critical, but of course there will still be cases where other computers will be better suited. Just not exclusively any more by a long shot.

Murloc - Sunday, January 24, 2016

You won't find that kind of people (pure office workers who only need the office ecosystem and sync everything to the cloud and have no need for other software or usb ports) commenting on this review.

glenn.tx - Saturday, January 23, 2016

"I didn’t really notice that it had gotten significantly harder to handle in the hands than an iPad Air 2"... Are you kidding me? Find me someone else that agrees with this opinion. Every other review of the Pro notes that it's a bit unwieldy. I wish I could find another quality technical source for reviews other than Anandtech because the bias for Apple is gushing.

KPOM - Saturday, January 23, 2016

Most sites have said that the weight balance is good.

Constructor - Saturday, January 23, 2016

Most people who have handled mine have remarked how light it is relative to what they expected at its size, and that reflects my own impression as well.

Of course it's bigger and heavier than an iPad Air 2, but "harder to handle" is something I can't really get together with the actual device. It's just A4-sized like any ordinary paper magazine. If you can't cope with that, you must have major problems anyway.

digiguy - Saturday, January 23, 2016

I would say that you can hold the Air from one side for as long as you like, while the ipad pro you'll probably want to hold like a pizza if it's for more than a few seconds; but held like that, you can hold it for a long time without feeling tired. That's not a big issue IMO.

Constructor - Saturday, January 23, 2016

Even prolonged sessions playing Real Racing for several hours (where it's held free without support with both hands because it also acts as the steering wheel) have not been a major problem yet.

Yes, holding it with one hand at one edge is not ideal, but it's just like a stiff A4 note pad in general. Not a big problem.

One great feature is that the microfiber back side of the Smart Cover sticks very firmly to any kind of fabric, so it's perfectly comfortable to cross my legs and put the iPad on my thigh, without touching it with my hands at all. It just stays there very securely (even on a train) as if glued in place.

Actually much more comfortable than any book or magazine I've ever read while sitting down (which you always have to keep from slipping away and from trying to close by itself).

It is also very useful when typing on my lap with the onscreen keyboard, where it also prevents the iPad from slipping all over the place.

I don't know if that was explicitly a design goal, but it's definitely a great advantage and one of the reasons why I can very much recommend the regular Smart Cover. (The one for my previous iPad 3 had survived four years of regular use with minimal wear already.)