Qualcomm Snapdragon S4 (Krait) Performance Preview - 1.5 GHz MSM8960 MDP and Adreno 225 Benchmarks

by Brian Klug & Anand Lal Shimpi on February 21, 2012 3:01 AM EST

Posted in: Smartphones, Snapdragon, Qualcomm, Adreno, Krait, Mobile

The MSM8960 is an unusual member of the Krait family in that it doesn't use an Adreno 3xx GPU. In order to get the SoC out quickly, Qualcomm paired the two Krait cores in the 8960 with a tried and true GPU design: the Adreno 225. Adreno 225 itself hasn't been used in any prior Qualcomm SoC, but it is very closely related to the Adreno 220 used in the Snapdragon S3 that we've seen in a number of recent handsets.

Compared to Adreno 220, 225 primarily adds support for Direct3D 9_3 (which includes features like multiple render targets). The addition costs around 5% in die area and took several months of work on Qualcomm's part.

From a compute standpoint, however, Adreno 225 looks identical to Adreno 220. The big difference is clock speed: thanks to the 8960's 28nm manufacturing process, Adreno 225 can now run at up to 400MHz, compared to 266MHz in Adreno 220 designs. A 50% increase in GPU clock frequency, combined with a doubling of memory bandwidth compared to Snapdragon S3, gives the Adreno 225 a sizable advantage over its predecessor.

| Mobile SoC GPU Comparison | Adreno 225 | PowerVR SGX 540 | PowerVR SGX 543 | PowerVR SGX 543MP2 | Mali-400 MP4 | GeForce ULP | Kal-El GeForce |
|---|---|---|---|---|---|---|---|
| SIMD Name | - | USSE | USSE2 | USSE2 | Core | Core | Core |
| # of SIMDs | 8 | 4 | 4 | 8 | 4 + 1 | 8 | 12 |
| MADs per SIMD | 4 | 2 | 4 | 4 | 4 / 2 | 1 | 1 |
| Total MADs | 32 | 8 | 16 | 32 | 18 | 8 | 12 |
| GFLOPS @ 200MHz | 12.8 GFLOPS | 3.2 GFLOPS | 6.4 GFLOPS | 12.8 GFLOPS | 7.2 GFLOPS | 3.2 GFLOPS | 4.8 GFLOPS |
| GFLOPS @ 300MHz | 19.2 GFLOPS | 4.8 GFLOPS | 9.6 GFLOPS | 19.2 GFLOPS | 10.8 GFLOPS | 4.8 GFLOPS | 7.2 GFLOPS |
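The GFLOPS rows in the table follow from the MAD counts if each MAD is counted as two floating point operations (one multiply, one add) per clock. The short Python sketch below reproduces that arithmetic. The factor of two is inferred from the table's own numbers, and the function and variable names are illustrative only. Plugging in the Adreno 225's 400MHz peak clock is a theoretical extrapolation using the same formula, not a measured result.

```python
# Peak GFLOPS = total MADs x 2 FLOPs per MAD x clock in GHz.
# MAD counts come from the comparison table; the 2 FLOPs/MAD factor is an
# assumption inferred from the table's GFLOPS rows.

FLOPS_PER_MAD = 2  # one multiply + one add per clock

TOTAL_MADS = {
    "Adreno 225": 32,
    "PowerVR SGX 540": 8,
    "PowerVR SGX 543": 16,
    "PowerVR SGX 543MP2": 32,
    "Mali-400 MP4": 18,  # 4 SIMDs x 4 MADs + 1 SIMD x 2 MADs
    "GeForce ULP": 8,
    "Kal-El GeForce": 12,
}


def peak_gflops(total_mads, clock_mhz):
    """Theoretical peak throughput in GFLOPS at the given clock."""
    return total_mads * FLOPS_PER_MAD * clock_mhz / 1000.0


if __name__ == "__main__":
    for name, mads in TOTAL_MADS.items():
        at_200 = peak_gflops(mads, 200)
        at_300 = peak_gflops(mads, 300)
        print(f"{name:20s} {at_200:5.1f} @ 200MHz  {at_300:5.1f} @ 300MHz")

    # Same formula at the Adreno 225's 400MHz peak clock (theoretical only).
    print(f"Adreno 225 @ 400MHz: {peak_gflops(32, 400):.1f} GFLOPS")
```

Normalizing every GPU to the same clock, as the table does, isolates the width of the shader array from frequency. Shipping parts run at different clocks, so these figures are a ceiling and not a prediction of benchmark results.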

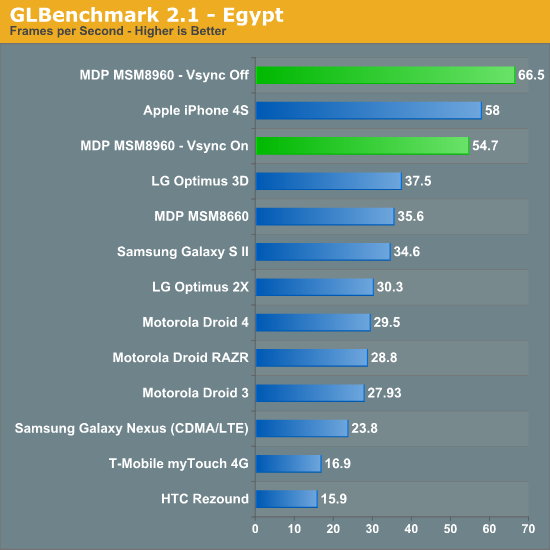

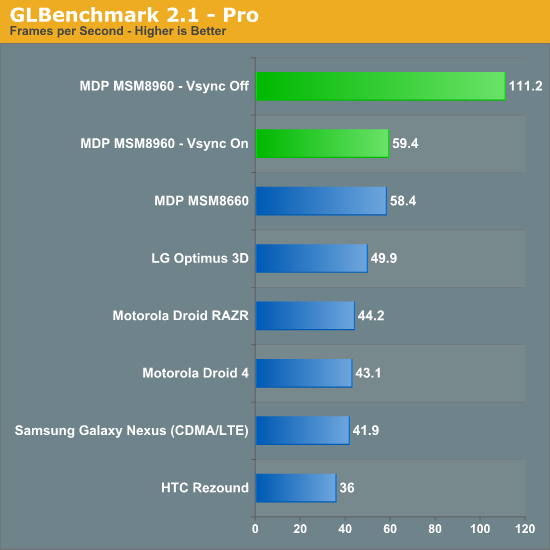

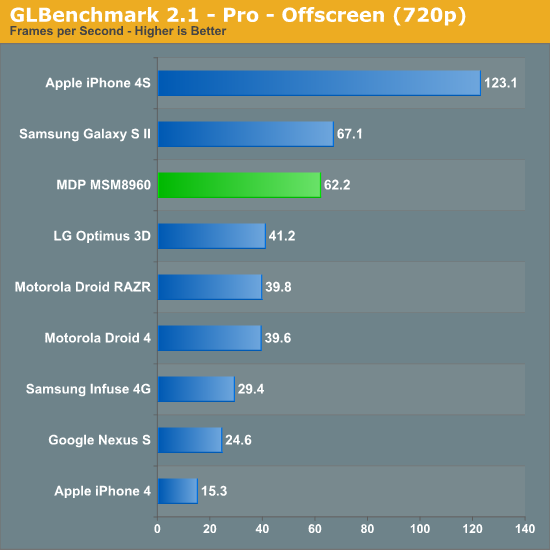

We turn to GLBenchmark and Basemark ES 2.0 V1 to measure the Adreno 225's performance:

Limited by vsync, the Adreno 225 can actually deliver similar performance to the PowerVR SGX 543MP2 in Apple's A5. However, if we drive up the resolution, avoid vsync entirely and look at 720p results, the Adreno 225 falls short. Its performance is measurably better than anything else available on the Android side in the Egypt benchmark; however, the older Pro test still shows the SGS2's Mali-400 implementation as quicker. The eventual move to Adreno 3xx GPUs will likely help address this gap.

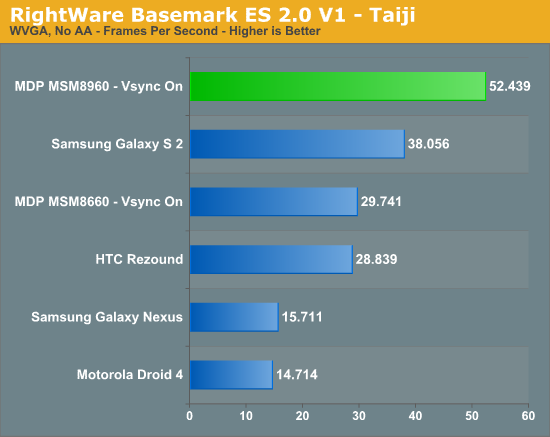

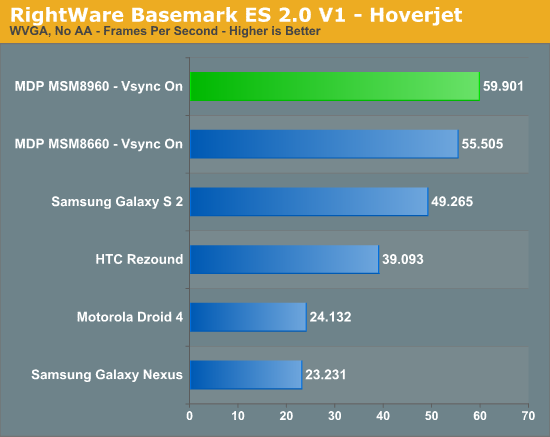

Basemark ES 2.0 tells a similar story (updated: notes below):

In the original version of this article we noticed some odd behavior on the part of the Mali-400 MP4 based Samsung Galaxy S 2. Initially we thought the ARM based GPU was simply faster than the Adreno 225 implementation in the MSM8960; however, it turns out there was another factor at play. The original version of Basemark ES 2.0 V1 had anti-aliasing enabled and requested 4X MSAA from all devices that ran it. Some GPUs will run the test with AA disabled for various reasons (e.g. some don't technically support 4X MSAA), while others (Adreno family included) will run with it enabled. This resulted in the Adreno GPUs being unfairly penalized. We've since re-run all of the numbers with AA disabled and at WVGA (to avoid hitting vsync on many of the devices).

Basemark clearly favors Qualcomm's Adreno architecture; whether or not that's representative of real world workloads is another discussion entirely.

The results above are at 800 x 480. We're unable to force display resolution on the iOS version of Basemark so we've got a native resolution comparison below:

| RightWare Basemark ES 2.0 V1 Comparison (Native Resolution) | Taiji | Hoverjet |
|---|---|---|
| Apple iPhone 4S (960 x 640) | 16.623 fps | 30.178 fps |
| Qualcomm MDP MSM8960 (1024 x 600) | 40.576 fps | 59.586 fps |

Even at a native resolution with the same total pixel count as the MDP's, Apple's iPhone 4S is unable to outperform the MSM8960 based MDP here. It's unclear why there's such a drastic reversal in standing between the Adreno 225 and PowerVR SGX 543MP2 compared to the GLBenchmark results. Needless to say, 3D performance can easily vary depending on the workload. We're still in dire need of good 3D game benchmarks on Android. Here's hoping that some cross platform iOS/Android game developers using Epic's UDK will expose frame rate counters/benchmarking tools in their games.
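A quick way to sanity-check a native resolution comparison is to normalize frame rate by the number of pixels each device has to draw. The Python sketch below does that with the Basemark figures from the table; the helper names are illustrative, and rendered pixels per second is only a rough proxy that ignores shader complexity, overdraw and driver differences.

```python
# Native-resolution Basemark ES 2.0 V1 results from the table:
# (width, height, Taiji fps, Hoverjet fps)
RESULTS = {
    "Apple iPhone 4S": (960, 640, 16.623, 30.178),
    "Qualcomm MDP MSM8960": (1024, 600, 40.576, 59.586),
}


def pixels_per_frame(width, height):
    """Number of pixels rendered for one full frame."""
    return width * height


def megapixels_per_second(width, height, fps):
    """Rough rendered-pixel throughput in millions of pixels per second."""
    return pixels_per_frame(width, height) * fps / 1e6


def speedup(test_index):
    """MDP frame rate divided by iPhone 4S frame rate (0 = Taiji, 1 = Hoverjet)."""
    mdp = RESULTS["Qualcomm MDP MSM8960"][2 + test_index]
    iphone = RESULTS["Apple iPhone 4S"][2 + test_index]
    return mdp / iphone


if __name__ == "__main__":
    for device, (w, h, taiji, hoverjet) in RESULTS.items():
        print(
            f"{device}: {pixels_per_frame(w, h)} px/frame, "
            f"Taiji {megapixels_per_second(w, h, taiji):.1f} MP/s, "
            f"Hoverjet {megapixels_per_second(w, h, hoverjet):.1f} MP/s"
        )
    print(f"Taiji speedup: {speedup(0):.2f}x, Hoverjet speedup: {speedup(1):.2f}x")
```

Both panels come out to 614,400 pixels per frame, so in this particular comparison the raw frame rate ratio and the pixel throughput ratio are the same number.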

86 Comments

sciwizam - Tuesday, February 21, 2012

Any thoughts on how Krait will compare against the A15 chips and when's the earliest will those be on market?

wapz - Tuesday, February 21, 2012

Look in the architecture article on page 1 here: http://www.anandtech.com/show/4940/qualcomm-new-sn...

"ARM hasn't published DMIPS/MHz numbers for the Cortex A15, although rumors place its performance around 3.5 DMIPS/MHz."

Krait has 3.3 DMIPS/MHz, so if a dual Cortex A15 would run at the same frequency they would be fairly comparable I would imagine (obviously ignoring all other elements that could help performance on either of them).

wapz - Tuesday, February 21, 2012

And if that's the case, HTC will have an interesting problem with their new lineup. It would mean, if the rumours are correct, their new flagship One X model using Tegra3 AP33 chipset at 1,5GHz and a 4,7 inch 720p screen might be slower compared to the One S, sporting the Snapdragon S4 chipset and a 4,3 inch screen with qHD.

Lucian Armasu - Tuesday, February 21, 2012

In that case even if the GPU's were equal in performance, the One S would be faster due to the fact that it uses a lower resolution.

zorxd - Tuesday, February 21, 2012

If by "faster", you mean more FPS in a 3D game, then yes. The resulting image quality would be lower however.

metafor - Tuesday, February 21, 2012

Yes. FPS is only one factor in the overall equation of user experience. Higher resolution rendering is definitely preferable assuming one could maintain ~60fps.

zorxd - Tuesday, February 21, 2012

I'd rather have a 34 fps 1280x720 than a 60 fps 960x540.

sosrandom - Tuesday, February 21, 2012

or better graphics at 30fps @ 960x540 than 30fps @ 1280x720

trob6969 - Wednesday, February 22, 2012

I agree, i would take quality over speed any day as long as the difference in speed is measured in mere seconds.

vol7ron - Thursday, March 1, 2012

I can't wait to see the power savings, especially since the modem is a huge power draw, and one of the benefits is that QC is the manufacturer, which means integrated chip and less power consumption (as well as thinner device).