The AMD Vega GPU Architecture Teaser: Higher IPC, Tiling, & More, Coming in H1’2017

by Ryan Smith on January 5, 2017 9:00 AM EST

HBM2 & “The World’s Most Scalable GPU Memory Architecture”

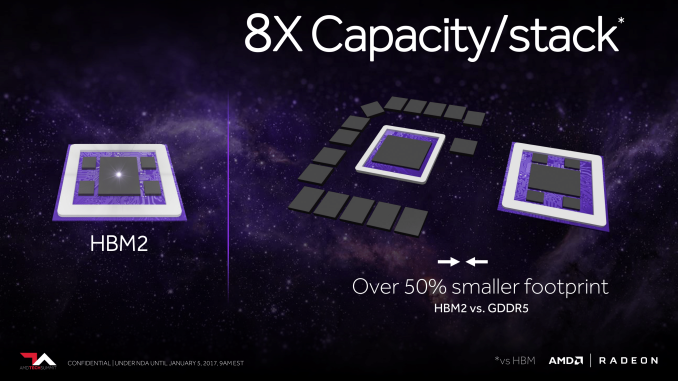

With the launch of the Fiji GPU and resulting Radeon R9 Fury products in 2015, AMD became the first GPU manufacturer to ship with first-generation High Bandwidth Memory (HBM). The ultra-wide memory standard essentially turned the usual rules of GPU memory on their head, replacing narrow, high-clocked memory (GDDR5) with wide, low-clocked memory. By taking advantage of Through Silicon Vias (TSVs) and silicon interposers, HBM could offer far more bandwidth than GDDR5 while consuming less power and taking up less space.

Now for Vega, AMD is back again with support for the next generation of HBM technology, HBM2. In fact this is the very first thing we ever learned about Vega, going back to AMD’s roadmap from last year where it was the sole detail listed for the architecture.

HBM2 builds off of HBM, offering welcome improvements in both bandwidth and capacity. In terms of bandwidth, HBM2 can clock at up to 2Gbps per pin, twice the rate of HBM1. This means that at those clockspeeds (and I’ll note that at least so far we haven’t seen any 2Gbps HBM2), AMD can either double their memory bandwidth or cut the number of HBM stacks they need in half to get the same amount of bandwidth. The latter point is of particular interest, as we’ll get to here in a bit.
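To put rough numbers on that trade-off, here is a minimal back-of-the-envelope sketch. It assumes the 1024-bit per-stack interface defined by the HBM standards and Fiji's four-stack layout; the function and variable names are made up for illustration, and the HBM2 figures are theoretical peaks at the full 2Gbps rate, not anything AMD has announced.

```python
# Peak bandwidth of an HBM configuration (illustrative arithmetic only).
HBM_BUS_WIDTH_BITS = 1024  # interface width per stack in the HBM/HBM2 standards


def peak_bandwidth_gbs(stacks: int, gbps_per_pin: float) -> float:
    """Return peak bandwidth in GB/s for `stacks` stacks at a per-pin data rate."""
    # stacks * pins per stack * Gbit/s per pin, divided by 8 bits per byte
    return stacks * HBM_BUS_WIDTH_BITS * gbps_per_pin / 8


fiji_hbm1 = peak_bandwidth_gbs(stacks=4, gbps_per_pin=1.0)        # four HBM1 stacks
two_stack_hbm2 = peak_bandwidth_gbs(stacks=2, gbps_per_pin=2.0)   # half the stacks
four_stack_hbm2 = peak_bandwidth_gbs(stacks=4, gbps_per_pin=2.0)  # same stack count

print(fiji_hbm1, two_stack_hbm2, four_stack_hbm2)
```

At the full 2Gbps rate, two HBM2 stacks match Fiji's four HBM1 stacks, while four stacks would double it.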

But more important still are the capacity increases. HBM1 stacks topped out at 1GB each, which means Fiji could have no more than 4GB of VRAM. HBM2 stacks go much higher – up to 8GB per stack – which means AMD’s memory capacity problems when using HBM have for all practical purposes gone away. AMD could in time offer 8GB, 16GB, or even 32GB of HBM2 memory, which is more than competitive with current GDDR5 memory types.

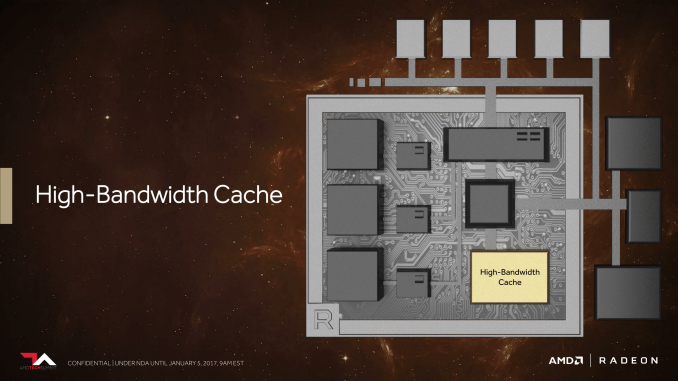

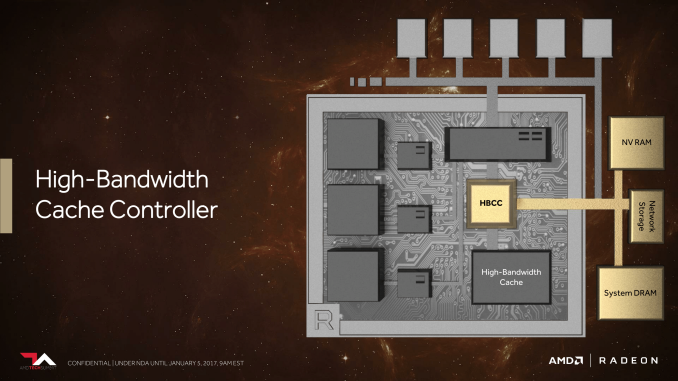

Meanwhile it’s very interesting to note that with Vega, AMD is calling their on-package HBM stacks “high-bandwidth cache” rather than “VRAM” or similar terms as was the case with Fiji products.

This is a term that can easily be misread – and it’s the one area where perhaps it’s too much of a tease – but despite the name, there are no signals from AMD right now that it’s going to be used as a cache in the pure, traditional sense. Rather, because AMD has already announced that they’re looking into other ideas such as on-card NAND storage (the Radeon Pro SSG), they are looking at memory more broadly.

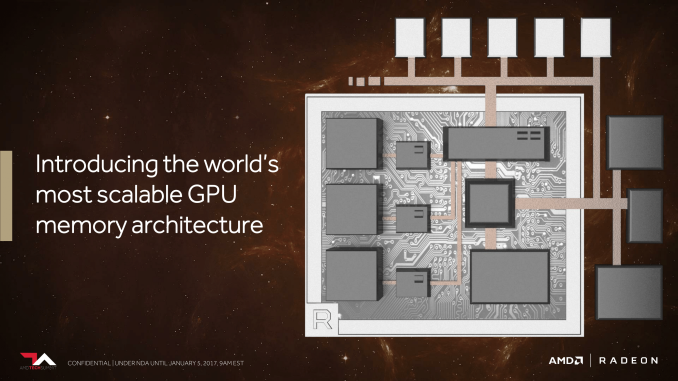

And this brings us to what AMD is calling “The World’s Most Scalable GPU Memory Architecture”. Along with supporting HBM, AMD has undertaken a lot of under-the-hood work to better support large dataset management between the high bandwidth cache (HBM2), on-card NAND, and even farther out sources like system RAM and network storage.

The basic idea here is that, especially in the professional space, data set size is vastly larger than local storage. So there needs to be a sensible system in place to move that data across various tiers of storage. This may sound like a simple concept, but in fact GPUs do a pretty bad job altogether of handling situations in which a memory request has to go off-package. AMD wants to do a better job here, both in deciding what data actually needs to be on-package and in breaking up those requests so that “data management” isn’t just moving around a few very large chunks of data. The latter makes for an especially interesting point, as it could potentially lead to a far more CPU-like process for managing memory, with a focus on pages instead of datasets.
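As a rough illustration of what "pages instead of datasets" could mean, the sketch below models a small, fast local memory that pulls in fixed-size pages on demand and evicts the least recently used one when full. This is a generic LRU paging model and not AMD's actual mechanism, which has not been disclosed; the page size, the class name `PagedCache`, and the access pattern are all hypothetical.

```python
from collections import OrderedDict

PAGE_SIZE = 4096  # bytes; hypothetical page size chosen for illustration


class PagedCache:
    """Toy LRU model of a fast local memory backed by a larger, slower tier."""

    def __init__(self, capacity_pages: int):
        self.capacity_pages = capacity_pages
        self.resident = OrderedDict()  # page number -> True, oldest entry first
        self.faults = 0

    def access(self, address: int) -> bool:
        """Touch one byte address; return True on a hit, False on a page fault."""
        page = address // PAGE_SIZE
        if page in self.resident:
            self.resident.move_to_end(page)  # mark as most recently used
            return True
        self.faults += 1
        if len(self.resident) >= self.capacity_pages:
            self.resident.popitem(last=False)  # evict the least recently used page
        self.resident[page] = True
        return False

    @property
    def bytes_moved(self) -> int:
        """Bytes fetched from the slower tier so far."""
        return self.faults * PAGE_SIZE


cache = PagedCache(capacity_pages=4)
# A workload that touches only three distinct pages of a much larger dataset.
for address in [0, 100, 5000, 9000, 50, 5100]:
    cache.access(address)

print(cache.faults, cache.bytes_moved)
```

Only the pages that are actually touched cross the slow link, rather than the whole dataset they belong to.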

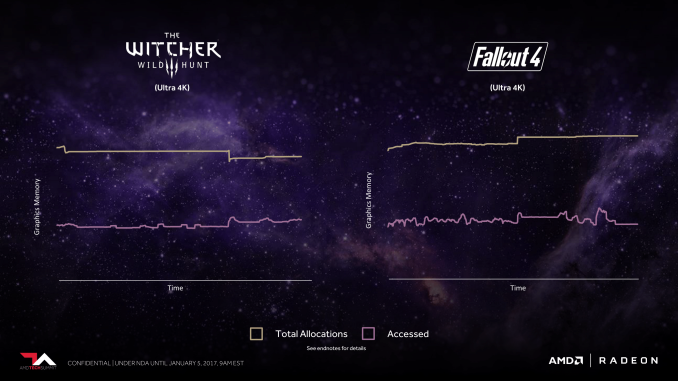

Interestingly, to drive this point home, AMD actually turned to games rather than professional applications. Plotting out the memory allocation and usage patterns of The Witcher III and Fallout 4, AMD finds that both games allocate far more memory than they actually use, by nearly a factor of two. Part of this is undoubtedly due to the memory management model of the DirectX 11 API used by both games, but a large factor is also simply that this is what games have traditionally done. Memory stalls are expensive and games tend to be monolithic use cases, so why not allocate everything you can, just to be sure you don’t run out?

The end result here is that AMD is painting a very different picture for how they want to handle memory allocations and caching on Vega and beyond. In the short term it’s professional workloads that stand to gain the most, but in the long run this is something that could impact games as well. And not to be ignored is virtualization; AMD’s foray into GPU virtualization is still in its early days, but this will likely have a big impact on virtualization as well. In fact I imagine it’s a big reason why AMD is giving Vega the ability to support a relatively absurd 512TB of virtual address space, many times the size of local VRAM. Multi-user time-sharing workloads are a prime example of where large address spaces can be useful.
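For a sense of scale, the arithmetic below works out what 512TB implies. It assumes the figure is binary (512 × 2^40 bytes) and compares it against a hypothetical 16GB HBM2 configuration, one of the capacities mentioned earlier; neither assumption comes from AMD.

```python
TIB = 2 ** 40
GIB = 2 ** 30

virtual_space_bytes = 512 * TIB  # assuming "512TB" means 512 * 2^40 bytes
local_memory_bytes = 16 * GIB    # hypothetical 16GB of on-package HBM2

# Number of address bits needed to cover every byte in the virtual space.
address_bits = (virtual_space_bytes - 1).bit_length()

# How many times larger the virtual space is than local memory.
ratio = virtual_space_bytes // local_memory_bytes

print(address_bits, ratio)
```

Under those assumptions the space corresponds to 49-bit addressing and is 32,768 times larger than the local memory.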

ROPs & Rasterizers: Binning for the Win(ning)

We’ll round out our overview of AMD’s Vega teaser with a look at the front and back-ends of the GPU architecture. While AMD has clearly put quite a bit of effort into the shader core, shader engines, and memory, they have not ignored the rasterizers at the front-end or the ROPs at the back-end. In fact this could be one of the most important changes to the architecture from an efficiency standpoint.

Back in August, our pal David Kanter discovered one of the important ingredients of the secret sauce that is NVIDIA’s efficiency optimizations. As it turns out, NVIDIA has been doing tile-based rasterization and binning since Maxwell, and this was likely one of the big reasons Maxwell’s efficiency increased by so much. Though NVIDIA still refuses to comment on the matter, from what we can ascertain, breaking up a scene into tiles has allowed NVIDIA to keep a lot more traffic on-chip, which saves memory bandwidth and cuts down on very expensive accesses to VRAM.

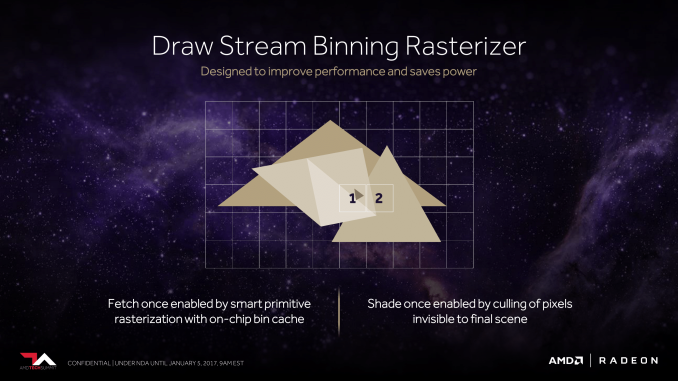

For Vega, AMD will be doing something similar. The architecture will add support for what AMD calls the Draw Stream Binning Rasterizer, which, true to its name, will give Vega the ability to bin polygons by tile. By doing so, AMD will cut down on the number of memory accesses by working with smaller tiles that can stay on-chip. This will also allow AMD to do a better job of culling hidden pixels, keeping them from making it to the pixel shaders and consuming resources there.
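Since AMD hasn't detailed how its binning works, the following is only a generic sketch of the idea of binning polygons by tile: each triangle is assigned to every screen tile that its bounding box overlaps, so a tile can later be processed with just its own triangle list. The tile size, the function name `bin_triangles`, and the sample triangles are all hypothetical, and a bounding-box test is conservative (it can list a triangle in a tile it doesn't actually cover).

```python
from collections import defaultdict

TILE_SIZE = 32  # pixels per tile edge; a hypothetical value for illustration


def bin_triangles(triangles, width, height, tile_size=TILE_SIZE):
    """Map each tile (tx, ty) to the indices of triangles whose bounding box overlaps it."""
    bins = defaultdict(list)
    max_tx = (width - 1) // tile_size
    max_ty = (height - 1) // tile_size
    for index, triangle in enumerate(triangles):
        xs = [x for x, _ in triangle]
        ys = [y for _, y in triangle]
        # Skip triangles whose bounding box lies entirely off-screen.
        if max(xs) < 0 or max(ys) < 0 or min(xs) >= width or min(ys) >= height:
            continue
        # Clamp the bounding box to the range of valid tile coordinates.
        tx0 = max(min(xs) // tile_size, 0)
        tx1 = min(max(xs) // tile_size, max_tx)
        ty0 = max(min(ys) // tile_size, 0)
        ty1 = min(max(ys) // tile_size, max_ty)
        for ty in range(ty0, ty1 + 1):
            for tx in range(tx0, tx1 + 1):
                bins[(tx, ty)].append(index)
    return bins


# Integer pixel coordinates on a 128x64 screen (four by two tiles).
triangles = [
    [(2, 2), (20, 5), (10, 25)],         # fits inside tile (0, 0)
    [(30, 10), (70, 10), (50, 40)],      # spans three columns and two rows of tiles
    [(200, 10), (220, 10), (210, 30)],   # entirely off-screen, never binned
]
bins = bin_triangles(triangles, width=128, height=64)

for tile in sorted(bins):
    print(tile, bins[tile])
```

Working through one tile's list at a time is what lets the intermediate color and depth data for that tile stay in on-chip storage instead of bouncing out to VRAM.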

As we have almost no detail on how AMD or NVIDIA are doing tiling and binning, it’s impossible to say with any degree of certainty just how close their implementations are, so I’ll refrain from any speculation on which might be better. But I’m not going to be too surprised if in the future we find out both implementations are quite similar. The important thing to take away from this right now is that AMD is following a very similar path to where we think NVIDIA captured some of their greatest efficiency gains on Maxwell, and that in turn bodes well for Vega.

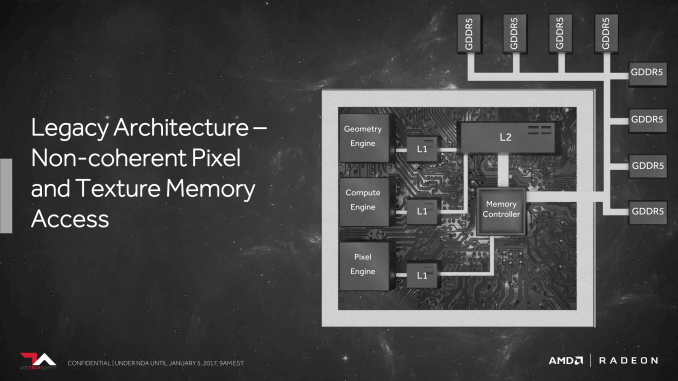

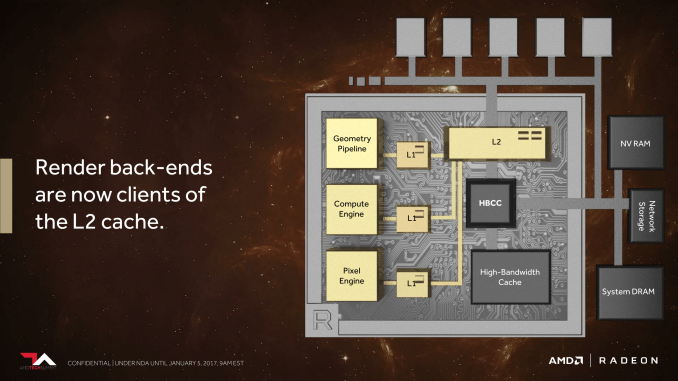

Meanwhile, on the ROP side of matters, besides baking in the necessary support for the aforementioned binning technology, AMD is also making one other change to cut down on the amount of data that has to go off-chip to VRAM. AMD has significantly reworked how the ROPs (or as they like to call them, the Render Back-Ends) interact with their L2 cache. Starting with Vega, the ROPs are now clients of the L2 cache rather than the memory controller, allowing them to better and more directly use the relatively spacious L2 cache.

This is especially significant for a specific graphics task, which is rendering to a texture (as opposed to a frame buffer to be immediately displayed). Render to texture is an especially common operation for deferred shading, and while deferred shading itself isn’t new, its usage is increasing. With this change to Vega, the ROPs can now send a scene rendered to a texture to the L2 cache, which can in turn be fetched by the texture units for reuse in the next stage of the rendering process. Any potential performance improvements from this change are going to be especially game-specific since not every game uses deferred shading, but it’s one of those corner cases that is important for AMD to address in order to ensure more consistent performance.

155 Comments

silverblue - Thursday, January 5, 2017 - link

Formerly Videologic, changed in 1999 to Imagination Technologies.

I had a Kyro II. The first Kyro was clocked at a mere 115MHz for both core and memory, but Kyro II was a whole 60MHz faster. It really worked well in UT when paired with my XP 1700+. :) There was a Kyro II SE with 200MHz clocks but was as rare as rocking horse excrement. I was also very excited about its supposed TnL, DDR-powered successor, but it never launched, which meant bye-bye on the desktop for PowerVR, rather than the bloody nose that ATi and NVIDIA deserved at that time.

wumpus - Friday, January 6, 2017 - link

If my poor memory is remotely accurate (don't bet on it), the chip to run Unreal Tournament was the S3 chip (probably the last they made). Not sure if the "TnL" it supposedly had was fraudulent or defective, but the texture compression (probably only used in Unreal Tournament before S3 died and it became a standard feature) gave the S3 the best visuals in the game.

I know I had one of those boards (simply because it was cheap, long after its day) but can't remember how it performed on Unreal Tournament (I may not have bothered putting windows on that box. In those days the best way to connect windows to the internet was to connect a Linux machine to a DSL modem, then connect windows to Linux. Connecting Windows to DSL was asking to get it to blindly reset all your configuration files at random intervals).

BrokenCrayons - Friday, January 6, 2017 - link

You're probably thinking about S3's Savage video card series with the S3 MeTaL API. It's been ages, but I'm pretty sure UT supported MeTaL out of the box. Epic was pretty good about that back when there was a plethora of competing APIs before we settled into DirectX and OpenGL. When I tried to diversify my PC shop by adding an after-hours LAN arcade, we used those graphics cards because they were good for the multiplayer stuff we wanted to run. I do recall they were a bit glitchy under Descent Freespace. There were artifacts around ship engine glow effects and I think it was due to poor DirectX support, but don't quote me on that, it's been years.

silverblue - Friday, January 6, 2017 - link

Yeah, MeTaL was there at retail, as long as you installed the texture pack from the second disc. I had a Savage 4 (Diamond S540) which worked flawlessly... until I flashed the BIOS one day, thereafter it would hang during every session at a random point, thus requiring a reboot. That was a stupid idea, especially considering it looked excellent. Luckily, somebody got the OpenGL mode to work with those textures.

I believe the T&L on the Savage 2000 was unfit for purpose; it was broken in hardware so you couldn't coax its true performance out.

silverblue - Friday, January 6, 2017 - link

Sorry, to clarify, you could use MeTaL without the texture pack, but what would be the point? :)

Threska - Friday, January 6, 2017 - link

And for the time it was a very nice card. Shame Kyro didn't take off.

https://en.wikipedia.org/wiki/PowerVR

eldakka - Friday, January 6, 2017 - link

I always liked the infinite planes concept (no polygons!) of the PowerVR 1 series. I remember being disappointed at the time, and still am, that infinite planes never caught on. The entire paradigm gave many operations 'for free' that today require a lot of hardware and software support to implement.

Threska - Saturday, January 7, 2017 - link

Could have been a patent issue.

tarqsharq - Friday, January 6, 2017 - link

I remember excitedly reading about TBR with an article about the Kyro II on this very website oh so many years ago like you're saying. http://www.anandtech.com/show/735

Funny enough, it didn't do well in the market because the APIs at the time weren't very flexible and required a lot of tweaking to get it working in each engine pretty much.

The games that it did work well in, the card punched WAY above its cost bracket if I remember correctly.

Jtaylor1986 - Thursday, January 5, 2017 - link

This design has probably been in the works for 3+ years. Nvidia just beat them to market; it had nothing to do with David Kanter.