Arm Announces Neoverse N1 & E1 Platforms & CPUs: Enabling A Huge Jump In Infrastructure Performance

by Andrei Frumusanu on February 20, 2019 9:00 AM EST

Anybody following the industry over the last decade will have heard of Arm. We know the company best as the enabler behind essentially all of today’s mobile devices, providing both the architecture and the CPU designs that power them. Over the last 5-7 years in particular, we’ve seen meteoric advances in the silicon performance of the mobile SoCs found in our smartphones and tablets.

However, Arm's ambitions go well beyond mobile and embedded devices. The market for compute in general is much larger than that, and in business terms, high-end devices like servers and related infrastructure carry far greater profit margins. So for a successful CPU designer like Arm that is still on the rise, it's a very lucrative market to aim for, as current leader Intel can attest.

To that end, while Arm has been wildly successful in mobile and embedded, anything requiring more performance has so far been out of reach or has come with significant drawbacks. Over the last decade we’ve heard numerous prophecies that products based on the architecture would take the server and infrastructure market by storm “any moment now”. In the last couple of years in particular, we’ve seen various vendors attempt to bring this goal to fruition. Unfortunately, the first generation of products was less than successful, and even though some did better than others, the Arm server ecosystem has seen quite a bit of hardship in its first years.

A New Focus On Performance

While Arm has been successful in mobile for quite some time, the overall performance of its designs has often left something to be desired. As a result, the company has undertaken a new focus on performance that spans everything from mobile to servers. Working towards this goal, 2018 was an important year for Arm, as the company introduced its brand-new Cortex A76 microarchitecture. A clean-sheet design that draws on the experience gained in previous generations, the A76 belongs to the brand-new Austin family of microarchitectures, in which the company has placed high hopes. In fact, Arm is so confident in its upcoming designs that it has publicly shared its client compute CPU roadmap through 2020 and proclaimed that it will take Intel head-on in the PC laptop space.

While we’ll have to wait a bit longer for products such as the Snapdragon 8CX to come to market, we’ve already had our hands on the first mobile devices with the Cortex A76, and have independently verified all of Arm’s performance and efficiency claims.

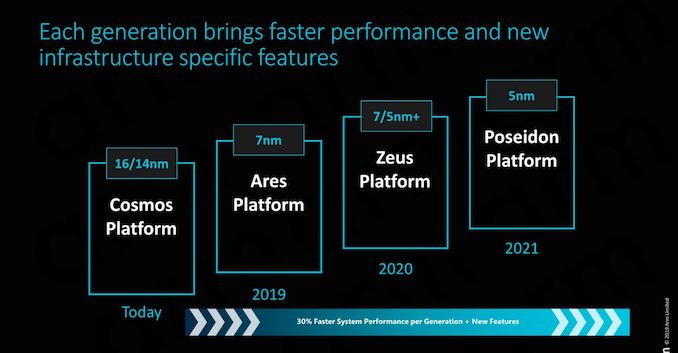

And then of course, there's Neoverse, the star of today's Arm announcements. With Neoverse, Arm is looking to do for servers and infrastructure what it's already doing for its mobile business: greatly ramping up performance and improving its competitiveness with a new generation of processor designs. We'll get into Neoverse in much more detail in a moment, but in context, it's one piece of a much larger effort for Arm.

All of these new microarchitectures are important to Arm because they represent an inflection point in the market: Performance is now nearing that of the high-end players such as Intel and AMD, and Arm is confident in its ability to sustain significant annual improvements of 25-30% - vastly exceeding the rate at which the incumbent vendors are able to iterate.
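Because such gains compound year over year, even the lower end of that range adds up quickly. The short Python sketch below simply computes the cumulative performance multiplier for a given annual rate; the 25% and 30% figures are Arm's stated target, while the three-year horizon is an illustrative assumption of ours rather than anything taken from Arm's roadmap.

```python
# A minimal sketch of how a sustained annual performance gain compounds.
# The 25% and 30% rates are Arm's stated target; the three-year horizon
# is an illustrative assumption, not a figure from Arm's roadmap.


def compounded_gain(annual_rate: float, years: int) -> float:
    """Return the cumulative performance multiplier after `years` of
    improvement at `annual_rate` per year (e.g. 0.25 for 25%)."""
    return (1.0 + annual_rate) ** years


if __name__ == "__main__":
    for rate in (0.25, 0.30):
        multiplier = compounded_gain(rate, 3)
        print(f"{rate:.0%} per year over 3 years -> {multiplier:.2f}x")
```

Run as a script, it prints roughly 1.95x for 25% and 2.20x for 30%: if Arm sustains its target, performance about doubles in three years. This is pure arithmetic and says nothing about whether Arm will actually hit those numbers.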

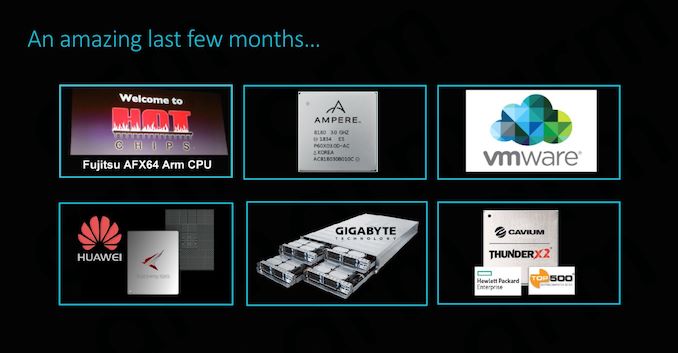

The Server Inflection Point: An Eventful Last Few Months Indeed

The last couple of months have been quite exciting for the Arm server ecosystem. At last year’s Hot Chips, we covered Fujitsu’s presentation of its brand-new A64FX HPC (high-performance computing) processor, which represents not only the company’s shift from SPARC to ARMv8, but is also the first chip to implement SVE (Scalable Vector Extension), the new addition to the Arm architecture.

Cavium’s ThunderX2 made some very impressive performance leaps, making the new processor one of the first able to compete with Intel and AMD, with partners such as GIGABYTE offering complete server system solutions based on the new SoC.

Most recently, Huawei unveiled its new Kunpeng 920 server chip, promising the industry’s highest-performing Arm server CPU.

The big commonality among the three products mentioned above is that each represents an individual vendor’s effort to implement a custom microarchitecture under an ARMv8 architectural license. This raises the question: what are Arm’s own plans for the server and infrastructure market? For those following closely, today’s coverage of the new Neoverse line-up shouldn’t come as a complete surprise, as the company first announced the branding and roadmap back in October.

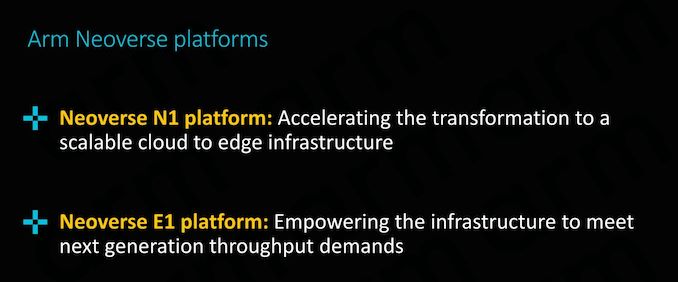

Introducing the Neoverse N1 & E1 platforms: Enabling the Ecosystem

Today’s announcement is all about enabling the ecosystem. We’ll be covering in more detail the two new “platforms” that will be at the core of Arm’s infrastructure strategy for the next few years: the Neoverse N1 and E1 platforms.

In particular, today’s announcement of the Neoverse N1 platform sheds light on what Arm teased back in the initial October release, detailing exactly what “Ares” is and how this server/infrastructure counterpart to the Cortex A76 µarchitecture will bring major performance boosts to the Arm infrastructure ecosystem.

101 Comments


blu42 - Thursday, February 21, 2019

Yep. While ISA may not matter as an aggregate over the set of all tasks, ISAs matter very much when it comes to the performance of any individual task, just the same way as ASICs matter versus gen-purpose CPUs for any given task. One can think of ASICs as an extreme-case specialization of gen-purpose ISAs.

Meteor2 - Wednesday, February 20, 2019

Indeed, and the reality is that all architectures are converging in terms of performance. It’s just a question of how much money any given manufacturer wants to invest. Intel cut R&D and the results are plain. AMD invested wisely. What Apple has achieved with the ARM ISA is phenomenal. Goodness knows what they could do if they turned their attention away from mobile, but goodness knows how much it cost, too.

Vitor - Wednesday, February 20, 2019

Although the article is about servers and such, I can't help thinking that in less than a decade RISC CPUs could overtake the desktop/notebook market. And, correct me if I'm wrong, RISC is inherently more efficient than x86 derivatives.

SarahKerrigan - Wednesday, February 20, 2019

The evidence for "inherently more efficient" is pretty shaky, although I'd venture that validation of ARM cores is considerably simpler than validation of x86. That being said, ARM has been delivering rapidly and consistently on uarch, and Intel has not.

hMunster - Wednesday, February 20, 2019

ARM is playing catch-up to Intel, which got to the point of "no more low hanging fruit" much earlier.

Wilco1 - Wednesday, February 20, 2019

Well, as an example, Intel was unable to design competitive SoCs for the mobile market despite having a process advantage, investing $10+ billion and even paying various companies to use their chips ("contra-revenue"). There is no doubt the complexity of x86 translates into significant overhead in design and verification, area, power and (at the low end) performance.

hMunster - Wednesday, February 20, 2019

The RISC vs. CISC debate does not really matter much anymore.

HStewart - Wednesday, February 20, 2019

A lot of this is because CISC process can now handle multiple microinstructions per clock cycle taking advantage of RISC smaller instruction away. But software compatibility is the major concern here, and Microsoft has made many failed attempts to change this dependency.

FunBunny2 - Wednesday, February 20, 2019

"A lot of this is because CISC process can now handle multiple microinstructions per clock cycle taking advantage of RISC smaller instruction away."

that's a testable assertion. not by me, however. the execution of multiple microinstructions by CISC ISA machines doesn't mean, ceteris paribus, that the overlying CISC instruction runs as efficiently as a native RISC instruction; it just must run through the microinstructions. to the extent that CISC ISAs are really executed as some RISC machine on the silicon, that doesn't mean, apples to apples, that said CISC machine executes as efficiently as a native RISC machine. (native RISC does make headaches for the compiler writer, no doubt.) I'd wager that the real reason for RISC microarch was the desire to continue with X86 object code with a bit more performance back when the transistor budget began expanding, but not enough to build the entire ISA in silicon. and to keep the compiler writer from having to continually update as the real ISA (RISC) keeps changing. die shots of current cpu show that the 'core' is a diminishing percent of the real estate.

the still unanswered question: why did Intel/AMD not use the exploding transistor budget to execute the entire instruction set in hardware, but to create these behind-the-scenes RISC machines?
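The "RISC machine on the silicon" idea discussed in this exchange can be sketched with a toy model in Python: a single CISC-style read-modify-write instruction, `add [addr], reg`, is cracked into three RISC-like micro-ops (load, add, store) that then execute in sequence. The instruction syntax, the micro-op names, the temporary register `tmp` and the register/memory model are all hypothetical simplifications, not a description of how any real x86 decoder works.

```python
# A toy sketch of cracking a CISC-style read-modify-write instruction into
# RISC-like micro-ops. Instruction syntax, micro-op names and the
# register/memory model are hypothetical, not any real x86 decoder.
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class MicroOp:
    op: str      # "load", "add" or "store"
    dst: str     # destination: a register name or a memory address
    srcs: tuple  # sources: register names or memory addresses


def crack_add_mem_reg(addr: str, reg: str) -> list[MicroOp]:
    """Split the hypothetical CISC instruction 'add [addr], reg' into
    load/add/store micro-ops using a temporary register 'tmp'."""
    return [
        MicroOp("load", "tmp", (addr,)),      # tmp <- mem[addr]
        MicroOp("add", "tmp", ("tmp", reg)),  # tmp <- tmp + reg
        MicroOp("store", addr, ("tmp",)),     # mem[addr] <- tmp
    ]


def execute(uops: list[MicroOp], regs: dict, mem: dict) -> None:
    """Execute micro-ops in order against a toy register file and memory."""
    for u in uops:
        if u.op == "load":
            regs[u.dst] = mem[u.srcs[0]]
        elif u.op == "add":
            regs[u.dst] = regs[u.srcs[0]] + regs[u.srcs[1]]
        elif u.op == "store":
            mem[u.dst] = regs[u.srcs[0]]
        else:
            raise ValueError(f"unknown micro-op: {u.op}")


if __name__ == "__main__":
    regs = {"r1": 5}
    mem = {"0x1000": 10}
    uops = crack_add_mem_reg("0x1000", "r1")
    execute(uops, regs, mem)
    print(len(uops), mem["0x1000"])  # 3 15
```

The example only shows that one architectural instruction can map to several internal operations; whether that costs anything relative to a native RISC sequence depends on the decoder and scheduler, which this toy does not model.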

wumpus - Wednesday, February 20, 2019

From memory, DEC was able to make VAX four times faster by pipelining the microcode from VAX instructions compared to "executing the VAX instruction all at once faster". VAX was about the CISCiest CISC that ever CISCed (and sold successfully; I think Intel's BiiN was worse).

DEC also made Alpha, and even its first iteration was another 4 times faster than the "pipelined microcode" VAX.

And this was all single issue. Don't even think of trying to issue multiple "full CISC" instructions at once.
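The benefit of pipelining described above follows from a simple cycle count for an idealized single-issue pipeline: without pipelining, n instructions passing through k stages take n·k cycles, while a pipeline needs k cycles to fill and then retires one instruction per cycle, for k + n - 1 cycles in total. The Python sketch below computes both. The 4-stage, 1,000-instruction inputs are arbitrary, and hazards, stalls and branch mispredictions are ignored, so this is not a model of the actual VAX or Alpha pipelines.

```python
# A minimal sketch of the ideal single-issue pipeline cycle count.
# Stage and instruction counts are arbitrary; hazards and stalls are
# ignored, so this does not model any real VAX or Alpha pipeline.


def unpipelined_cycles(n_instructions: int, n_stages: int) -> int:
    """Each instruction passes through every stage before the next starts."""
    return n_instructions * n_stages


def pipelined_cycles(n_instructions: int, n_stages: int) -> int:
    """Fill the pipeline once, then retire one instruction per cycle."""
    if n_instructions == 0:
        return 0
    return n_stages + (n_instructions - 1)


if __name__ == "__main__":
    stages, count = 4, 1000
    base = unpipelined_cycles(count, stages)
    piped = pipelined_cycles(count, stages)
    print(f"speedup: {base / piped:.2f}x")
```

With those inputs it prints a speedup of about 3.99x; as the instruction count grows, the ideal speedup approaches the stage count k.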