Arm's New Cortex-A78 and Cortex-X1 Microarchitectures: An Efficiency and Performance Divergence

by Andrei Frumusanu on May 26, 2020 9:00 AM EST - Posted in

- SoCs

- CPUs

- Arm

- Smartphones

- Mobile

- GPUs

- Cortex

- Cortex A78

- Cortex X1

- Mali G78

The Cortex-A78 Micro-architecture: PPA Focused

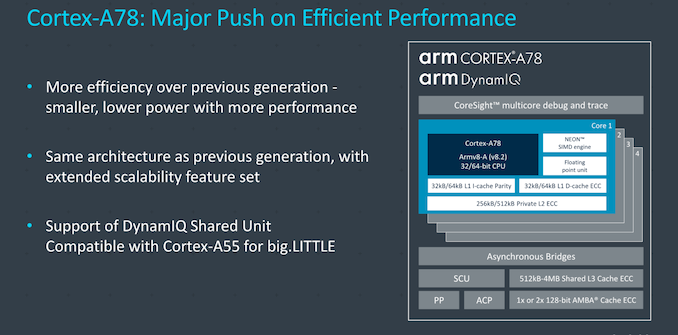

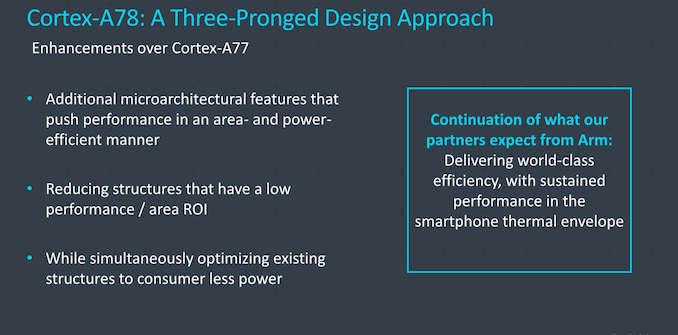

The new Cortex-A78 has been on Arm’s roadmaps for a few years now, and we have been expecting the design to represent the smallest generational microarchitectural jump in Arm’s new Austin family. As the third iteration of Arm's Austin core designs, the A78 follows the sizable 25-30% IPC improvements that Arm delivered on the Cortex-A76 and A77, which is to say that Arm has already picked a lot of the low-hanging fruit in refining its Austin core.

As the new A78 now finds itself part of a sibling pairing alongside the higher-performance X1 CPU, we naturally see the biggest focus of this particular microarchitecture being on improving the PPA (power, performance, area) of the design. Arm’s goals were reasonable performance improvements, balanced with reduced power usage and maintaining or reducing the area of the core.

It’s still an Arm v8.2 CPU, sharing ISA compatibility with the Cortex-A55 CPU with which it is meant to be paired in a DynamIQ cluster. We see similar scaling possibilities here, with up to 4 cores per DSU and an L3 cache scaling up to 4MB in Arm’s projected average target designs.

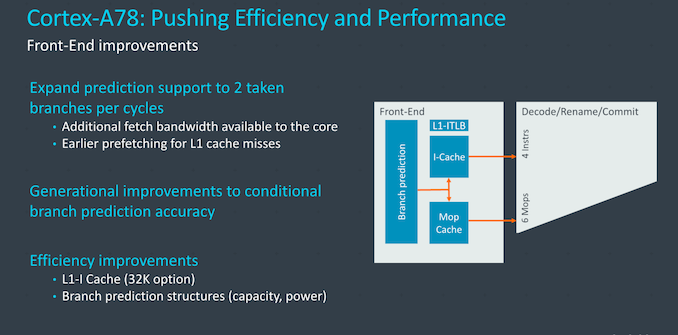

Microarchitectural improvements of the core are found throughout the design. On the front-end, the biggest change has been to the branch predictor, which is now able to process up to two taken branches per cycle. Last year, the Cortex-A77 had introduced a secondary branch execution unit in the back-end; however, the actual branch unit on the front-end still only resolved a single branch per cycle.

The A78 is now able to concurrently resolve two predictions per cycle, vastly increasing the throughput of this part of the core and making it better able to recover from branch mispredictions and the resulting pipeline bubbles further downstream in the core. Arm claims its microarchitecture is very branch-prediction driven, so the improvements here add a lot to the generational improvements of the core. Naturally, the branch predictors themselves have also been improved in terms of their accuracy, which is an ongoing effort with every new generation.
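To put the throughput change in concrete terms, here is a minimal back-of-the-envelope sketch. It assumes a toy front-end that only has to work through a run of taken branches, and it ignores mispredictions, fetch alignment, and every other real-world limit. The function name and the run length of 1000 are hypothetical and only serve the illustration.

```python
import math

def prediction_cycles(taken_branches: int, taken_per_cycle: int) -> int:
    """Cycles a toy predictor needs to work through a run of taken branches.

    Assumes the predictor is the only bottleneck, which is not true of a
    real core; this only illustrates the peak-rate difference.
    """
    return math.ceil(taken_branches / taken_per_cycle)

# One taken branch per cycle (A77-style front-end, per the article)
# versus two per cycle (A78-style front-end).
single = prediction_cycles(1000, 1)
dual = prediction_cycles(1000, 2)
print(f"1 taken/cycle: {single} cycles, 2 taken/cycle: {dual} cycles")
```

In this idealized case the cycle count halves. Real code mixes taken and not-taken branches with other front-end stalls, so the realized gain would be smaller.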

Arm focused on a slew of different aspects of the front-end to improve power efficiency. On the part of the L1I cache, we're now seeing the company offer a 32KB implementation option for vendors, allowing customers to reduce the area of the core for a small hit to performance, but with gains in efficiency. Other changes were made to some structures of the branch predictors, where the company downsized some of the low return-on-investment blocks which had a larger cost in area and power, but didn’t have as large an impact on performance.

The Mop cache on the Cortex-A78 remained the same as on the A77, housing up to 1500 already decoded macro-ops. The bandwidth from the front-end to the mid-core is the same as on the A77, with an up to 4-wide instruction decoder and fetching up to 6 instructions from the macro-op cache to the rename stage, bypassing the decoder.
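As a rough illustration of how the two supply paths combine, the sketch below blends the 4-wide decoder path with the 6-wide Mop cache path. The hit fractions are hypothetical (Arm has not published them here), and the model simply assumes each cycle is served entirely by one path or the other.

```python
DECODE_WIDTH = 4     # instructions per cycle via the decoder (from the article)
MOP_CACHE_WIDTH = 6  # macro-ops per cycle from the Mop cache (from the article)

def average_supply(mop_hit_fraction: float) -> float:
    """Average front-end supply per cycle for a given Mop cache hit fraction.

    Toy model: a cycle is fed either fully from the Mop cache or fully
    from the decoder. The hit fraction is an assumed input, not a
    figure disclosed by Arm.
    """
    if not 0.0 <= mop_hit_fraction <= 1.0:
        raise ValueError("mop_hit_fraction must be between 0 and 1")
    return (mop_hit_fraction * MOP_CACHE_WIDTH
            + (1.0 - mop_hit_fraction) * DECODE_WIDTH)

for hit in (0.0, 0.5, 0.8, 1.0):
    print(f"hit fraction {hit:.1f}: {average_supply(hit):.2f} per cycle")
```

The point of the sketch is only that the sustained bandwidth into rename sits somewhere between 4 and 6 per cycle, depending on how often the Mop cache hits.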

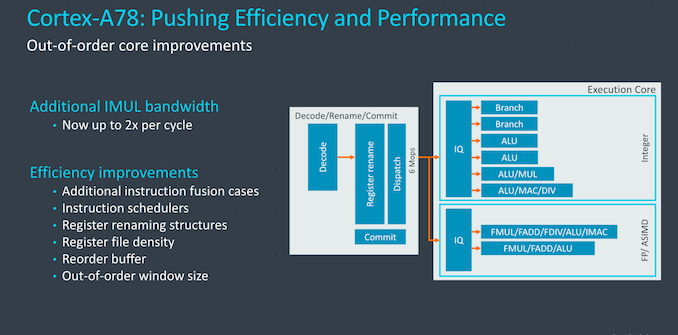

In the mid-core and execution pipelines, most of the work was done with regard to improving the area and power efficiency of the design. We’re now seeing more cases of instruction fusion taking place, which helps not only the performance of the core but also its power efficiency, as it now uses fewer resources, and thus less energy, for the same amount of work.
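The idea behind fusion can be sketched with a toy pass over an instruction stream. Arm has not disclosed which instruction pairs the A78 fuses, so the compare-and-branch pairs in `FUSIBLE_PAIRS` below are purely hypothetical, as are the function name and the sample stream.

```python
# Hypothetical fusible pairs; the A78's actual fusion rules are not public.
FUSIBLE_PAIRS = {("cmp", "b.ne"), ("cmp", "b.eq")}

def fuse(instructions):
    """Merge adjacent fusible pairs into a single macro-op (toy model)."""
    fused = []
    i = 0
    while i < len(instructions):
        pair = tuple(instructions[i:i + 2])
        if pair in FUSIBLE_PAIRS:
            # Both instructions occupy one slot downstream.
            fused.append("+".join(pair))
            i += 2
        else:
            fused.append(instructions[i])
            i += 1
    return fused

stream = ["ldr", "add", "cmp", "b.ne", "mul", "cmp", "b.eq"]
result = fuse(stream)
print(f"{len(stream)} instructions -> {len(result)} macro-ops: {result}")
```

Fewer macro-ops for the same work means fewer entries occupied in the downstream queues and buffers, which is where the efficiency benefit described above would come from.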

The issue queues have also seen quite large changes in their designs. Arm explains that in any OOO core these are quite power-hungry structures, and the designers have made some good power efficiency improvements here, although Arm did not detail any specifics of the changes.

Register renaming structures and register files have also been optimized for efficiency, sometimes seeing a reduction of their sizes. The register files in particular have seen a redesign in the density of the entries they’re able to house, packing in more data in the same amount of space, enabling the designers to reduce the structures’ overall size without reducing their capabilities or performance.

On the re-order-buffer side, although the capacity remains the same at 160 entries, the new A78 improves power efficiency and the density of instructions that can be packed into the buffer, increasing the instructions per unit area of the structure.

Arm has also fine-tuned the out-of-order window size of the A78, actually reducing it in comparison to the A77. The explanation here is that larger window sizes generally do not deliver a good return on investment when scaling up in size, and the goal of the A78 is to maximize efficiency. It’s to be noted that the OOO window here does not refer solely to the ROB, which has remained the same size. Arm employs different buffers, queues, and structures which enable OOO operation, and it’s likely in these blocks that we’re seeing a reduction in capacity.

On the diagram, we see Arm slightly changing its description of the dispatch stage, disclosing a dispatch bandwidth of 6 macro-ops (Mops) per cycle, whereas last year the company had described the A77 as dispatching 10 µops. The apples-to-apples comparison here is that the new A78 increases the dispatch bandwidth from 10 to 12 µops per cycle, allowing for a wider execution core which houses some new capabilities.
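The dispatch figures can be lined up in a few lines of arithmetic. The numbers are the ones quoted above; the ratio of 2 µops per Mop is only what those peak figures imply, not a disclosed property of every instruction.

```python
A77_DISPATCH_UOPS = 10  # µops per cycle, as Arm described the A77
A78_DISPATCH_MOPS = 6   # macro-ops per cycle, as disclosed for the A78
A78_DISPATCH_UOPS = 12  # µops per cycle, the like-for-like A78 figure

# Peak µops per Mop implied by the two A78 figures (an inference).
implied_uops_per_mop = A78_DISPATCH_UOPS / A78_DISPATCH_MOPS

# Generational increase in peak dispatch bandwidth, in percent.
increase_pct = (A78_DISPATCH_UOPS - A77_DISPATCH_UOPS) * 100 / A77_DISPATCH_UOPS

print(f"implied peak µops per Mop: {implied_uops_per_mop}")
print(f"dispatch bandwidth increase over A77: {increase_pct}%")
```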

On the integer execution side, the only big addition has been the upgrade of one of the ALUs to a more complex pipeline which now also handles multiplications, essentially doubling the integer MUL bandwidth of the core.

The rest of the execution units have seen very little to no changes this generation, and are pretty much in line with what we’ve already seen in the Cortex-A77. It’s only next year when we expect to see a bigger change in the execution units of Arm’s cores.

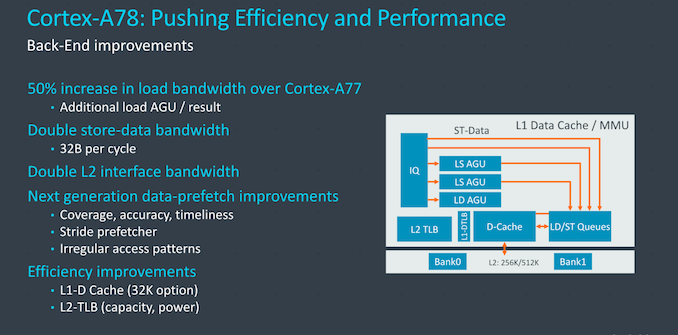

On the back-end of the core and the memory subsystem, we actually find some larger changes for performance improvements. The first big change is the addition of a new load AGU which complements the two existing load/store AGUs. This doesn’t change the store operations executed per cycle, but gives the core a 50% increase in load bandwidth.

The interface bandwidth from the LD/ST queues to the L1D cache has been doubled from 16 bytes per cycle to 32 bytes per cycle, and the core’s interfaces to the L2 have also been doubled in terms of both read and write bandwidth.
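For a sense of scale, the following sketch turns the per-cycle figures into peak numbers. The 3GHz clock is a hypothetical value chosen only for illustration and is not a figure from Arm; actual shipping frequencies depend on the vendor's implementation.

```python
ASSUMED_CLOCK_HZ = 3_000_000_000  # hypothetical clock, not an Arm figure

def peak_bandwidth_gb_per_s(bytes_per_cycle: int,
                            clock_hz: int = ASSUMED_CLOCK_HZ) -> float:
    """Peak interface bandwidth in GB/s at the assumed clock."""
    return bytes_per_cycle * clock_hz / 1e9

# Load address generation: two load/store AGUs before, plus one load AGU now.
loads_before, loads_after = 2, 3
load_increase_pct = (loads_after - loads_before) * 100 / loads_before

# LD/ST queue to L1D interface: 16 bytes per cycle doubled to 32.
before = peak_bandwidth_gb_per_s(16)
after = peak_bandwidth_gb_per_s(32)

print(f"load slots per cycle: {loads_before} -> {loads_after} "
      f"(+{load_increase_pct}%)")
print(f"L1D interface at assumed clock: {before} GB/s -> {after} GB/s")
```

These are peak figures under the stated clock assumption; sustained bandwidth depends on access patterns and cache behaviour.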

Arm seemingly already has some of the most advanced prefetchers in the industry, and here the company claims the A78 further improves the designs in terms of their memory area coverage, accuracy, and timeliness. Timeliness here refers to how quickly they latch onto emerging patterns and bring the data into the lower caches. You also don’t want the prefetchers to kick in too early or too late, such as needlessly prefetching data that’s not going to be used for some time.

Much like the L1I cache, the A78 now also offers a 32KB L1D option that gives vendors the choice to configure a smaller core setup. The L2 TLB has also been reduced from 1280 to 1024 entries; this improves the power efficiency of the structure whilst still retaining enough entries to allow for complete coverage of a 4MB L3 cache, still minimizing access latency in that regard.
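The coverage claim is easy to check with a quick calculation. The sketch below assumes 4KB pages, which is an assumption on our part (Arm cores also support larger page sizes); with that page size, 1024 entries map exactly 4MB.

```python
PAGE_SIZE_BYTES = 4 * 1024  # assumed 4KB pages; larger granules also exist

def tlb_reach_mib(entries: int, page_size_bytes: int = PAGE_SIZE_BYTES) -> float:
    """Memory covered by a TLB with the given number of entries, in MiB."""
    return entries * page_size_bytes / (1024 * 1024)

a77_reach = tlb_reach_mib(1280)  # previous L2 TLB size
a78_reach = tlb_reach_mib(1024)  # new L2 TLB size
L3_SIZE_MIB = 4                  # largest L3 in Arm's projected target designs

print(f"1280 entries cover {a77_reach} MiB, 1024 entries cover {a78_reach} MiB")
print(f"covers a {L3_SIZE_MIB}MB L3: {a78_reach >= L3_SIZE_MIB}")
```

Under this assumption the previous 1280-entry structure reached 5MB, more than the 4MB L3 it needed to cover, which is consistent with the reduction being described as an efficiency gain rather than a capability loss.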

Overall, the Cortex-A78’s microarchitectural disclosures might sound surprising if the core were to be presented in a vacuum, as we’re seeing quite a lot of mentions of reduced structure sizes and overall compromises being made in order to maximize energy efficiency. Naturally this makes sense given that the Cortex-X1 focuses on performance…

192 Comments

Kurosaki - Thursday, June 25, 2020 - link

It's the future!

MarcGP - Tuesday, May 26, 2020 - link

You don't make any sense. You say at the same time:

1) It's not a problem of the SOCs performance but of the poor emulation

2) The emulation software is not the issue but the weak SOCs

Make up your mind, it's one or the other, but it can't be both.

armchair_architect - Tuesday, May 26, 2020 - link

This looks pretty exciting!

What if vendors will go for 2+4 configs (like Apple) with 2 X1 + 4 A78?

Apple has showed that this configuration is really good!

That would be a killer combinations as the littles are practically useless in real scenarios and a slow implementation of a A78 could very well cover the low part of the cluster DVFS curve, for idle.

A55 is super old and doesnt offer any useful performance, I suspect they are only there for the low-power scenarios.

But again, Apple has showed that its out-of-order little cores can be super efficient when implemented at low frequency (I think they run at something like 1.7GHz peak frequency).

I didnt read much information on X1 power, but yes it will for sure be less power efficient than A78 when both of them are running flat out at 3GHz. But, I highly suspect that (like A78 vs A77) on the whole DVFS curve, X1 can be lower power than A78 in the performance regions in which they overlap.

It is simply a matter of being wider and slower, this makes you more efficient. That is the Apple way, wide and slow.

They have the example of iso-performance metric, X1 will need much lower frequencies and voltages to reach a middle-of-the-road performance point (something like ~35 SpecInt, given that projection is 47 SpecInt flat-out). This could easily offset the intrinsic iso-frequency power deficit that the X1 brings.

spaceship9876 - Tuesday, May 26, 2020 - link

I'm surprised they are still using Arm v8.2, that was released in Jan 2016.

Kamen Rider Blade - Tuesday, May 26, 2020 - link

I concur.

In September 2019, ARMv8.6-A was introduced.

https://en.wikipedia.org/wiki/ARM_architecture#ARM...

There's also these new instructions to add in the future:

In May 2019, ARM announced their upcoming Scalable Vector Extension 2 (SVE2) and Transactional Memory Extension (TME)

eastcoast_pete - Tuesday, May 26, 2020 - link

I might be mistaken, but aren't those wide SVEs a co-development with Fujitsu? ARM might simply not have a blanket license to use that jointly developed tech. I am rooting for wider availability of those for my own reasons (video encoding runs much faster if it can use wide extensions). And there is no such thing as too much oomph for working with videos.

SarahKerrigan - Tuesday, May 26, 2020 - link

It's just taking a while for SVE to get in. Future ARM Ltd cores are likely to have it; future server chips from Hisilicon are roadmapped to as well (although at this point, all bets are off due to the ongoing difficulties between the American state and Huawei.)

GC2:CS - Tuesday, May 26, 2020 - link

So Apple is finally defeated now?

A11 was 25%, A12 15%, and A13 just 20% faster than last gen. So A10 is still quite competitive today.

This is higher gain than Apple for a third year in a row.

The question is, how much of that is won by going to the 5nm process ? I heard it is quite advanced compared to 7nm.

tipoo - Tuesday, May 26, 2020 - link

30% faster than the A77 will bring X1 closer to, but probably still under the A13. And A14 will be out by then. Wouldn't call Apple defeated yet, it's easier to make larger gains when you were at half their per core performance a few years ago...

MarcGP - Wednesday, May 27, 2020 - link

30% faster in IPC gains alone (iso-process and frequency). The X1 SOCs will be much faster than that 30% over the current A77 SOCs, much closer to 50% than 30%.