Electromigration: Why AMD Ryzen Current Boosting Won't Kill Your CPU

by Dr. Ian Cutress on June 9, 2020 9:10 AM EST- Posted in

- CPUs

- AMD

- AM4

- Ryzen

- Electromigration

Where there is a will to get extra performance out of a CPU, there is often a way. Either through end-user overclocking or motherboard vendors tweaking settings to improve their stock performance, at the end of the day everyone wants better performance, and for a multitude of reasons. This insatiable drive for peak performance, however, means that some of these tweaks and adjustments can start to skirt the lines of what is ‘in specification’. And as a result, we sometimes see methods of increasing processor performance that clearly deliver on their promises, but perhaps at the expense of thermals or longevity.

To this end, it has recently come to light that motherboard vendors have been taking advantage of a setting on AMD motherboards to misrepresent the current delivered to the CPU. By doing so, they are able to increase the processor's power headroom, and ultimately allowing for higher performance at the cost of higher thermals. To be sure, this kind of tweaking isn’t new, but recent events have lead to no shortage of confusion over what exactly is going on, and what the ramifications are for AMD Ryzen processors. So to try to clarify matters, here’s our take on the situation.

The Old Fashioned Way: Spread Spectrum, MultiCore Enhancement, PL2

One of the common themes I've noticed throughout my time at AnandTech as our motherboard editor and now our CPU editor is the lengths to which motherboard vendors will go to in order to get increased performance over the competition. We were the first outlet to break out features such as MultiCore Enhancement, way back in August 2012, which led to higher-than-specified all-core frequencies, or in some cases, outright overclocks. But the history of motherboard vendors adjusting and tweaking features for performance goes further back than that, such as variations with the base frequency from 100 MHz to 104.7 MHz with the Spread Spectrum, leading to increased performance on systems that can support it.

More recently, on Intel platforms, we’ve seen vendors increase their turbo power limits so that the motherboard can sustain the highest turbo for as long as the world remains in existence, just because the motherboard vendors are overengineering the power delivery in order to support it. In the past couple of weeks, we have also found examples of motherboards ignoring Intel’s new Thermal Velocity Boost requirements, which is something we'll be delving into more in a future article.

In short, motherboard vendors want to be the best, and that often means pushing the limits of what is considered the ‘base specification’ of the processor. As we’ve regularly discussed on topics like this with Intel’s turbo power limits, the differentiation between a ‘specification’ and a ‘recommended setting’ can get quite blurred – for Intel, the turbo power listed in the documents is a recommended setting, and any value the motherboard is set to is technically ‘in specification’. The point at which Intel considers it overclocking it seems is if the peak turbo frequency is increased.

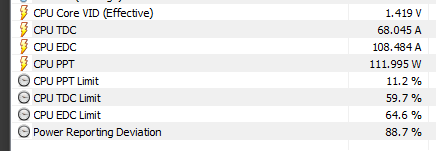

Tweaking AM4 Above and Beyond

So now we move on to the news of the day, with motherboard manufacturers now attempting to tweak AMD based Ryzen motherboards in order to drive higher performance. As thoroughly explained over on the HWiNFO forums by The Stilt and summarized here, AM4 platforms typically have three defined limiters: Package Power Tracking (PPT), which indicates the power threshold that is allowed to be delivered to the socket; Thermal Design Current (TDC), which is the maximum current delivered by the motherboards voltage regulators under thermal limits; and Electrical Design Current (EDC), which is the max current at any time that can be delivered by the voltage regulators. Some of these values are compared to metrics derived internally in the CPU or externally in the power delivery, to see if these limits have been triggered.

In order to calculate the software-based power measurement for which PPT is compared to, the power management co-processor takes the value of current from the voltage regulator management controller. This isn’t an actual value of current, but a dimensionless value (0 to 255) designed to represent 0 = 0 amps, and 255 = peak amps that the VRMs can handle. The power management co-processor on the CPU then performs its power calculation (power in watts = voltage in volts multiplied by current in amps).

The dimensionless value range has to be calibrated on a per-motherboard layout, based on the componentry used (VRMs, Controllers) as well as the tracing, the board layers, and the quality of the design. In order to get an accurate scaler value for this dimensionless range, a motherboard vendor should accurately probe the correct values and then write the firmware to use that look-up table in the system power calculations.

This means that there is a potential way to fiddle with the way that the system interprets the peak power value of the processor. Motherboard vendors can reduce this dimensionless value of current in order to make the processor and the power management co-processor think that there is less power going to the CPU, and as a result, the package power tracking (PPT) limiter has not been yet achieved, and more power can be supplied. This allows the processor to turbo further than was originally intended by AMD.

This has knock-on effects. The processor will be consuming more power, mostly in the form of increased amps, leading to more heat being generated and increased thermals. Because the processor is turboing further (by being allowed to draw more power than what the software is reporting) the processor will also perform better in benchmarks.

As The Stilt points out, if you are running a CPU with a base TDP of 105 W and a PPT value of 142 W, under normal circumstances you should expect to see 142 W power being reported by the CPU at stock settings. However, if the dimensionless current value is only 75% of its real-world current, then the real world power consumption is actually ~190 W, which is the 142 W value divided by the 0.75 factor. Assuming that none of the other limits have been hit (TDC, EDC), the processor will only report 75% of the original PPT power, causing a lot of the confusion.

Is it Out of Specification?

If we are considering PPT, TDC, and EDC to be the be-all and end-all of AMD specifications for power draw and current draw, then yes, this is out of specification. However, PPT by its very nature is going beyond TDP, so we get into this mysterious world of how to define "turbo", similar to what we’ve covered in detail with Intel.

As we’ve previously discussed, in Intel land, the peak power consumed while in a turbo mode is only provided by Intel to motherboard vendors as a ‘recommended value’. As a result, Intel chips will actually accept any value for that peak power limit, including reasonable values like 200 W or 500 W, but even unreasonable values like 4000 W. More often than not (and depending on the processor) a chip might hit other limits first; but for the high-end models, it is certainly worth tracking. Meanwhile the turbo duration, Tau, which defines how big the bucket of energy that Turbo can draw from, can also be extended: instead of the default of between 8 and 56 seconds, Tau can be drawn-out to what's effectively an infinite amount of time. According to Intel, this is all within specification, if the motherboard manufacturers can build boards that can provide it.

What Intel considers out of specification is when the CPU goes beyond the frequencies listed in the turbo tables for Turbo Boost 2.0 (or TBM 3.0, or Thermal Velocity Boost). When the processor runs above the frequency as defined by the turbo tables, then Intel considers this overclocking, and has no obligation to adhere to the chip's warranty.

The problem is that while we can try and transplant the same rules to the AMD situation, AMD doesn’t really use Turbo Tables as such. AMD processors work by attempting to offer the highest possible frequency given the power and current limits at any given time. As more cores are ramped, the power per core decreases, and the overall frequency decreases. We get into the minutiae of frequency envelope tracking, which can get more complex given that AMD can work in 25 MHz steps rather than 100 MHz steps like Intel.

AMD also uses features that push a chip's frequency above the turbo frequency listed on the specifications page. If you wanted to strictly argue about those being overclocking, then judging by the number on the box, it could very well be. AMD purposefully blurs the lines here, but the upside is often more performance.

Is My CPU At Risk?

To answer the big question right off the bat then, no, your CPU is not at risk. For regular users with enough cooling running at stock frequency, there is no issue to any degree that will matter within the expected lifetime of the product.

Most modern x86 processors come with either a three-year warranty for retail boxed parts, or are sold as OEM parts with a one-year warranty. Past those support periods, while AMD or Intel won’t replace the processor in the event of failure, most processors are expected to live well into the 15+ year range. We are still very happily able to test old CPUs in old motherboards, even though they have gone out of service for a long time (and more often than not, it is the old motherboard capacitors that tend to blow up, not the CPU).

When a CPU wafer comes off the manufacturing line, the company get a reliability report about those processors, which helps get a sense of potential avenues for binning those CPUs. This will include elements such as voltage/frequency response, but also as it relates to electromigration.

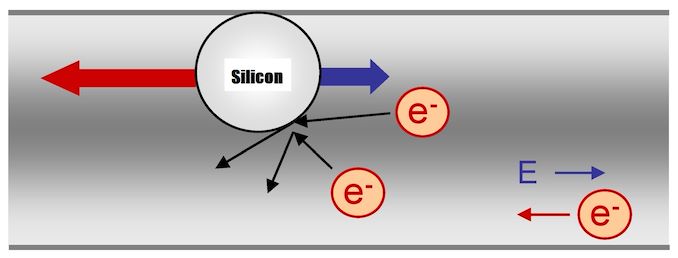

Aside from physical damage, or thermal limits being disabled and the CPU cooking itself, the main way for a modern processor to become non-functional is through electromigration. This is the act of electrons making their way through the wires on a processor and ever so slightly bumping into the silicon (and other elements) in that wire to move them out of the crystal lattice. It is in itself a fairly rare event (how long have your wires been in your house, for example), however at the small scale it can affect change in how a processor works.

Adapted From "Electromigration" by Linear77, License: CC BY 3.0

By moving a metal atom from a wire out of place in a crystal lattice, the cross-section of the wire, at that point, is reduced. This increases the resistance, as resistance is inversely proportional to the cross-sectional area of the wire. If enough silicon atoms are moved out of place, the wire disconnects and the processor is no longer useable. This also happens in trasistors, and is commonly referred to as transitor aging, with the transistor needing a higher voltage over the lifetime of the product (voltage drift).

The amount of electromigration increases under certain conditions – temperature, use, and voltage. One of the main ways to get over the increased resistance is to increase the voltage, which in turn increases the temperature of the processor. It becomes a positive feedback loop, building itself for worse electrical performance, over the lifetime of the processor.

With higher voltage (energy per electron), and higher current density (electrons per unit area), this means that there are more chances for an electron migration event to occur. This can get worse at higher temperatures, and and all these elements act as different factors when it comes down to the amount of electrons that might have enough energy to enable an electromigration event. For anyone studying reaction kinetics, this is a similar principle to concentration but with a variable energy per incident.

So this is bad, right? Well, it used to be. As processor manufacturers and semiconductor fabs have iterated through the design of logic gates in CMOS and FinFET processors, there have been active countermeasures put in place to reduce the levels of electromigration (or reduce the effect of the levels of electromigration). As we shrink process nodes, and voltages decrease, it also becomes less of an issue – the fact that wires also decrease in area has the opposite effect. But as mentioned, the manufacturers now actively take steps to reduce the effect of electromigration inside a processor.

Electromigration has not been an issue for most consumer semiconductor products for a substantial time. The only time I personally have been affected by electromigration issues is when I owned a Sandy Bridge-based 2011 Core i7-2600K, that I used to use for overclocking competitions at 5.1 GHz under some extreme cooling scenarios. It eventually got to a point, after a couple of years, where it needed more voltage to run at stock.

But that was a processor I ran to the ragged edge. Modern day equipment is designed to run for a decade or longer. What we are seeing with these numbers, while there is an increase in thermals due to the increased power, isn’t actually a sizable shift. In The Stilt’s report, because the processor sees that it has extra power headroom, then it raises the voltage slightly in order to get the +75 MHz extra that the budget will allow, which increases the average voltage from 1.32 volts to 1.38 volts during a CineBench R20 run. The peak voltage, which matters a lot for electromigration, only moves from 1.41 volts to 1.42 volts. The overall power was increased 25 W, which makes for around 30A more. Not something on the order of a change in the order of magnitude.

So if I end up with a motherboard that adjusts this perceived current value, will it brick my processor? No. Not unless you have something else seriously wrong with your setup (such as thermals). Within the given lifetime of that product, and the next decade after, it is not likely to make a difference. And as stated previously, even if this did affect electromigration on a large scale, the processor manufacturers have built in mechanisms to deal with it. The only way to actively monitor it, as an end user, would be to observe your average and peak voltage values over the course of years, and see if the processor automatically adjusts itself to compensate.

It is perhaps worth mentioning that the dimensionless current value isn’t adjustable by the end user – it is something the motherboard controls through BIOS updates. If you are a user that overclocks, you are doing more towards electromigration than this adjustment ever will. For those concerned about thermals, then I suspect you are already monitoring and adjusting your BIOS limits as needed for your system.

How To Check if My Motherboard Is Doing It

First, you need to be running a stock system. Changing any of the regular PPT/TDC/EDC already means that the system is being adjusted, so we need to only focus on users dealing with stock systems.

Next, acquire the latest version of HWiNFO, and a test that will cause 100% load on the system, such as CineBench R20.

Inside HWiNFO, there is a metric called “CPU Power Reporting Deviation”. Observe that number while the system is at the full load. A normal motherboard should say ‘100%’, while a motherboard with an adjusted current/VRM reported value will say something below 100%.

Just to clarify, this metric is only valid:

- If your AMD Ryzen CPU is running at full stock settings in the BIOS. No OC, no adjustments to power or current limits.

- When your CPU is running at a full 100% load, such as Cinebench.

If your processor does not match these two requirements, then the value of the Power Reporting Deviation does not mean anything. If it says under 100%, then your motherboard is affected. Please let us know in the comments below.

What Are My Options?

If your motherboard is juicing the processor, but you are happy with the thermal performance of your cooler and the power draw at the wall, then enjoy the extra performance. Even if it is only 75 MHz.

AMD doesn’t necessarily need to comment on the matter, as this is an issue with the motherboard manufacturers. Users might want to probe their motherboard manufacturer, and ask for a BIOS update. Users who want to return their motherboards will have to check on their retailer, as it might depend on where it was purchased.

Given that while it appears to break PPT specifications, it doesn’t actually go beyond any frequency specifications (which are ill defined), it may be similar to how motherboard manufacturers play with power limits on Intel systems, which is to say that it's something that's "just there". Though it would probably be handy to get a BIOS option to enable/disable it.

Related Reading

- The Stilt at HWiNFO Forums

- MultiCore Enhancement (August 2012)

143 Comments

View All Comments

liquid_c - Thursday, June 11, 2020 - link

I don’t know who you are, CiccioB but besides the fact that you seem to have issues using the english language, most of your comments (of which there are many, at least on this article) seem to be nonsensical bullshit. Pardon my french.CiccioB - Thursday, June 11, 2020 - link

Sorry, my native language is not English. Ans the comments cannot be corrected even once you see you made a mistake.And I don't really think to write nonsensical bullshit.

Tell me where my thoughts fail, as I really can see a perfect flow in cause effects that you may miss on your turn.

silverblue - Wednesday, June 10, 2020 - link

The reviewers' BIOS for the X570 Taichi showed a 32% power reporting deviation which would read as about 60W package power in HWINFO when, in actual fact, the load was more like 180W when measured from EPS 12V. BIOS updates have brought this closer to reality (see Gamers Nexus' video on this - https://www.youtube.com/watch?v=10b8CS7wQcM ). Nothing to do with cooling at all.Galcobar - Wednesday, June 10, 2020 - link

Gamers Nexus tested the X570 Taichi with the original reviewers sample BIOS, then again with the most recent BIOS, and discovered the sample BIOS fudging the power draw while the current BIOS reported accurately.It's reasonable to expect the initial BIOS update for X470 boards to allow for Ryzen 3000 processors could follow a similar pattern, if you're not running the most current version.

Khenglish - Tuesday, June 9, 2020 - link

Ian why did you replace the Cu atom in the Linear77 link with an Si atom? In general it's almost impossible to dislodge Si and dopant atoms from their lattice. In fabrication more heat is used to repair a damaged lattice, not cause damage. Electrons are far too light to dislodge Si from a lattice other than from a high power electron beam. Typically when you want to damage an Si lattice you need full atoms for more weight. Metals on the other hand are much easier to get to migrate since you don't really have a lattice to keep atoms stable, and are substantially mobile at much lower temperatures (700C - 1100C used for Si work, with only up to around 400C for metal work).In general electromigration has been less of an issue over time as processes further abandon the use of aluminum. Al is much more susceptible to electromigration than Cu. For many years after Cu was introduced, you still had the bottom 1-2 layers of the metal stack as Al because it takes very little Cu migration into Si to poison it, and Cu diffuses easily into Si. Still having aluminum is why processors were still susceptible to electromigration damage, but with it now mostly gone (replaced by more resistive, but less mobile Cobalt) is why you see higher voltages up to 1.5V returning for standard use on Ryzen CPUs, while this is a voltage that would cause too high of a current density and would slowly kill older processors like 32nm SB.

State of Affairs - Tuesday, June 9, 2020 - link

>Ian why did you replace the Cu atom in the Linear77 link with an Si atom?So I am not the only one who noticed that. One difference between Cu and Si is that the latter is covalently bonded within its diamond-structured lattice. Those covalent bonds are strong and it takes very high energy electrons to initiate displacement.

CiccioB - Wednesday, June 10, 2020 - link

The real difference is that removing wire atoms, be them Al or Cu, creates an avalanche effects as the more you remove the narrower it becomes and higher the resistance which increments temperature that accelerates the removal of other atoms of the wire.Khenglish - Wednesday, June 10, 2020 - link

Yes absolutely. Electromigration happens when there is a high enough current density and temperature to begin pushing metal atoms. As some atoms get pushed away forming a void, current density increases more for even more rapid electromigration.I've been reading up more on electromigration and it's definitely a complicated area. Here's a summary of key points:

1. Smaller processes continually result in higher current density, as a reduced design will have half the wire length, but less than half of the cross-sectional area as width and depth both reduce.

2. FinFETs made the problem worse, as a FinFET occupying the same area as a planar FET will have more width (3d fin) and can push more current, but interconnects are the same size.

3. There's much more than just the abandonment of aluminum to improve electromigration in recent years. A Cobalt sheath performs much better as a surface to wet Copper to than the traditional TaN sheath, reducing electromigration. Another traditional issue was the top of a copper interconnect would be abutted against an insulator, which it would poorly wet to and cause additional migration at this boundary, even more than a TaN sheath. Doping the top of the copper with Manganese improved the bond, and today fully wrapping the copper wire in Cobalt improved the issue even more. An additional improvement was to zig-zag interconnects at regular intervals so that the 90 degree elbow would serve as a backstop against migration, either preventing it from occurring in the first place, or provide increasing back pressure to prevent more from occurring after it had begun.

Spunjji - Monday, June 15, 2020 - link

This was a really helpful addition to the article. Thank you!WaltC - Tuesday, June 9, 2020 - link

Great article, Ian. Thank you for taking the time to put these facts in front of people. I run my 3900X at PPT of 330, 240, 120 (on the other values)--When I run the HWinfo power deviation--it shows a max of 350%, current of value 180%--at idle, doing *nothing* apart from running HWInfo. When I run the little bench in CPU-Z on top of running HWInfo, max and current readings drop to 75%, each...;) As you can see, I'm not stock, so the stat has little meaning for me at all. It seems nonsensical, actually.Last time I experienced eletromigration was on a 130nm Intel CPU years ago. People would overvolt them overclocking and eventually render them useless--made keychains out of the dead ones, IIRC. You are absolutely correct as to why there is almost no chance of that happening today! CPUs--especially Ryzen--are much too advanced in design, as you mentioned. Intel and AMD both use FINFET, and other things.

Glad to see the timeliness of your remarks. Tom's H really should have done a lot more legwork before publicizing such half-baked theories. Instead, Tom's Hardware published the half-baked theory and promised to do the legwork later--to see if it was true...;) Sad.