iBUYPOWER Erebus GT Review: Ivy Bridge and NVIDIA's GeForce GTX 680 in SLI

by Dustin Sklavos on April 27, 2012 2:00 AM EST

Gaming Performance

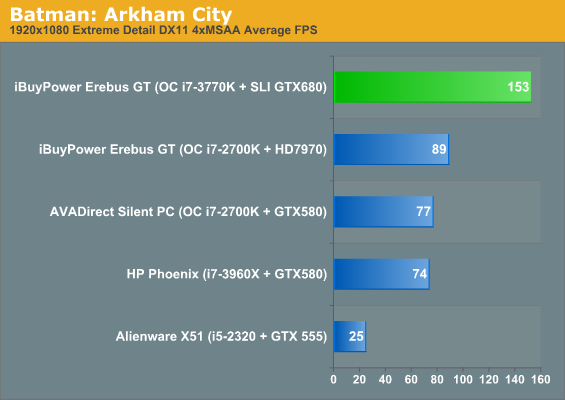

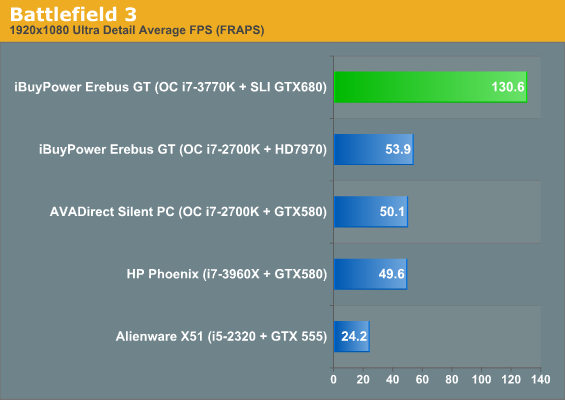

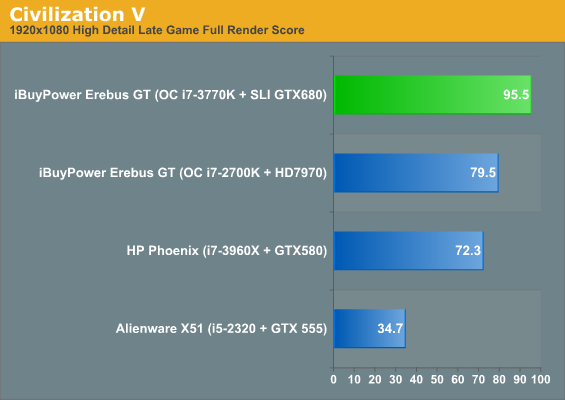

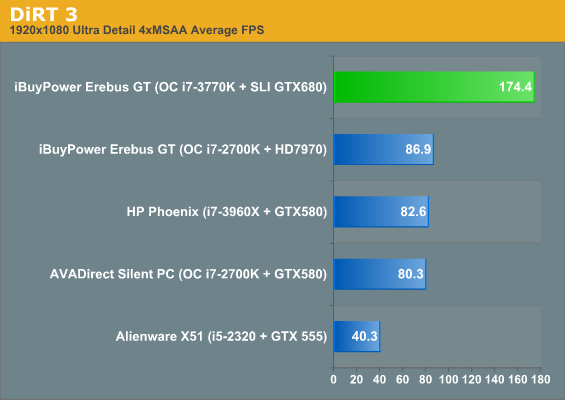

Given what we saw on the previous page from the pair of GeForce GTX 680s in the iBUYPOWER Erebus GT, it's reasonable to assume they'll land at or near the top of every chart. Thankfully, we're starting to accumulate a decent amount of data to draw comparisons from with our new gaming suite.

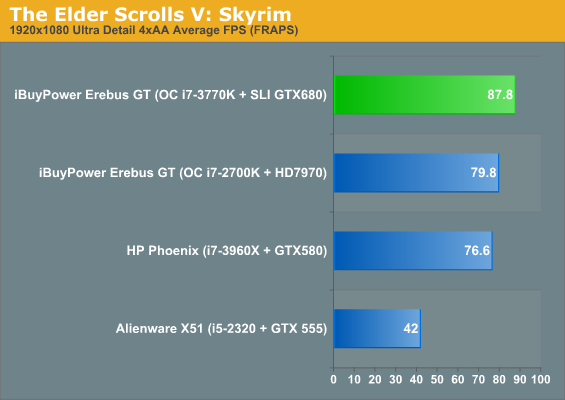

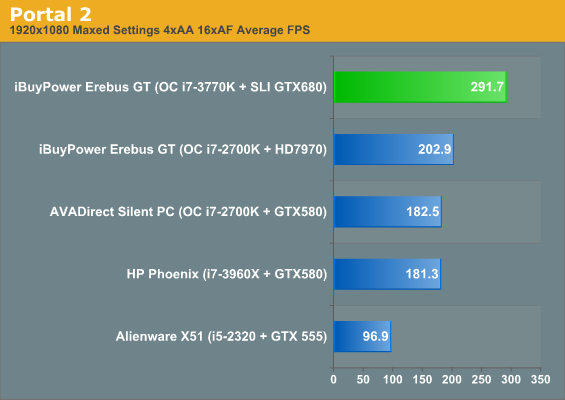

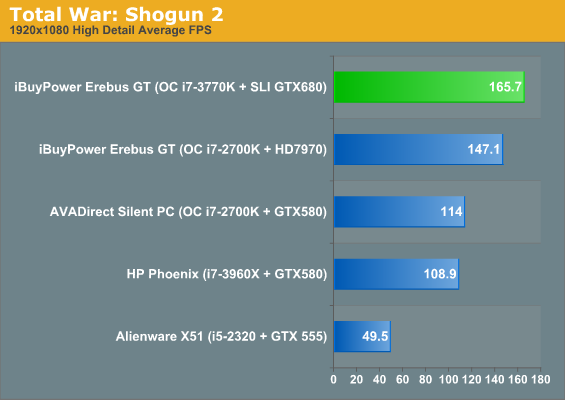

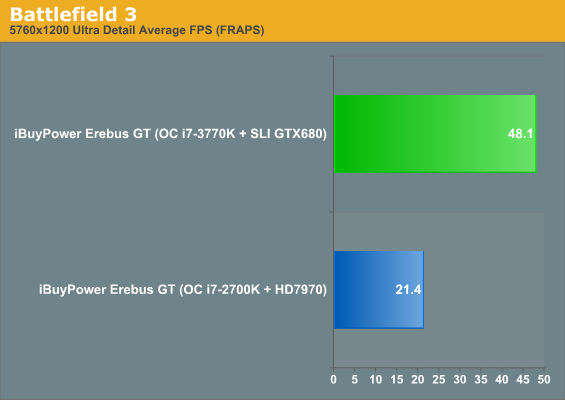

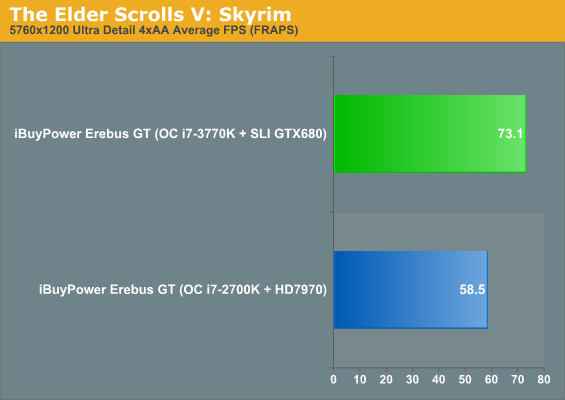

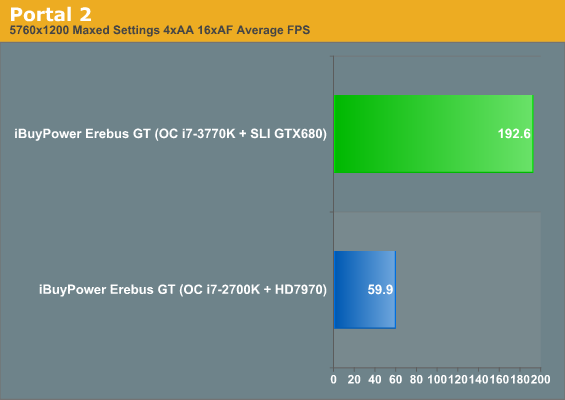

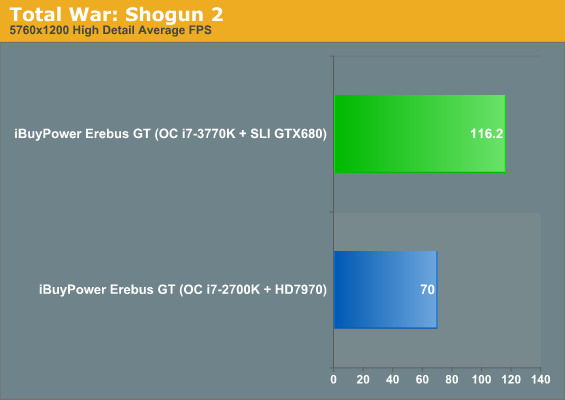

Bottom line, two GTX 680s are essentially excessive for 1080p. That's to be expected, but I was so stunned by the performance in Battlefield 3 that I actually had to double-check my results. Battlefield 3 has been fairly punishing on most of the systems I've tested, but the GTX 680s just brush it off. In other titles, we hit CPU limits before the GPUs can hit their stride. Civilization V, Elder Scrolls: Skyrim, and Total War: Shogun 2 are clearly CPU limited at this point, and Portal 2 is only somewhat less so.

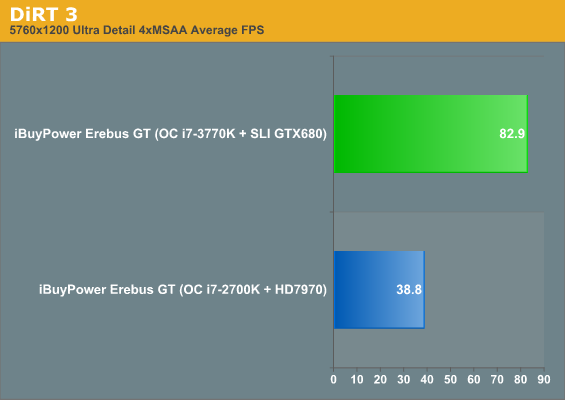

At the same time, everything isn't quite sunny for SLI right now. Since the GTX 680 is fairly new, each driver release from NVIDIA is going to become that much more important. The 301.10 drivers, for example, weren't entirely stable compared to the 301.24 betas, which could run DiRT 3 in surround without issue. I also had trouble actually configuring surround in the first place on the 301.10s, problems that didn't resurface in the 301.24s. The 301.10s also produced substantially lower SLI performance in Portal 2 (still 130+ fps) than the 301.24s.

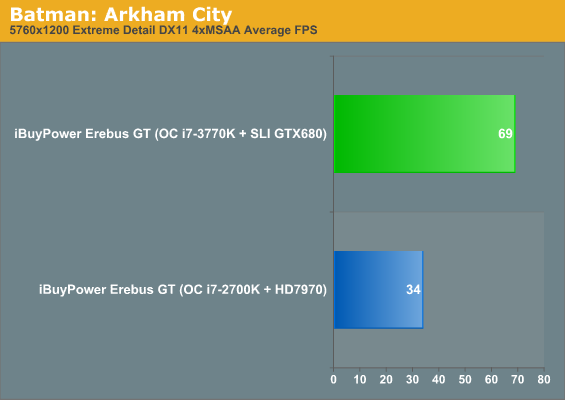

I'm sure it surprises no one that the pair of GTX 680s is able to provide playable experiences across every game at our highest resolution and settings. Battlefield 3 does bring the hammer down, though; triple the resolution and performance drops roughly linearly to about a third of what it was. Still, if you want to run at surround resolutions with anti-aliasing enabled, the GTX 680s can do it. Interestingly, Skyrim still appears to be hitting CPU bottlenecks even at 5760x1200.
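To make the "triple the resolution, a third of the performance" reasoning concrete, here is a minimal sketch of how frame rate would scale if a game were purely GPU (pixel) bound. The single-screen baselines and the 120 fps figure are illustrative assumptions, not measurements from this review, and the function names are hypothetical.

```python
# Sketch: expected frame rate under ideal, purely pixel-bound scaling.
# Assumption: frame time grows linearly with the number of pixels rendered.
# Real games deviate from this, and CPU-limited titles barely scale at all.

SINGLE_1080P = (1920, 1080)   # assumed single-monitor baseline
SINGLE_1200P = (1920, 1200)   # alternative baseline, one panel of the surround set
SURROUND = (5760, 1200)       # surround resolution named in the article


def pixel_count(resolution):
    """Return the total number of pixels for a (width, height) pair."""
    width, height = resolution
    return width * height


def pixel_ratio(target, baseline):
    """How many times more pixels the target resolution has than the baseline."""
    return pixel_count(target) / pixel_count(baseline)


def gpu_bound_fps(baseline_fps, baseline, target):
    """Estimate fps at the target resolution if the GPU is the only limit."""
    return baseline_fps / pixel_ratio(target, baseline)


if __name__ == "__main__":
    hypothetical_fps = 120.0  # illustrative number, not a benchmark result
    for name, base in (("1920x1080", SINGLE_1080P), ("1920x1200", SINGLE_1200P)):
        ratio = pixel_ratio(SURROUND, base)
        estimate = gpu_bound_fps(hypothetical_fps, base, SURROUND)
        print(f"{name} -> 5760x1200: {ratio:.2f}x pixels, ~{estimate:.0f} fps")
```

A title that falls close to this estimate is GPU limited at surround resolution. One that loses far less than the pixel ratio predicts, as Skyrim appears to, is likely being held back by the CPU instead.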

64 Comments

JarredWalton - Friday, April 27, 2012 - link

Based on previous pricing by iBUYPOWER, I'd guess they'll end up charging around $3000. However, we can't really print that since it's just a guess. :-p

awall13 - Friday, April 27, 2012 - link

Would be nice to see noise measurements for this water cooled setup. Seems to me that it would be an important consideration for a potential buyer compared to building their own (more likely air-cooled) setup.

ewood - Friday, April 27, 2012 - link

Normally I would overlook this little slip up but it is the third time I have heard an anandtech article refer to Lynnfield as a tick when in fact it is a tock. It did not use a new fab process and it did employ a new architecture. Lynnfield was a tock. Get it right, you're a hardware site.

JarredWalton - Friday, April 27, 2012 - link

Sorry, you're right. I screwed up and looked at Lynnfield. The article has been adjusted, but in my above comment, if you just replace "Lynnfield" with "Clarkdale" not a whole lot changes. Clarkdale was very underwhelming, and Gulftown is highly specialized. I never actually ran either one other than seeing a hex-core Gulftown stuffed into a Clevo X7200. :-\

The problem is that Intel made comparisons very messy with Westmere. On the desktop we had Clarkdale (dual-core plus IGP) and Gulftown (hex-core and no IGP). On laptops we had Arrandale (basically just mobile Clarkdale). There were no mainstream quad-core Westmere parts, so you had mainstream dual-core or high-end hex-core and never the twain shall meet.

Anyway, don't feel too superior for catching the error -- try writing about technology and code names for a few years and I can pretty much guarantee you'll make some mistakes. Heck, just read the tech junky posts in hardware forums and even the best people make mistakes.

JarredWalton - Friday, April 27, 2012 - link

And yes, I know that Clarkdale's IGP was actually 45nm on package.

web2dot0 - Friday, April 27, 2012 - link

Isn't there something fundamentally wrong with people using 1200W of power to play computer games? Considering that there is a power shortage all over California, it's pretty abusive to hoard all that juice when there are better ways to spend it.

Not trying to be judgemental or anything, but there should be regulation on energy consumption for computers that are not work-related. No different than emission standards for cars and such.

UltimateKitchenUtensil - Saturday, April 28, 2012 - link

1200 W is just the power supply's capacity. If the components draw less than that (in this case, much less than that), the supply will only give them what they need. And it will do it very efficiently, since the AX-1200 is 80+ Gold.

In this case, even though the power supply is rated 1200 W, the system, under load, only consumes 471 W of power. Some of it is wasted, but over 80% is used by the system.
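The arithmetic behind that point can be sketched as follows. This assumes the 471 W figure is measured at the wall and uses 87% as a conservative efficiency floor for an 80+ Gold unit. Both are assumptions for illustration, not figures from the review, and the function names are hypothetical.

```python
# Sketch: how much of the wall draw reaches the components, and how lightly
# a 1200 W supply is loaded. The efficiency value is an assumed floor for an
# 80+ Gold unit, not a measured number for this specific power supply.

RATED_WATTS = 1200.0        # power supply capacity from the comment above
WALL_DRAW_WATTS = 471.0     # load power from the comment, assumed measured at the wall
ASSUMED_EFFICIENCY = 0.87   # assumption: conservative 80+ Gold efficiency


def delivered_watts(wall_watts, efficiency):
    """Power that actually reaches the components after conversion losses."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in the range (0, 1]")
    return wall_watts * efficiency


def conversion_loss_watts(wall_watts, efficiency):
    """Power dissipated as heat inside the power supply."""
    return wall_watts - delivered_watts(wall_watts, efficiency)


def load_fraction(component_watts, rated_watts):
    """Fraction of the supply's rated capacity that is actually in use."""
    return component_watts / rated_watts


if __name__ == "__main__":
    delivered = delivered_watts(WALL_DRAW_WATTS, ASSUMED_EFFICIENCY)
    lost = conversion_loss_watts(WALL_DRAW_WATTS, ASSUMED_EFFICIENCY)
    fraction = load_fraction(delivered, RATED_WATTS)
    print(f"delivered: {delivered:.1f} W, lost: {lost:.1f} W, load: {fraction:.0%}")
```

Under these assumptions the supply runs at roughly a third of its rated capacity, so the 1200 W rating describes headroom rather than consumption.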

web2dot0 - Saturday, April 28, 2012 - link

I'm sure having an 80+ Gold PSU is great, but you are still using a lot of power. I'm guessing if you plan to have an SLI config, you are going to OC the CPU, and everything else.

A regular PC consumes < 150W, this PC is using 471W.

I'm also guessing that people who buy these bad boys aren't exactly casual gamers, so these PCs will be under load way more often than an average PC.

Just saying ....

buzznut - Saturday, April 28, 2012 - link

It's funny, AMD comes out with a product that equals its previous efforts and it's a major fail. Why do we find it so easy to forgive Intel?

"I feel like a lot of us were hoping for either a bigger performance improvement or better overclocking headroom due to the new process, but what we have instead is a chip that, when pushed to its conventional limits, is essentially still only the equal of its predecessor in terms of performance."

And doesn't overclock as well. Aren't you glad you ran out and bought that Z77 board?

Obviously it has a little to do with power and efficiency. At least BD is much more capable with respect to overclocking. I am very underwhelmed.

Which is what confuses me. Ivy Bridge has been overhyped as much as Bulldozer was. People really should be asking, "what happened?"

Dustin Sklavos - Saturday, April 28, 2012 - link

Ivy Bridge is still directly superior to Sandy Bridge in almost every way BUT overclocking headroom. I'm underwhelmed by Ivy Bridge, but it's much more efficient in terms of power consumption than Sandy Bridge. Before overclocking, you get slightly better performance for much less power.

Bulldozer was in many ways a step BACK from Deneb and Thuban. The FX-8150 should always have at least equalled the Phenom II X6 1100T, but in certain cases it was actually slower.

That, and everyone wanted and needed Bulldozer to do well for AMD's sake, the sake of the market, the sake of the community. Nobody was really looking at Sandy Bridge and going "boy, if Intel could make this thing faster we'd all be better off."