The ARM vs x86 Wars Have Begun: In-Depth Power Analysis of Atom, Krait & Cortex A15

by Anand Lal Shimpi on January 4, 2013 7:32 AM EST - Posted in Tablets, Intel, Samsung, Arm, Cortex A15, Smartphones, Mobile, SoCs

Late last month, Intel dropped by my office with a power engineer for a rare demonstration of its competitive position versus NVIDIA's Tegra 3 when it came to power consumption. Like most companies in the mobile space, Intel doesn't just rely on device level power testing to determine battery life. In order to ensure that its CPU, GPU, memory controller and even NAND are all as power efficient as possible, most companies will measure power consumption directly on a tablet or smartphone motherboard.

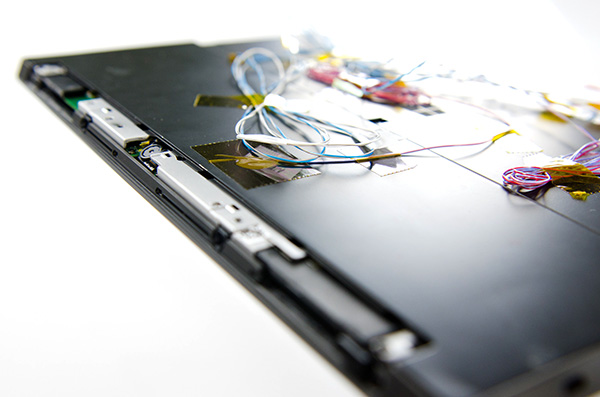

The process would be a piece of cake if you had measurement points already prepared on the board, but in most cases Intel (and its competitors) are taking apart a retail device and hunting for a way to measure CPU or GPU power. I described how it's done in the original article:

Measuring power at the battery gives you an idea of total platform power consumption including display, SoC, memory, network stack and everything else on the motherboard. This approach is useful for understanding how long a device will last on a single charge, but if you're a component vendor you typically care a little more about the specific power consumption of your competitors' components.

What follows is a good mixture of art and science. Intel's power engineers will take apart a competing device and probe whatever looks to be a power delivery or filtering circuit while running various workloads on the device itself. By correlating the type of workload to spikes in voltage in these circuits, you can figure out what components on a smartphone or tablet motherboard are likely responsible for delivering power to individual blocks of an SoC. Despite the high level of integration in modern mobile SoCs, the major players on the chip (e.g. CPU and GPU) tend to operate on their own independent voltage planes.
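The correlation step can be made concrete with a small sketch. This is not Intel's tooling. It assumes a CPU-only workload is toggled on and off on a known schedule while readings are logged from each candidate circuit, and it ranks the circuits by Pearson correlation with that schedule. The probe names, the schedule and all readings are hypothetical.

```python
"""Sketch: rank candidate probe points by how well they track a workload.

Hypothetical setup: a CPU-only workload is toggled on and off on a fixed
schedule while readings are logged from several candidate power-delivery
circuits. The circuit whose readings best follow the schedule is the most
likely supply for the CPU block. Names and numbers are made up.
"""
from math import sqrt
from typing import Dict, List, Sequence, Tuple


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sqrt(sum((x - mx) ** 2 for x in xs))
    sy = sqrt(sum((y - my) ** 2 for y in ys))
    if sx < 1e-12 or sy < 1e-12:
        return 0.0  # a flat series says nothing about the workload
    return cov / (sx * sy)


def rank_probes(activity: Sequence[float],
                probes: Dict[str, Sequence[float]]) -> List[Tuple[str, float]]:
    """Return (probe name, correlation) pairs, best match first."""
    scores = [(name, pearson(activity, readings)) for name, readings in probes.items()]
    return sorted(scores, key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # 1 = CPU workload running, 0 = idle (hypothetical schedule)
    cpu_activity = [0, 0, 1, 1, 0, 0, 1, 1, 0, 0]
    probes = {
        "probe_a": [0.90, 0.91, 1.10, 1.12, 0.90, 0.89, 1.11, 1.10, 0.91, 0.90],
        "probe_b": [1.20, 1.21, 1.20, 1.19, 1.21, 1.20, 1.20, 1.21, 1.19, 1.20],
    }
    for name, r in rank_probes(cpu_activity, probes):
        print(f"{name}: r = {r:+.2f}")
```

In keeping with the "art and science" caveat, a high score only flags a circuit worth instrumenting; it does not prove which block that circuit feeds.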

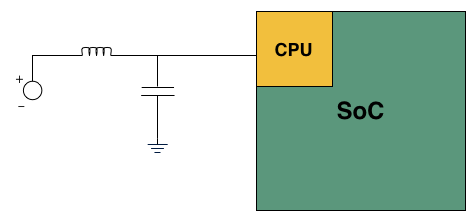

A basic LC filter

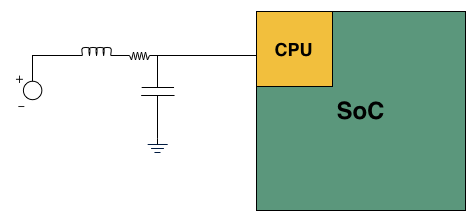

What usually happens is you'll find a standard LC filter (inductor + capacitor) supplying power to a block on the SoC. Once the right LC filter has been identified, all you need to do is lift the inductor, insert a very small resistor (2 - 20 mΩ) and measure the voltage drop across the resistor. With voltage and resistance values known, you can determine current and power. Using good external instruments (NI USB-6289) you can plot power over time and now get a good idea of the power consumption of individual IP blocks within an SoC.

Basic LC filter modified with an inline resistor
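The arithmetic behind the inline-resistor trick is short enough to sketch. Dividing the drop across the resistor by its resistance gives current, multiplying by the rail voltage gives power, and integrating power over the run gives energy. The sketch assumes a DAQ has already logged both the resistor drop and the rail voltage at a fixed rate. The 10 mΩ value is one pick from the 2 - 20 mΩ range mentioned above; the sample rate and readings are made up.

```python
"""Sketch: turn sense-resistor readings into current, power and energy.

Assumes two channels were captured at a fixed sample rate: the voltage
drop across the inserted sense resistor and the rail voltage feeding the
block. All numbers below are hypothetical.
"""
from typing import Sequence


def rail_power(v_drop: float, v_rail: float, r_sense: float) -> float:
    """Instantaneous power (W) delivered to the block behind the resistor."""
    current = v_drop / r_sense  # Ohm's law: I = V_drop / R
    return v_rail * current     # P = V_rail * I


def energy_joules(power_samples: Sequence[float], sample_rate_hz: float) -> float:
    """Integrate power over time with the trapezoidal rule."""
    dt = 1.0 / sample_rate_hz
    return sum((a + b) / 2.0 * dt for a, b in zip(power_samples, power_samples[1:]))


if __name__ == "__main__":
    R_SENSE = 0.010       # 10 mOhm, inside the 2-20 mOhm range
    SAMPLE_RATE = 1000.0  # hypothetical 1 kHz logging rate
    v_drops = [0.0020, 0.0050, 0.0080, 0.0050, 0.0020]  # volts across resistor
    v_rails = [1.00, 1.00, 1.05, 1.00, 1.00]             # volts at the block

    powers = [rail_power(d, v, R_SENSE) for d, v in zip(v_drops, v_rails)]
    print("power (W):", [round(p, 3) for p in powers])
    print("peak power (W):", round(max(powers), 3))
    print("energy (mJ):", round(energy_joules(powers, SAMPLE_RATE) * 1000, 4))
```

Logging the rail voltage alongside the drop, rather than assuming a fixed value, matters on SoCs that scale voltage with frequency.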

The previous article focused on an admittedly not too interesting comparison: Intel's Atom Z2760 (Clover Trail) versus NVIDIA's Tegra 3. After much pleading, Intel returned with two more tablets: a Dell XPS 10 using Qualcomm's APQ8060A SoC (dual-core 28nm Krait) and a Nexus 10 using Samsung's Exynos 5 Dual (dual-core 32nm Cortex A15). What was a walk in the park for Atom all of a sudden became much more challenging. Both of these SoCs are built on very modern, low-power manufacturing processes, and Intel no longer has a performance advantage compared to Exynos 5.

Just like last time, I calibrated all displays to our usual 200 nits setting and kept the software and configurations as close to equal as possible. Both tablets were purchased at retail by Intel, but I verified their performance against our own samples/data and noticed no meaningful deviation. Since I don't have a Dell XPS 10 of my own, I compared its performance to the Samsung ATIV Tab and confirmed that things were at least performing as they should.

We'll start with the Qualcomm-based Dell XPS 10...

140 Comments


tahyk - Friday, January 4, 2013

I've read that review. That's a total scam. They're comparing the Atom N270, aka the original 2008 netbook chip, to the latest and greatest ARM. This article is about the Atom Z2760, so it compares 2012 x86 to 2012 ARM - a whole lot more fair.

mugiebahar - Friday, January 4, 2013

While I always enjoy reading here, I have to admit this article is not one of those times. I'm not slinging mud or anything; rather, I think it's highly subjective to consider that at this point Intel is in good standing to make inroads. I agree Intel has the technology, money, resources and willpower to make a killer chip that sips power better than anyone. But as so many have pointed out, and this cannot be changed, Intel doesn't have the ability to do it at a cheap, competitive price. ARM has always been, and will always be, better at that. It's all about how a company is built. ARM doesn't need to finance a foundry, much less several like Intel. With overhead and size come problems changing business models, especially in manufacturing. While we don't know yet what the future holds as to the amount of things we will do on a phone, I can guarantee I won't be ripping a DVD or making CAD drawings on it. So fundamentally we will hit a wall where more performance is not worth the cost. Am I wrong? I know what the article is pointing to: a strong, class-leading, watt-sipping Intel. But they cannot win or be as noteworthy as the article suggests. You can't ask a company to devalue its products. Why? Because you lose either way: 1) you look desperate, or 2) you acknowledge that you were ripping people off before. Even if they had legitimate reasons for their pricing, or technology brought prices down, it's the perception that's the killer. Is Atom bad? No. But ask the regular Joe and he'll tell you it's crap. Why? Price and perception. Intel did some things right and some wrong. They should have realized a while back that desktops were good enough and pushed mobile chips toward better, lower-cost production. But a company so top-heavy can't switch just like that. Now either they will have to restructure to be competitive in the mobile arena, or just play second fiddle.
But right now (unless they change) they can't be a mobile king the way they are on the desktop, only because of company structure, nothing else.

jemima puddle-duck - Friday, January 4, 2013

I'd echo this sentiment. I'm getting less and less interested in whether so-and-so has made something slightly better than so-and-so. Chances are this Atom will never see the inside of more than one or two phones. I want to know why! This is the insight AnandTech, with its extensive contacts, can deliver! I guess what I'm asking for is more politics and less technology :-)

vngannxx - Friday, January 4, 2013

AnandTech should rerun the Nexus 10 benchmark with the AOSP browser.

mugiebahar - Friday, January 4, 2013

Intel is the 800lb gorilla, there's no doubt about that. The problem is it's not stuck in a room with a monkey and a chimpanzee (AMD and VIA) this time; it's in a room with a lion (Apple), a tiger (say, Samsung), a Siberian tiger (Qualcomm), a mountain lion (TI), a baby cub (AMD) and a litter full of Chinese cats. So the gorilla is the strongest, but now he might just get the hair bitten off his ass cheeks if he doesn't watch it. Please feel free to reorder the cats to better match whoever, as I just thought of it quickly, but I think you can agree. True?

UpSpin - Friday, January 4, 2013

Intel PR, nothing more. This article is misleading, confusing and compares totally different things. It's a shame to see such a badly written article on AnandTech, full of meaningless, misleading graphs which just sit there without any further descriptive text. If an image isn't worth some text, it isn't worth showing at all!

1. There's no use in showing, let alone comparing, Total Power Consumption numbers, because the systems are totally different. So don't show them! Anything else is misleading, most probably on purpose, because the absolutely low-end Intel device logically looks good in this comparison. But please, if you can't compare things, don't try to compare them. And if you can't compare them, also don't use such numbers further, like in Task Energy Total Platform. It's useless.

You can't measure Qualcomm chips correctly, so you include Total Platform power draw? Poor excuse. If you can't measure it, don't post it; don't post false and misleading numbers.

2. What is Average Power Draw? What's the use of it? You don't use those graphs in your article at all! Do you know what this means? Exactly: those graphs are useless and meaningless. Why do you post them? They are redundant because of the Energy graphs. Naturally, because of the much shorter run time of the A15 SoC, the Average graph looks disadvantageous for ARM, which is simply misleading. But, well, Intel is probably happy you posted it and thanked you with cash; why else would a sane person post such misleading stuff?

3. GPU Power: What game? How did it run? Off-screen? The same resolution on every tablet? The same API? The same FPS? It's not surprising that a low-end GPU struggling to keep maybe 10 FPS consumes less power than a high-end GPU displaying 60 FPS at a higher resolution. You haven't said anything about this issue, yet you happily compare meaningless numbers.

I'm sorry, but this article is, right now, garbage. And the only reason for posting such a poorly written article is that Intel must have paid you a lot of money for doing so.

It's nice that you post such semi-scientific articles, but the way you did it in this case isn't great.

This article is very very hard to read, because the reader has to do ALL the interpretation.

You could remove 2/3 of all graphs, and the article would contain the same information.

By just looking at the graphs, Intel is the overall winner, which is wrong if you do some further comparisons based on your article. At most, according to your graphs, Intel is on par with A15 CPU-wise, which is still a nice outcome for Intel.

The GPU is awful in the Intel SoC, the CPU competitive.

The A15 GPU is perfect, the CPU at least as efficient as the Intel one, but much faster.

Yet, because the article is so confusing and I don't want to waste any further time doing the work a good writer should have done, I see ARM as the clear winner.

Same or more efficient CPU, much faster CPU, much better GPU, overall winner!

Similar argumentation for Tegra and Krait.

Intel has a good CPU, but the SoC looks awful.

powerarmour - Friday, January 4, 2013

"I see ARM as the clear winner.

Same or more efficient CPU, much faster CPU, much better GPU, overall winner!

Similar argumentation for Tegra and Krait.

Intel has a good CPU, but the SoC looks awful."

That was exactly my conclusion reading through it, I just couldn't be bothered to be eloquent enough to explain it like that as it seemed obvious to me.

I look at the SoC as a whole, and apart from a 'slight' advantage on the CPU side in a few select (and likely x86 optimized) browser benchmarks, the Clover Trail SoC is really quite lacklustre.

mfergus - Friday, January 4, 2013

The GPU in the Clover Trail SoC isn't even made by Intel. They could swap it out for anything they wanted, though they want it to be an in-house GPU.

Cold Fussion - Saturday, January 5, 2013

I concur, the article is pretty bad as it stands. Apart from all the poorly presented information, it should have included tests on an Android tablet running the same Krait SoC as the Windows tablet, so we could establish how the different operating systems affect power draw. Without that I don't see how they can reasonably establish the differences between A15 and the others.

wsw1982 - Friday, January 11, 2013

http://www.phonearena.com/news/Intel-Atom-powered-...

Check this out... Clover Trail+ in the Lenovo K900 smartphone scores more than 25000 in AnTuTu on a 1080p display, which just crushes the Snapdragon Pro (4 Krait) in the Optimus G, and the beloved Samsung Exynos 5440 (2 A15) in the Nexus 10...

So, what's gonna be the next outcry from the ARMy: "Intel cannot make low-power chips"? Oh, no, that's already busted. Then I guess it's gonna be "Intel cannot sell smartphone chips as cheap as others" or "We don't care about performance and we don't care about battery life, we just care about compatibility with iOS".