The NVIDIA GeForce GTX 750 Ti and GTX 750 Review: Maxwell Makes Its Move

by Ryan Smith & Ganesh T S on February 18, 2014 9:00 AM EST

Maxwell’s Feature Set: Kepler Refined

To start our look at the Maxwell architecture, we’ll begin with the feature set, as this is the shorter and easier of the subjects to cover.

In short, Maxwell only offers a handful of new features compared to Kepler. Kepler itself was a natural evolution of Fermi, further building on NVIDIA’s SM design and Direct3D 11 functionality. Maxwell in turn is a smaller evolution yet.

From a graphics/gaming perspective there will not be any changes. Maxwell remains a Direct3D 11.0 compliant design, supporting the base 11.0 functionality along with many (but not all) of the features required for Direct3D 11.1 and 11.2. NVIDIA as a whole has not professed much of an interest in being 11.1/11.2 compliant – they weren’t in a rush on 10.1 either – so this didn’t come as a great surprise to us. Nevertheless it is unfortunate, as NVIDIA carries enough market share that their support (or lack thereof) for a feature is often the deciding factor whether it’s used. Developers can still use cap bits to access the individual features of D3D 11.1/11.2 that Maxwell does support, but we will not be seeing 11.1 or 11.2 becoming a baseline for PC gaming hardware this year.

On the other hand this means that for the purposes of the GeForce family the GTX 750 series will fit nicely into the current stack, despite the architectural differences. Since the consumer perspective is largely the same as the graphics perspective, Maxwell does not have any features that will explicitly set it apart from Kepler. All 700 series parts will support the same features, including NVIDIA ecosystem features such as GameWorks, NVENC, and G-Sync, so Maxwell is fully aligned with Kepler in that respect.

At a lower level the feature set has only changed to a slightly greater degree. I/O functionality is identical to Kepler, with 4 display controllers backing NVIDIA’s capabilities. HDMI 1.4 and DisplayPort 1.2 functionality join the usual DVI support, with Maxwell being a bit early to support any next generation display connectivity standards.

Video Encode & Decode

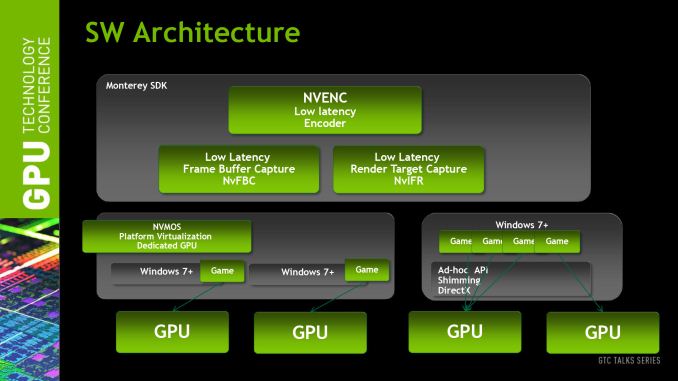

Meanwhile turning our gaze towards video encoding and decoding, we find one of the few areas that has received a feature upgrade on Maxwell. NVENC, NVIDIA’s video encoder, has received an explicit performance boost. NVIDIA tells us that Maxwell’s NVENC should be 1.5x-2x faster than Kepler’s NVENC, or in absolute terms capable of encoding speeds 6x-8x faster than real time.

For the purposes of the GTX 750 series, the impact of this upgrade will heavily depend on how NVENC is being leveraged. For real time applications such as ShadowPlay and GameStream, which by their very definition can’t operate faster than real time, the benefit will primarily be a reduction in encoding latency by upwards of several milliseconds. For offline video transcoding using utilities such as Cyberlink’s MediaEspresso, the greater throughput should directly translate into faster transcoding.
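To put rough numbers on both cases, the sketch below works through the quoted figures. The 6x-8x and 1.5x-2x multiples are NVIDIA’s claims as reported above; the 2-hour clip, the 60 fps stream, and the idea that per-frame encode time is simply the frame interval divided by the speed multiple are our own simplifying assumptions, not a description of how NVENC is actually pipelined.

```python
# Back-of-the-envelope NVENC arithmetic.
# Quoted by NVIDIA: Maxwell encodes 6x-8x faster than real time,
# which is 1.5x-2x faster than Kepler.
# Assumed for illustration: clip length, frame rate, and that per-frame
# encode time scales as (frame interval / speed multiple).

MAXWELL_SPEED = (6.0, 8.0)  # multiples of real time (quoted)
MAXWELL_GAIN = (1.5, 2.0)   # speedup over Kepler (quoted)

# Implied Kepler baseline, assuming the low ends and high ends pair up.
kepler_speed = [s / g for s, g in zip(MAXWELL_SPEED, MAXWELL_GAIN)]


def transcode_minutes(clip_minutes: float, speed_multiple: float) -> float:
    """Wall-clock minutes to encode a clip at N times real time."""
    return clip_minutes / speed_multiple


def frame_encode_ms(fps: float, speed_multiple: float) -> float:
    """Milliseconds spent encoding one frame at N times real time."""
    return 1000.0 / (fps * speed_multiple)


if __name__ == "__main__":
    clip = 120.0  # assumed 2-hour source clip, in minutes
    fps = 60.0    # assumed stream frame rate

    print("Implied Kepler speed (x real time):", kepler_speed)
    for label, speed in [("Kepler (implied)", kepler_speed[0]),
                         ("Maxwell low", MAXWELL_SPEED[0]),
                         ("Maxwell high", MAXWELL_SPEED[1])]:
        print(f"{label}: {transcode_minutes(clip, speed):.1f} min transcode, "
              f"{frame_encode_ms(fps, speed):.2f} ms per frame")
```

Under those assumptions the implied Kepler baseline works out to 4x real time at either end of the range, so a 2-hour transcode would drop from roughly 30 minutes to 15-20 minutes. Per-frame encode time at 60 fps would fall from about 4.2 ms to 2.1-2.8 ms; at 30 fps the same arithmetic gives a saving of roughly 3-4 ms, in line with the "several milliseconds" figure.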

The bigger impact of this will be felt in mobile and server applications, when GM107 makes its introduction in those product lines. In the case of mobile usage the greater performance of Maxwell’s NVENC block directly corresponds with lower power usage, which will reduce the energy costs of using it when operating off of a battery. Meanwhile in server applications the greater performance will allow a sliding scale of latency reductions and an increase in the number of client sessions being streamed off of a single GPU, which for NVIDIA’s purposes means they will get to increase the client density of their GRID products.
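As a rough illustration of both effects, the sketch below treats the encoder as a shared resource measured in multiples of real time. The assumptions are ours: each client stream is taken to cost exactly 1x real time of encoder throughput unless stated otherwise, the encoder block is assumed to draw roughly constant power while active, and rendering, memory, and other GPU limits are ignored entirely. Real GRID session counts depend on far more than NVENC.

```python
import math

# Illustrative only: the per-session load and the constant-active-power
# model are assumptions, not NVIDIA figures.


def max_sessions(speed_multiple: float, per_session_load: float = 1.0) -> int:
    """Streams one encoder could sustain if each costs a fixed share.

    speed_multiple: encoder throughput in multiples of real time.
    per_session_load: throughput one stream consumes (1.0 = one
        real-time stream at the resolution the multiple was quoted for).
    """
    return math.floor(speed_multiple / per_session_load)


def relative_active_time(gain: float) -> float:
    """Fraction of the old busy time needed for the same work.

    With roughly constant active power, this is also the relative
    encoder energy per frame.
    """
    return 1.0 / gain


if __name__ == "__main__":
    for speed in (6.0, 8.0):  # quoted Maxwell range
        print(f"{speed:.0f}x real time -> up to {max_sessions(speed)} streams")
    for gain in (1.5, 2.0):   # quoted gain over Kepler
        print(f"{gain}x faster -> {relative_active_time(gain):.0%} of the "
              f"previous active time per frame")
```

Read this way, a 1.5x-2x faster encoder finishes each frame in one-half to two-thirds of the time, which is where the battery savings would come from, while the 6x-8x headroom is what a server could divide among clients or spend on lower per-stream latency.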

Speaking of video, decoding is also receiving a bit of a lift. Maxwell’s VP video decode block won’t feature full H.265 (HEVC) support, but NVIDIA is telling us that they will offer partial hardware acceleration, relying on a mix of software and hardware to decode H.265. We had been hoping for full hardware support on Maxwell, but it looks like it’s a bit premature for that in a discrete GPU. The downside to this is that the long upgrade cycle for video cards – many users are averaging 4 years these days – means there’s a good chance that GTX 750 owners will still be on their GTX 750 cards when H.265 content starts arriving in force, so it will be interesting to see just how much of the process NVIDIA can offload onto their hardware as it stands.

H.265 aside, video decoding overall is getting faster and lower power. NVIDIA tells us that decoding is getting an 8x-10x performance boost due to the implementation of a local decoder cache and an increase in memory efficiency for video decoding. As for power consumption, alongside the aforementioned performance gains NVIDIA has implemented a new power state called “GC5” specifically for low usage tasks such as video playback. Unfortunately NVIDIA isn’t telling us much about how GC5 works, but as we’ll see in our benchmarks there is a small but distinct improvement in power consumption in the video decode process.

177 Comments

kwrzesien - Tuesday, February 18, 2014

Seems coincidental that Apple is going to use TSMC for all production of the A8 chip with Samsung not ready yet, maybe Apple is getting priority on 20nm? Frankly what nVidia is doing with 28nm is amazing, and if the yields are great on this mature process maybe the price isn't so bad on a big die. Also keep in mind the larger the die the more surface area there is to dissipate heat, Haswell proved that moving to a very dense and small die can create even more thermal limitations.

DanNeely - Tuesday, February 18, 2014

Wouldn't surprise me if they are; all the fab companies other than Intel are wailing about the agonizingly high costs of new process transitions and Apple has a history of throwing huge piles of its money into accelerating the build up of supplier production lines in trade for initial access to the output.

dylan522p - Tuesday, February 18, 2014

Many rumors point to Apple actually making a huge deal with intel for 14nm on the A8.

Mondozai - Wednesday, February 19, 2014

Maybe 14 for the iPhone to get even better power consumption and 20 for the iPad? Or maybe give 14 nm to the premium models of the iPad over the mini to differentiate further and slow/reverse cannibalization.

Stargrazer - Tuesday, February 18, 2014

So, what about Unified Virtual Memory?

That was supposed to be a major new feature of Maxwell, right? Is it not implemented in the 750s (yet - waiting for drivers?), or is there currently a lack of information about it?

A5 - Tuesday, February 18, 2014

That seems to be a CUDA-focused feature, so they probably aren't talking about it for the 750. I'm guessing it'll come up when the higher-end parts come out.

Ryan Smith - Thursday, February 20, 2014

Bingo. This is purely a consumer product; the roadmaps we show are from NV's professional lineup, simply because NV doesn't produce a similar roadmap for their graphics lineup (despite the shared architecture).

dragonsqrrl - Tuesday, February 18, 2014

"Meet The Reference GTX 750 Ti & Zotac GTX 750 Series"

"This is the cooler style that most partners will mimic, as the 60W TDP of the GTX 650 Ti does not require a particularly large cooler"

You mean 750 Ti right?

chizow - Tuesday, February 18, 2014

The performance and efficiency of this chip and Maxwell is nothing short of spectacular given this is still on 28nm. Can't wait to see the rest of the 20nm stack.

Ryan, are you going to replace the "Architectural Analysis" at some point? Really looking forward to your deep-dive on that, or is it coming at a later date with the bigger chips?

dgingeri - Tuesday, February 18, 2014

In the conclusion, the writer talks about the advantages of the AMD cards, but after my experiences with my old 4870X2, I'd rather stick with Nvidia, and I know I'm not alone. Has AMD improved their driver quality to a decent level yet?