HGST And Mellanox Show Off SAN Fabric Backed By Phase Change Memory

by Billy Tallis on August 14, 2015 8:00 AM EST

Posted in: Storage, HGST, Mellanox, PCM, InfiniBand

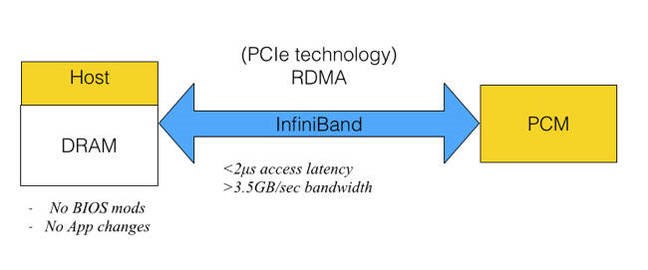

It seems that NAND flash memory just isn't fast enough to show off the full performance of the latest datacenter networking equipment from Mellanox. They teamed up with HGST at Flash Memory Summit to demonstrate a Storage Area Network (SAN) setup that used Phase Change Memory to attain speeds that are well out of reach of any flash-based storage system.

Last year at FMS, HGST showed a PCIe card with 2GB of Micron's Phase Change Memory (PCM). That drive used a custom protocol to achieve lower latency than possible with NVMe: it could complete a 512-byte read in about 1-1.5µs, and delivered about 3M IOPS for queued reads. HGST hasn't said how the PCM device in this year's demo differs, if at all. Instead, they're exploring what kind of performance is possible when accessing the storage remotely. Their demo has latency of less than 2µs for 512-byte reads and throughput of 3.5GB/s using Remote Direct Memory Access (RDMA) over Mellanox InfiniBand equipment. By comparison, NAND flash reads take tens of microseconds without counting any protocol overhead.
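To put those figures in perspective, here is a rough back-of-the-envelope sketch in Python. It assumes the 3M IOPS figure was measured with 512-byte reads (the article only gives the read size for the latency number) and uses Little's Law to estimate how many requests would need to be in flight to sustain that rate; the function names are illustrative only, not part of any HGST or Mellanox tooling.

```python
# Back-of-the-envelope arithmetic for the figures quoted above.
# Assumption: the 3M IOPS number refers to 512-byte reads.

def bandwidth_bytes_per_s(iops: float, io_size_bytes: int) -> float:
    """Payload bandwidth implied by an IOPS figure at a fixed I/O size."""
    return iops * io_size_bytes


def outstanding_requests(iops: float, latency_s: float) -> float:
    """Little's Law: mean requests in flight = throughput x mean latency."""
    return iops * latency_s


if __name__ == "__main__":
    iops = 3_000_000      # queued reads on last year's PCIe card
    read_size = 512       # bytes (assumed for the IOPS figure)

    bw = bandwidth_bytes_per_s(iops, read_size)
    print(f"Implied payload bandwidth: {bw / 1e9:.3f} GB/s")

    # Latency range quoted for a single 512-byte read: about 1-1.5 microseconds.
    for latency_us in (1.0, 1.5):
        depth = outstanding_requests(iops, latency_us * 1e-6)
        print(f"At {latency_us} us per read: ~{depth:.1f} requests in flight")
```

Under these assumptions, small-block reads at 3M IOPS move only about 1.5GB/s of payload, so the 3.5GB/s throughput figure for the remote demo presumably reflects larger transfers or a different workload; the source does not say which.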

This presentation from February 2014 provides a great summary of where HGST is going with this work. It's been hard to tell which non-volatile memory technology is going to replace NAND flash. Just a few weeks ago Intel and Micron announced their 3D XPoint memory, which immediately took its place as one of the most viable alternatives to NAND flash without the companies even officially saying what kind of memory cell it uses. Rather than place a bet on which new memory technology would pan out, HGST is trying to ensure that they're ready to exploit the winner's advantages over NAND flash.

None of the major contenders are suitable for directly replacing DRAM, either due to limited endurance (even if it is much higher than flash), poor write performance, or vastly insufficient capacity. At the same time, ST-MRAM, CBRAM, PCM, and others are all much faster than NAND flash, and none of the current interfaces other than a DRAM interface can keep pace. HGST chose to develop a custom protocol over standard PCIe as more practical than trying to make a PCM SSD that works as a DIMM connected to existing memory controllers.

Last year's demo showed that they were ready to deliver better-than-flash performance as soon as the new memory technology becomes economical. This year's demo shows that they can retain most of that performance while putting their custom technology behind an industry-standard RDMA interface to create an immediately deployable solution, and in principle it can all work just as well for 3D XPoint memory as for Phase Change Memory.

19 Comments

boeush - Friday, August 14, 2015 - link

My understanding of DRAM cache on flash drives (not sure about HDDs) is that it's used to hold the page mapping tables for allocation, GC, wear leveling, etc. - i.e. for use by the controller. That's what makes the batteries/supercaps necessary on the enterprise drives: if a power outage wipes the cache in the middle of GC, the entire drive is potentially trashed. Whereas losing data mid-flight on its way to storage is not catastrophic, and can't be prevented even with on-drive power backup since the drive is not entirely in control of what gets written when, and how much at a time (that scenario is better addressed by a UPS.)

For the controller, I'd assume it would be important to minimize access latencies to its data structures. The microsecond scale access times on this PCM do not compare favorably to the nanosecond response times of DRAM (never mind SRAM); substituting PCM for DRAM in this case will have a big negative impact on controller performance, therefore. That would particularly matter on high-performance drives...

boeush - Friday, August 14, 2015 - link

Crap... The above was meant as a reply to Billy Tallis. Also, please forgive autocorrect-induced spelling snafus (I'm posting this from my Android phone.)

Billy Tallis - Sunday, August 16, 2015 - link

Some SSD controllers have made a point of emphasizing that they don't store user data in the external DRAM. If you don't have battery/capacitor backup, this is a very good idea. It's likely that some controllers have used the DRAM as a write cache in a highly unsafe manner, but I don't know which off the top of my head.

The data structures usually used to track what's in the flash are very analogous to what's used by journaling file systems, so unexpected power failure is highly unlikely to result in full drive corruption. Instead, you'll get rolled back to an earlier snapshot of what was on the drive, which will be mostly recoverable.

The 1-2 microsecond numbers refer to access latency for the whole storage stack. For the direct access of the SSD controller to the PCM, read latencies are only about 80 nanoseconds, which is definitely in DRAM territory. It's the multiple PCIe transactions that add the serious latency.

bug77 - Monday, August 17, 2015 - link

Depends on how you use it. With such low latencies, game makers or even Microsoft may decide to host all their code themselves and only make it accessible to you on demand. Yes, I know this is far-fetched, but technically it is possible. Plus, in the 90s online verification of a game key was far-fetched and today, in some instances, we can't even play single player games without an active connection.

Alexvrb - Friday, August 14, 2015 - link

"None of the major contenders are suitable for directly replacing DRAM, either due to limited endurance (even if it is much higher than flash), poor write performance, or vastly insufficient capacity."

Wait, if a "NAND replacement" technology has vastly insufficient capacity to replace DRAM then how is it going to have sufficient capacity to replace NAND?

Anyway even the latest interfaces will be out of date when devices start coming out with these next-gen NVM solutions, even if it's used just initially as a very large (and safe) cache to supplement traditional high-capacity NAND. Something like 2TB of NAND and 8GB of PCM/XPoint cache, for example.

boeush - Saturday, August 15, 2015 - link

Interestingly, PCIe4 (with double the PCIe3 bandwidth) is due to deploy by this time next year. Combined with the NVMe protocol, it just might suffice for the next few years...

Peeping Tom - Sunday, August 16, 2015 - link

Sadly they are saying it could take until 2017 before PCIe4 is finalized: http://www.eetimes.com/document.asp?doc_id=1326922

Billy Tallis - Sunday, August 16, 2015 - link

What I had in mind with the "vastly insufficient capacity" comment was stuff like Everspin's ST-MRAM, which checks all the boxes except capacity, where they're just now moving up to 64Mb from the 16Mb chips that are $30+.

None of the existing interfaces will be particularly well-suited for these new memory technologies, but what HGST is doing is probably pretty important. People will want to reap as much benefit as they can get out of these new memories without requiring a whole new kind of PHY to be added to their CPU die.

SunLord - Monday, August 17, 2015 - link

So we can have three non-volatile stages of storage now? Going from volatile memory, aka DRAM, to a small, super fast PCM cache that acts as a buffer for fast, medium-to-large active NAND-based storage arrays, followed up with a massive mechanical array for archival/cold storage data.