Mobile Benchmark Cheating: When a SoC Vendor Provides It As A Service

by Andrei Frumusanu on April 8, 2020 10:00 AM EST

Mobile benchmark cheating has a long history that goes far back for the industry (well – at least in smartphone industry years), and has also been a controversial coverage topic at AnandTech for several years now.

I remember back in 2013 when I tipped off Brian and Anand about some of the shenanigans Samsung was doing on the GPU of Exynos chipsets on the Galaxy S4, only for the thing to blow up into a wider analysis of the practice amongst many of the mobile vendors back then – with all of them being found guilty. The Samsung case eventually even ended up with a successful $13.4m class-action lawsuit judgment against the company – with yours truly and AnandTech even being cited in the court filing.

The naming and shaming did work over the following years, as vendors quickly abandoned such methods out of fear of media backlash – the negatives far outweighed the positives.

In recent years, however, we saw a big resurgence of such methods, particularly from Chinese vendors. Most prominently for our more western audience, this happened with Huawei just a couple of generations ago, with mechanisms that essentially disabled thermal throttling of the phones – letting more demanding benchmarks have the SoC burn through at maximum performance until thermal shutdown. The naming and shaming here again helped, as the company transitioned from employing invisible mechanisms to something that was a lot more honest and transparent, and a lot less problematic for follow-up devices.

The problem is, the Chinese vendor market is still huge, and we’re not able to dissect every single device and vendor out there. Cheating in benchmarks here continued to be a very real problem and a commonplace practice. Huawei’s rationale back then was that they felt they needed to do it because others did it as well – and they didn’t want to lose face to the competition with regard to the marketing power of benchmark numbers.

The one big difference here however is that there’s always been somewhat of a firewall in our coverage between what a device vendor did, and what chip vendors enabled them to do, and that’s where we come to MediaTek’s behavior over the last few years. In most past cases we always blamed the device vendors for cheating as it had been their mechanisms and initiative – we hadn’t had evidence of enablement by chipset vendors, at least until now.

Helio P95 outperforming Dimensity 1000L?!

The whole thing came to my attention when I first received Oppo’s new Reno3 Pro – the European version with MediaTek’s Helio P95 chipset. The phone surprised me quite a bit at first, as in system benchmarks such as PCMark it was punching quite above its weight and above what I had expected out of a Cortex-A75 class SoC. Things got weirder when I received a Chinese Reno3 with the MediaTek Dimensity 1000L – a much more powerful and recent chip, but one which for some reason performed worse than its P95 sibling. It’s when you see such odd results that alarm bells go off, as something is quite amiss.

The whole thing ended up as quite the trip down the rabbit hole.

Real Performance vs Cheated Performance

(Oppo Reno3 Pro P95)

Naturally, and unfortunately, my first thought was that there must be some sort of cheating going on. We reached out to our friends at UL for an anonymised version of PCMark – the teams there have in the past also been a great help in deterring cheating behaviour in the industry. To no major surprise, the two versions of the benchmark did differ in their scores – but I was still aghast at the magnitude of the score delta: a 30% difference in the overall score, with up to a 75% difference in important subtests such as the writing workload.
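The comparison above boils down to a relative uplift per subtest between the public build (which the phone detects) and the anonymised build (which it doesn't). Here is a minimal Python sketch of that arithmetic. The scores are made-up placeholders chosen to mirror the percentages quoted, not the actual measurements, and the function names and the 10% threshold are hypothetical.

```python
def uplift_percent(public_score: float, anonymised_score: float) -> float:
    """Relative gain of the public (detected) build over the anonymised one, in percent."""
    if anonymised_score <= 0:
        raise ValueError("anonymised_score must be positive")
    return (public_score - anonymised_score) / anonymised_score * 100.0


def flag_suspicious(public: dict, anonymised: dict, threshold_percent: float = 10.0) -> dict:
    """Return the subtests whose uplift exceeds the threshold, mapped to that uplift."""
    flagged = {}
    for name, anon_score in anonymised.items():
        gain = uplift_percent(public[name], anon_score)
        if gain > threshold_percent:
            flagged[name] = round(gain, 1)
    return flagged


# Placeholder scores for illustration only (NOT measurements from the article).
anonymised = {"overall": 7000, "writing": 6000, "web": 6500}
public = {"overall": 9100, "writing": 10500, "web": 6700}

suspicious = flag_suspicious(public, anonymised)
print(suspicious)
```

Run-to-run variance of a few percent is normal, which is why the sketch only flags deltas above a threshold; where exactly to put that threshold is a judgment call.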

A bit of background on PCMark and why we use it: it’s not really a benchmark that’s usually targeted for detection and cheating, because it’s a system benchmark that tries to be representative of real-world workloads and the responsiveness of a device. Whilst the hardware certainly plays a role in the benchmark score, it’s mostly affected by software and mechanisms such as DVFS and schedulers. There’s also the fact that it’s a performance and battery benchmark all in one – if you’re cheating in one aspect of the test by increasing performance, you’re just handicapping yourself on the battery test. It's thus unusual for the benchmark to be manipulated, as you're also shooting yourself in the foot at the same time.

I also have a Snapdragon 765G variant of the Reno3 Pro, the Chinese model of the phone (while they share the same name, they’re still quite different devices). If Oppo were to be the cause of this mechanism, surely this device would also detect and cheat in PCMark. But actually that’s not the case: the device seemingly performs in benchmarks just as well as it does in any other app.

Update April 16th: Oppo had reached out to us regarding the whitelist; the company had removed this in the first public OTA of the phone, however the settings persisted in the cache of the device. Resetting the phone to factory defaults on the new firmware removes the detection, and the abnormal benchmark scores are gone.

Digging a bit more for information on the MediaTek versions of the Reno3, the whole cheating mechanism had seemingly been sitting in plain sight to users for several years:

Reno3 Pro - "Sports Mode" Benchmark Whitelist

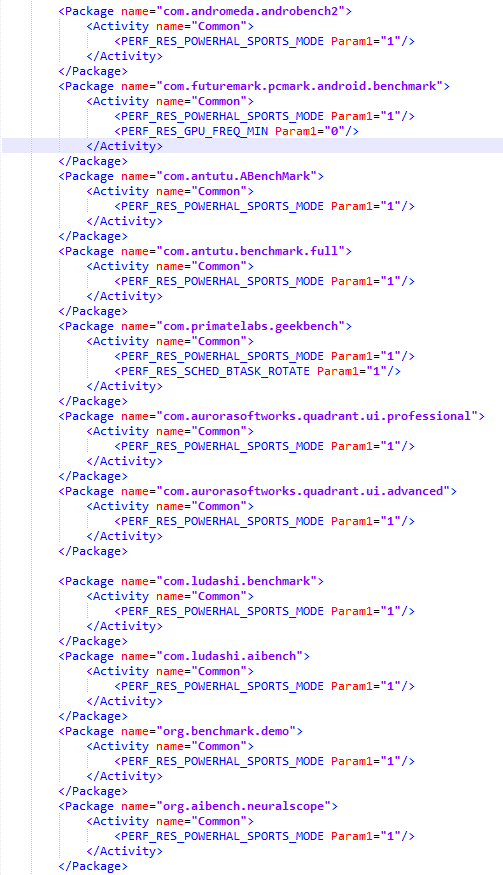

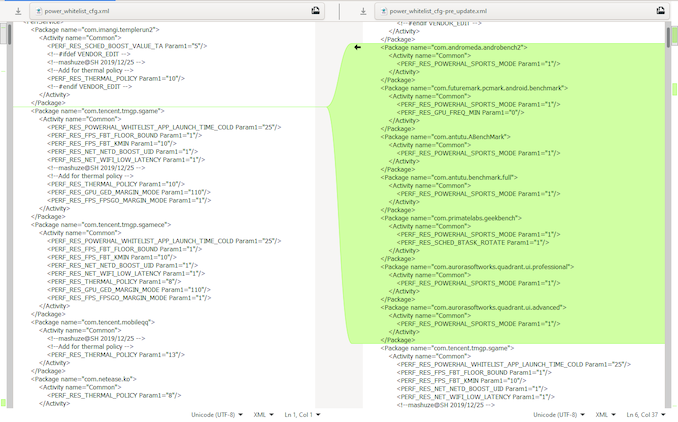

In the device’s firmware files, there’s a power_whitelist_cfg.xml file, most commonly found in the /vendor/etc folder of the phones. Inspecting the file, we find what seems to be a list of popular applications with various power management tweaks applied to them and, lo and behold, also a list of various benchmarks. We find the APK ID for PCMark, and we see that there are some power management hints configured for it, a common one being called “Sports Mode”.

The benchmark list here isn’t very exhaustive but it does contain the most popular benchmarks in the industry today – GeekBench, AnTuTu and 3DBench, PCMark, and some older ones like Quadrant or popular Chinese benchmark 鲁大师 / Master Lu. There’s also a storage benchmark like AndroBench2 which is a bit odd – more details on that later.

The newest additions here are a slew of AI benchmarks including the Master Lu AIBench and the ZTH AI Benchmark test, both of which we actually actively use here at AnandTech to cover those aspects of SoCs and devices.
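To check a pulled copy of the whitelist for benchmark entries, a plain substring search for the package IDs is enough and needs no knowledge of the file's schema. The Python sketch below does that. The package IDs are assumptions taken from the apps' public store listings, the function names are hypothetical, and the sample snippet uses invented element and attribute names, since the real layout is only shown in the screenshots.

```python
from pathlib import Path

# Package IDs below are assumptions taken from the apps' public store
# listings; confirm them against the file you are inspecting.
BENCHMARK_PACKAGES = {
    "PCMark": "com.futuremark.pcmark.android.benchmark",
    "AnTuTu": "com.antutu.ABenchMark",
    "GeekBench": "com.primatelabs.geekbench",
    "AndroBench2": "com.andromeda.androbench2",
}


def find_benchmarks(whitelist_text: str) -> list[str]:
    """Return the benchmark names whose package ID appears anywhere in the text."""
    return [name for name, pkg in BENCHMARK_PACKAGES.items() if pkg in whitelist_text]


def scan_file(path: str) -> list[str]:
    """Read a local copy of the whitelist and report the benchmarks it names."""
    text = Path(path).read_text(encoding="utf-8", errors="replace")
    return find_benchmarks(text)


# Hypothetical snippet: the element and attribute names are invented for the
# demo and do not reproduce the real file's schema.
sample = """
<Package name="com.futuremark.pcmark.android.benchmark" mode="sports"/>
<Package name="com.example.somegame"/>
<Package name="com.antutu.ABenchMark" mode="sports"/>
"""

print(find_benchmarks(sample))
```

In practice `scan_file` would be pointed at a copy of the file taken from a firmware extract or pulled from the device, which may require elevated access depending on the phone.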

Reno3 Pro - Non-public Benchmark Targeting

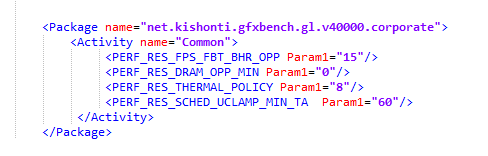

What actually did shock me though was the inclusion of a corporate version of Kishonti’s GFXBench. It didn’t have the sports mode power hint configured in the listing, but obviously it’s altering the default DVFS, thermal and scheduler settings when the app is being used. This is a huge red flag because at this point, we’re not merely talking about the benchmark list targeting general public benchmarks, but also variants that are actually used by only a small group of people – media publications like ourselves included. This is something to keep in mind for later in the piece.

Sports Mode on Reno 3 (Dimensity 1000L)

Sports Mode on Reno 3 Pro (P95)

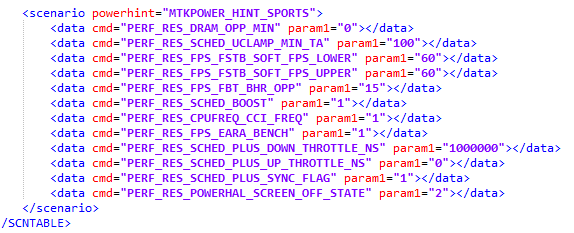

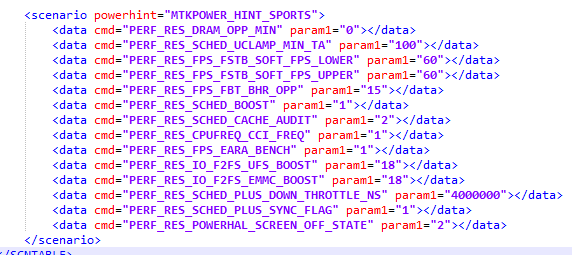

So, what does this “Sports Mode” actually do? For one, it seemingly fixes some DVFS characteristics of the SoC, such as running the memory controller at the maximum frequency all the time. The scheduler is also set up to be a lot more aggressive in its load tracking – meaning it’s easier for workloads to have the CPU cores ramp up in frequency faster and stay there for a longer period of time, applying a few familiar boosting mechanisms.

I’m not sure what the _FPS_ entries do, but given their obvious naming they’re altering something to improve benchmark numbers. The oddest things here are the entries that boost the filesystem speed on F2FS devices, which is probably why benchmarks such as AndroBench are also being targeted.

It's (Mostly) All MediaTek Devices

Here’s the real kicker though: those files aren’t just present on OPPO devices, they’re very much present in a whole slew of phones by various vendors across the spectrum. I was able to get my hands on some firmware extracts of various devices out there (I didn’t actually possess every phone here), with each one of them having a similar power_whitelist_cfg.xml present in their vendor partition, with nigh identical entries of the benchmark listings. Here’s a breakdown:

MediaTek Cheating Devices & Benchmarks

| Vendor | Oppo | Oppo | Oppo | Vivo | Xiaomi | Realme | iVoomi | Sony | |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| Device | Reno Z | F15 | F9 Pro | S1 | Note 8 Pro | C3 | i2 Lite | XA1 | |
| SoC | P95 | P90 | P70 | P60 | P65 | G90 | G70 | A22 | P20 |
| AndroBench2 | ✓ | * | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |
| PCMark | ✓ | * | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |
| Antutu | ✓ | * | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |
| Antutu 3DBench | ✓ | * | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |
| GeekBench | ✓ | * | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |
| Quadrant | ✓ | * | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |
| Quadrant Professional | ✓ | * | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |
| 鲁大师 / Master Lu | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | |
| 鲁大师 / AIMark | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | ✗ | |
| AI Benchmark (ZTH) | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | ✗ | |
| NeuralScope Benchmark | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✗ | ✗ | |
| GFXBench 4 Corporate | ✓ | ✗ | ✗ | ✓ | ✓ | ✓ | ✗ | ✗ | |

* Present but commented out
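A comparison like this one can be automated across several firmware extracts by classifying each package ID as active, commented out, or absent. The Python sketch below is one way to do it, under the assumption that "commented out" means the entry sits inside an XML comment. The function names, device labels and sample contents are hypothetical placeholders, and the package ID is an assumption from the app's public store listing.

```python
import re

# Assumption: "commented out" means the entry sits inside an XML comment.
XML_COMMENT = re.compile(r"<!--.*?-->", re.DOTALL)


def classify(package: str, xml_text: str) -> str:
    """Return "✓" for an active entry, "*" for a commented-out one, "✗" if absent."""
    active_text = XML_COMMENT.sub("", xml_text)
    if package in active_text:
        return "✓"
    if package in xml_text:
        return "*"
    return "✗"


def presence_matrix(packages: dict[str, str], dumps: dict[str, str]) -> dict[str, dict[str, str]]:
    """Map benchmark name -> device label -> marker, one row per benchmark."""
    return {
        bench: {device: classify(pkg, text) for device, text in dumps.items()}
        for bench, pkg in packages.items()
    }


# Hypothetical inputs: device labels and file contents are placeholders.
packages = {"PCMark": "com.futuremark.pcmark.android.benchmark"}
dumps = {
    "device_a": '<Package name="com.futuremark.pcmark.android.benchmark"/>',
    "device_b": '<!-- <Package name="com.futuremark.pcmark.android.benchmark"/> -->',
    "device_c": '<Package name="com.example.app"/>',
}

matrix = presence_matrix(packages, dumps)
for bench, row in matrix.items():
    print(bench, row)
```

Stripping comments before searching is what separates an active entry from one that merely survives in the file as a leftover.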

What’s shocking here is just the wide variety of devices that this is present on. The oldest device here is a Sony XA1 with a P20 from 2016, indicating that this has possibly been around for some time. That device also seemingly had the least “complete” list of benchmarks, notably lacking the newer AI tests.

The fact that the Sony had this in its files is most concerning, as it should be a vendor that’s “clean” and avoids such practices. What’s clear here is that this mechanism isn’t stemming from the individual vendors, but originates from MediaTek and is integrated into the SoC’s BSP (Board Support Package).

Oppo Reno3 Pro (P95) - New Firmware vs Initial Firmware (Listings gone)

What’s even more suspicious, and we’re very lucky to have caught this, is that these listings are seemingly in the process of being hidden. I had extracted the files out of my Reno3 Pro on its initial out-of-the-box firmware. Over the last few weeks OPPO had pushed a firmware update to the phone – and when at some point I checked something again in the file, I was surprised to see that the benchmark entries had disappeared.

Did the mechanism get disabled? Did they stop cheating? Unfortunately, no. I don’t know where the entries have been moved to now, but the phone still very much triggered its Sports Mode in the benchmarks, with the same large performance boost. The entries weren’t removed, they were just hidden away somewhere else.

Update April 16th: Oppo had reached out to us regarding the whitelist; the company had removed this in the first public OTA of the phone, however the settings persisted in the cache of the device. Resetting the phone to factory defaults on the new firmware removes the detection, and the abnormal benchmark scores are gone.

It's to be noted that seemingly Oppo wasn't fully aware of the mechanism - and there was confusion as to how to properly disable it. This indicates that MediaTek has this mechanism enabled by default in their BSP.

Reaching Out To MediaTek & Their Response

We were extremely concerned about all these findings, and we reached out to MediaTek several weeks ago. We explained our findings, and the concerns we had about a SoC vendor actually providing such a mechanism. We recently finally got an official response from them, quoted as follows:

MediaTek Statement for AnandTech

MediaTek follows accepted industry standards and is confident that benchmarking tests accurately represent the capabilities of our chipsets. We work closely with global device makers when it comes to testing and benchmarking devices powered by our chipsets, but ultimately brands have the flexibility to configure their own devices as they see fit. Many companies design devices to run on the highest possible performance levels when benchmarking tests are running in order to show the full capabilities of the chipset. This reveals what the upper end of performance capabilities are on any given chipset.

Of course, in real world scenarios there are a multitude of factors that will determine how chipsets perform. MediaTek’s chipsets are designed to optimize power and performance to provide the best user experience possible while maximizing battery life. If someone is running a compute-intensive program like a demanding game, the chipset will intelligently adapt to computing patterns to deliver sustained performance. This means that a user will see different levels of performance from different apps as the chipset dynamically manages the CPU, GPU and memory resources according to the power and performance that is required for a great user experience. Additionally, some brands have different types of modes turned on in different regions so device performance can vary based on regional market requirements.

We believe that showcasing the full capabilities of a chipset in benchmarking tests is in line with the practices of other companies and gives consumers an accurate picture of device performance.

The statement is generally disappointing, but let’s go over a few key points that the company is trying to make.

The statement tries to say that by forcing the various configurable knobs, the benchmark figures will better represent the hardware capabilities of the SoC. In a sense, this is actually true and it’s been a contentious talking point regarding the whole benchmark cheating debacle over the years with various vendors. It’s only when a vendor suddenly opens up otherwise unattainable performance states in these benchmarks that the argument isn't valid anymore. At least at first glance, that doesn’t appear to be the case for MediaTek – although I don’t have more detailed technical information as to what some of the "Sports Mode" configuration options do.

The problem with that argument, though, is that it falls apart when the cheating targets not only benchmarks that test the actual hardware components of a SoC – like how GeekBench tests CPU speed or how GFXBench checks how fast a GPU can be – but also benchmarks which actively try to be user experience benchmarks, such as PCMark. This is a real-world mimicking workload that tries to convey the responsiveness of a phone as a whole, not just the chipset.

The fact that MediaTek cheats in such a test goes directly against the notion in their second paragraph of the chipsets offering optimized performance in the real world. If that were the case, then wouldn’t it be better to actually let the chipset and software honestly demonstrate this? What does cheating in storage benchmarks and filesystems have to do with the chipset’s capabilities?

MediaTek’s claim of vendors offering dedicated performance modes is correct. Most notably, at least for vendors such as Huawei, this had been introduced as a direct result of us calling them out on the default opaque cheating behavior of their devices.

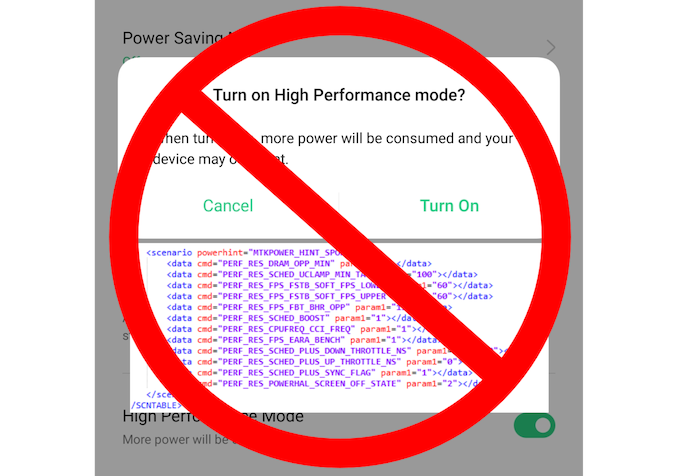

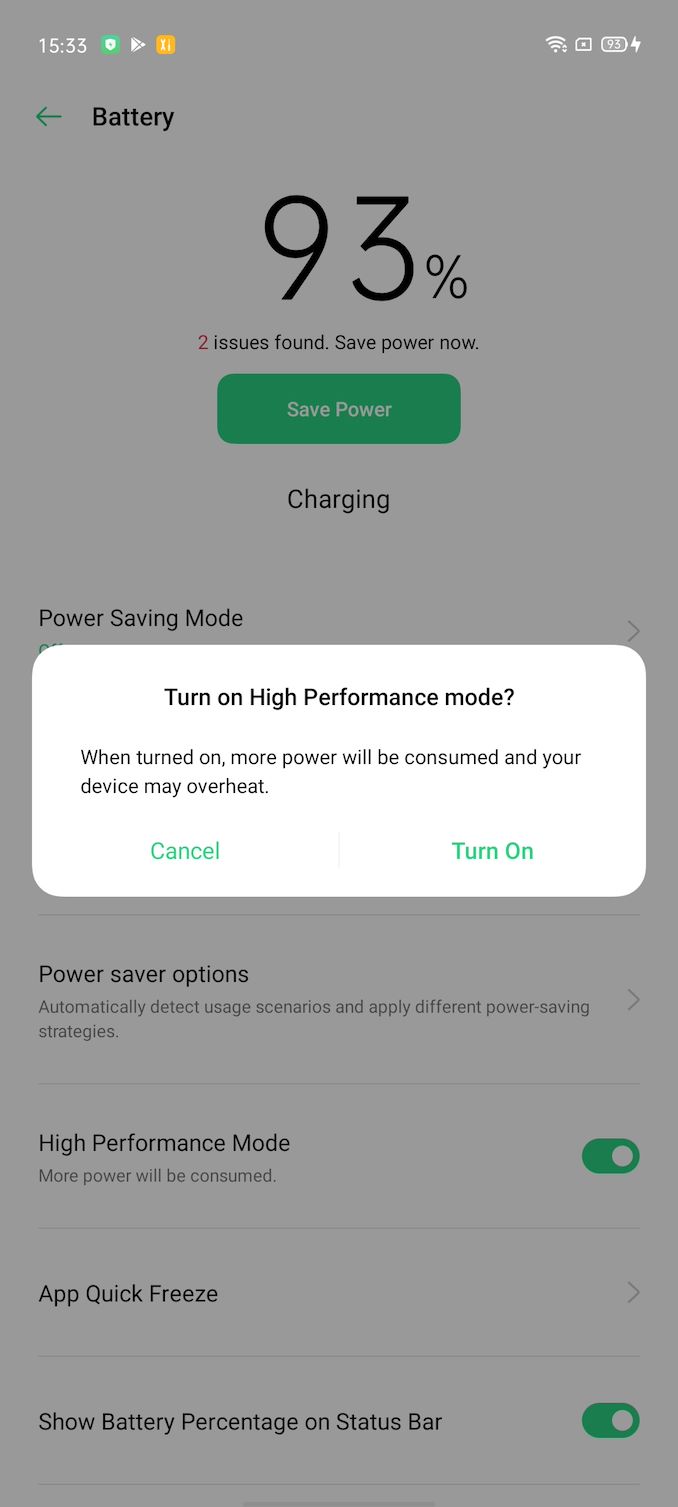

High Performance Mode Prompt on OPPO devices.

On the Oppo devices, and many other Chinese vendor devices, there is a “High Performance Mode” option in the settings. This actually differs quite a bit from the usual “High Performance” modes we’re used to from vendors such as Samsung or more lately Huawei, in that this is essentially just a switch to have the DVFS and performance tunables go bonkers. It’s also present in Snapdragon phones, and we had talked about it in our review of the Reno 10x last year. The phone essentially goes into a high-power mode throwing away any attempt to be efficient; it’s a nonsensical mode that is unusable in every-day use-cases beyond getting high benchmark scores.

The thing is – we as hopefully educated users, and MediaTek as a SoC vendor – should not care about these operating modes.

I still view it as a good compromise between delivering the phones in an honest “default” state, and still giving the option for people (and reviewers) out there to achieve unrestricted, super high benchmark figures if they so desire. The difference here is the transparency of the mechanism – Oppo for example outright tells you your device will overheat. MediaTek’s benchmark detection on the other hand is hidden.

MediaTek also refers to “market requirements” making them do this and it being an “industry standard”, and unfortunately that’s again true and addresses the core of the issue.

These mechanisms wouldn’t exist if there weren’t a demand by vendors for MediaTek to provide such solutions. From MTK’s perspective, they’re just trying to satisfy a customer’s needs and make them happy. There’s the question of who actually came first – was it MTK developing the detection on their own, or was it some customer that demanded it from them at some point in the past?

Lacking evidence of other SoC vendors out there enabling similar mechanisms for the device vendors, what’s clear is that MediaTek should just have stayed out of the mess, as they have more to lose than there is to gain.

All that’s been achieved now is the impression that the company’s chipset software isn’t optimized enough to be able to deliver consistent performance and efficiency by default, with it instead needing a manual push to be able to properly match their benchmark expectations of the chipsets.

I’ve certainly lost a lot of confidence in the figures, and in general I’m just more skeptical of the benchmark figures I’m running – particularly at a time where I was excited to see MediaTek come back to the high end with the Dimensity 1000 (which is seemingly a very good chipset – review to follow up in the future).

With the cat out of the bag and with the evidence out there, I’m sure other media with access to more MediaTek devices will be able to check whether they’re cheating or not. Pointing and shaming has worked in the past for Samsung and other vendors, and it worked for Huawei’s misjudgments a few years back – both being on a more correct path now. I just hope MediaTek is able to also correct their trajectory here, take the high road, remove the mechanisms – and say "no" to their customers when they request such a feature again.

111 Comments


FreckledTrout - Wednesday, April 8, 2020

Is there some way to sandbox the benches so that the vendors can't detect the package names ot then change performance settings?

Stuka87 - Wednesday, April 8, 2020

If the tool is open source, one could create a custom build with enough changes to prevent their cheat software from detecting it. In the case of PCMark, thats basically what the anonymised version is that was used here.

NateR100 - Wednesday, April 8, 2020

This isn't even necessary, it just detects it based off the package name. Doesn't sweetest any of the actual workload or tests or anything

NateR100 - Wednesday, April 8, 2020

*test*

tau_neutrino - Friday, April 10, 2020

You can use something like App Cloner that will create a copy of the app with a different package name

psychobriggsy - Wednesday, April 8, 2020

I think one way to change benchmarking phones isn't just to run the benchmark a few times, but to run it until battery is drained, and also publish the battery life. Obviously there isn't time to do that for every benchmark, but maybe a couple would suffice to show there is a cheating issue.

I.e., a phone bumping performance for benchmarks will have a lower battery life than one that isn't, and this will expose it.

Andrei Frumusanu - Wednesday, April 8, 2020

PCMark already is a battery test in itself so they've already shot themselves in the foot here in this instance, and we've already hammered devices which cheat GPU with thermals, either by the phones getting incredibly hot or having issues with long-term performance.

Kangal - Thursday, April 9, 2020

True, but pychobriggsy has a great idea.

For the benchmarks that are not all-encompassing, like GeekBench, we could fully charge the phone and then run the benchmark repeatedly. Then at the end, tally up how many runs it managed. What the average score was. How much the performance declined. The standard deviation. And even add all the bechmark results up for a Total Score.

Obviously, the devices which don't overclock much, they're going to have a much higher Total Score, as they would last much longer and have more benchmarks completed. Not to mention, the standard deviation and the performance drop will be much less, showing a nicer consistent result.

Food for thought.

eldakka - Friday, April 10, 2020

I would also record device temperatures and chart that during this period. In many ways the temperature of the device could be more important than battery longevity in these sorts of tests, to see if the phone becomes too hot to physically hold it in the hand while using it, which sorta defeats the point of a phone if it becomes uncomfortable to hold it in operation.

Stuka87 - Wednesday, April 8, 2020

Thanks for digging into things like this guys. This will most likely not be the last of these cases that you run into. MediaTek's response was certainly disappointing.