Microchip’s New PCIe 4.0 PCIe Switches: 100 lanes, 174 GBps

by Dr. Ian Cutress on June 1, 2020 12:00 PM EST

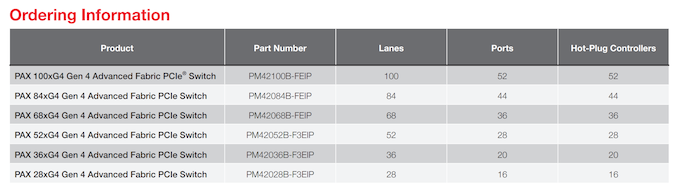

There are multiple reasons to need a PCIe switch. These include expanding PCIe connectivity to more devices than the CPU can support, extending a PCIe fabric across multiple hosts, providing failover support, or increasing device-to-device communication bandwidth in limited scenarios. With the advent of PCIe 4.0 processors and devices such as graphics cards, SSDs and FPGAs, an upgrade from the range of PCIe 3.0 switches to PCIe 4.0 was needed. Microchip has recently announced its new Switchtec PAX line of PCIe switches, offering variants with up to 100 lanes, supporting 52 devices and 174 GBps of switching capability.

Readers not embedded in the enterprise world may remember that a number of PCIe switches have entered the consumer market in the past. Initially we saw devices like the nF200 appear on high-end motherboards like the EVGA SR2, and then the PLX PEX switches on Z77 motherboards, allowing 16-lane CPUs to offer 32 lanes of connectivity. Some vendors even went a bit overboard, offering dual switches and up to 22 SATA ports with an add-in LSI RAID controller alongside four-way SLI connectivity, all through a 16-lane CPU.

Recently, we haven’t seen much consumer use of these big PCIe switches. This is due to a couple of main factors: PLX was acquired by Avago in 2014 in a deal that valued the company at $300m, and seemingly overnight the cost of these switches increased three-fold according to my sources at the time, making them unpalatable for consumer use. The next generation of PEX 9000 switches was, in contrast to the PEX 8000 we saw in the consumer space, feature-laden, with switch-to-switch fabric connectivity and failover support. Avago then purchased Broadcom and renamed itself Broadcom, but the situation is still the same, with the switches focused on the server space, leaving the market ripe for competition. Enter Microchip.

Microchip has been on my radar for a while, and I met with them at Supercomputing 2019. At the time, when asked about PCIe 4.0 switches, I was told ‘soon’. The new Switchtec PAX switches are that line.

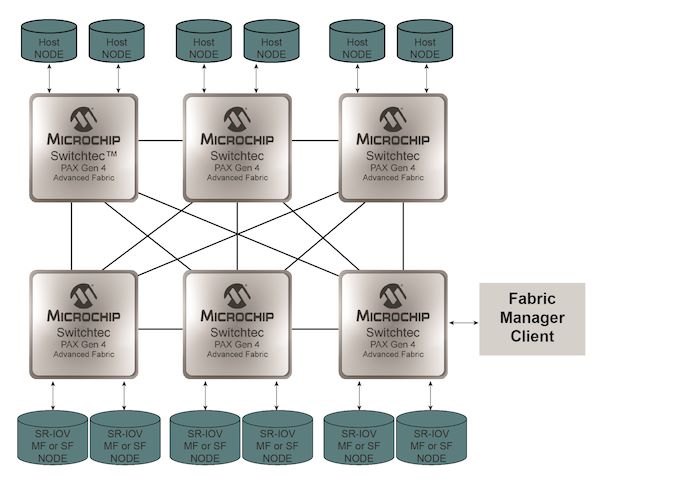

There will be six products, varying from 28-lane to 100-lane support, with bifurcation down to x1. These switches operate in an all-to-all capacity, meaning any lane can be configured as upstream or downstream. Thus if a customer wanted a 1-to-99 configuration, despite the potential bottleneck, it would be possible. The new switches support per-port hot-plug, use low-power SerDes connections, support OCuLink, and can be used with passive, managed, or optical cabling.
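To put the 1-to-99 bottleneck in rough numbers, the sketch below estimates raw per-lane PCIe bandwidth and the resulting oversubscription for a few upstream/downstream splits. It assumes only the standard signalling rates (8 GT/s for PCIe 3.0, 16 GT/s for PCIe 4.0) and 128b/130b encoding. The function names and example splits are illustrative, and the figures are raw link rates before protocol overhead, not Microchip's own throughput numbers.

```python
# Raw PCIe signalling rates in GT/s per lane, per direction.
GT_PER_S = {3: 8.0, 4: 16.0}

# PCIe 3.0 and 4.0 both use 128b/130b line encoding.
ENCODING_EFFICIENCY = 128 / 130


def lane_gbytes_per_s(gen: int) -> float:
    """Approximate GB/s per lane, per direction, before protocol overhead."""
    return GT_PER_S[gen] * ENCODING_EFFICIENCY / 8


def oversubscription(upstream_lanes: int, downstream_lanes: int) -> float:
    """Downstream-to-upstream lane ratio; 1.0 means no lane-count bottleneck."""
    return downstream_lanes / upstream_lanes


if __name__ == "__main__":
    per_lane = lane_gbytes_per_s(4)
    # Hypothetical splits of a 100-lane part, plus the classic 16-to-32 case.
    for up, down in [(1, 99), (16, 84), (16, 32)]:
        print(
            f"x{up} upstream / x{down} downstream: "
            f"{up * per_lane:.1f} GB/s uplink, "
            f"{oversubscription(up, down):.2f}:1 oversubscribed"
        )
```

The ratio only matters when every downstream device talks to the host at once. Device-to-device traffic through the switch does not cross the upstream link.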

Customers for these switches will have access to real-time diagnostics for signaling, as well as fabric management software for the end-point systems. The all-to-all connectivity supports partial chip failure and bypass, along with partial reset features. This makes building a fabric across multiple hosts and devices fairly straightforward, with a variety of topologies supported.

When asked, pricing was not given, which means it will depend on the customer and volume. We can imagine a vendor like Dell or Supermicro, if they aren't tied to fixed contracts for Broadcom switches, looking into these solutions for distributed implementations or storage devices. Some of the second/third tier server vendors I spoke to at Computex were only just deploying PEX 9000-series switches, so perhaps deployment of Gen 4 switches might be more of a 2021/2022 target.

Those interested in Microchip are advised to contact their local representative.

Users looking for a consumer motherboard with a PCIe switch should look at Supermicro’s two new Z490 motherboards. Both use PEX 8747 chips to expand the PCIe offering on Intel’s Comet Lake from 16 lanes to 32 lanes.

Source: Microchip

Related Reading

- Microsemi Announces PCIe 4.0 Switches And NVMe SSD Controller

- ASMedia Preps ASM2824 PCIe 3.0 Switch

- Avago Announces PLX PEX9700 Series PCIe Switches: Focusing on Data Center and Racks

- Supermicro C9Z490-PGW and X9Z490-PG Motherboard Examined

- The Supermicro C9Z390-PGW Motherboard Review: The Z390 Board With PLX and 10GbE

- The ASRock X99 Extreme11 Review: Eighteen SATA Ports with Haswell-E

- ASUS P9X79-E WS Review: Xeon meets PLX for 7x

- MSI Z87 XPower Review: Our First Z87 with PLX8747

- Four Multi-GPU Z77 Boards from $280-$350 - PLX PEX 8747 featuring Gigabyte, ASRock, ECS and EVGA

36 Comments

jeremyshaw - Monday, June 1, 2020 - link

So these have been on their site for a while now (maybe not spec sheets, but most of the other material, iirc). Is there any change? Availability?

Ian Cutress - Monday, June 1, 2020 - link

It looks like they'll now be available. Microchip put up the press release last week.

lightningz71 - Monday, June 1, 2020 - link

It would be interesting to see an AM4 board that was outfitted with a PAX 52xG4 and an X570 chipset. It would be interesting to see how well a 3950 could handle a multi-GPU professional workload with the x16 link connected to the switch and 4 GPUs attached via 4 x8 links from the PAX. You'd still have good IO numbers through the X570, and the CPU connected x4 link for a boot and scratch drive.

brucethemoose - Monday, June 1, 2020 - link

I don't think the small cost savings (and performance penalty) over HEDT would be worth it.

More extreme scenarios are interesting. One could, for example, hang a boatload of GPUs off a single EPYC or Ice Lake board, which is way cheaper (and possibly faster?) than wiring a bunch of 4-8 GPU nodes together.

Jorgp2 - Tuesday, June 2, 2020 - link

HEDT is cheaper than using switches.

The current NVMe 4.0 switch cards are like $400 just for the card, a little more than 3.0 switch cards.

Buying an X299 HEDT platform gives you 50% more lanes and bandwidth, for only ~$400 over a consumer platform. TR4 starts a little higher at ~$800 but gives you 100% more lanes and 200% more bandwidth.

HEDT also has the memory bandwidth to actually saturate all the links at once.

MenhirMike - Monday, June 1, 2020 - link

Yeah, maybe it's a niche of a niche, but currently it's kinda weird trying to build a small-ish storage server: Threadripper 3 would be neat with its 64 PCIe lanes and ECC support, but $1400 for the "lowest-end" 3960X is a tough sell. Ryzen has ECC support (though not validated by AMD), but only 24 PCIe lanes. Threadripper 1 is great since it's dirt cheap now, but you're dealing with a dead-end platform. EPYC seems to be the go-to choice, but even that is expensive.

Of course, an NVMe card with a PCIe switch on it is the probable solution here, but having a "storage server" board/CPU combination for hundreds instead of thousands of dollars would be nice.

supdawgwtfd - Monday, June 1, 2020 - link

What's the need for so many lanes in a storage server?

An 8x slot is plenty for basic storage.

SAS card + expander and away you go.

If you are talking SSD then you want more actual proper lanes and not a switch ideally, so Threadripper/Epyc would be the requirement.

Jorgp2 - Tuesday, June 2, 2020 - link

Or the need for high performance CPUs.

Atom C3000, Xeon D, or Ryzen embedded have enough performance and IO while using much less power.

Slash3 - Monday, June 1, 2020 - link

Look into the Asus Pro WS X570-Ace. It has the two CPU connected slots (x16/0, x8/x8) in addition to a unique third x8 chipset connected slot. Install a 16, 24 or 32-port SATA HBA (e.g., Adaptec ASR-72405 or an LSI 8/16 port + Intel Expander) and you're off to the races. This still leaves you with available onboard NVMe M.2 slots and PCH sourced SATA ports, too, in addition to the two primary PCI Express slots. You could just as easily drop in another HBA and run the GPU at x8, a minimal performance hit at this time.

I've got my system set up to do a bit of everything, but part of that is VMs and file/Plex server duty. I have a 3950X + X570 Taichi which is populated with a GPU, LSI 9211-8i SAS HBA, discrete audio card, three NVMe M.2 drives and 12 SATA drives: four SSDs configured in RAID from the motherboard's ports, and the remaining eight are HDDs attached to the HBA. Works great.

Jorgp2 - Tuesday, June 2, 2020 - link

According to AMD fanboys, every AM4 motherboard already has one of these. To magically convert the 16 lanes from the CPU into 32.

As for your suggestion, I think using 4x4 bifurcation you could get most of the performance without the cost of a switch.