Intel Launches Xe-LP Server GPU: First Product Is H3C’s Quad GPU XG310 For Cloud Gaming

by Ryan Smith on November 11, 2020 9:01 AM EST

Following the formal launch of Intel’s first discrete GPU in over a generation, the DG1, this morning Intel is launching the server counterpart to that chip, the very plainly named “Intel Server GPU”. Previously referred to as SG1, the Intel Server GPU is based on the same Xe-LP architecture design as the DG1, but aimed at the server market. And like the consumer DG1, Intel is planning on taking an interesting, somewhat conservative tack with their new silicon, chasing after specific markets that are well suited for Xe-LP’s significant investment into video encode hardware.

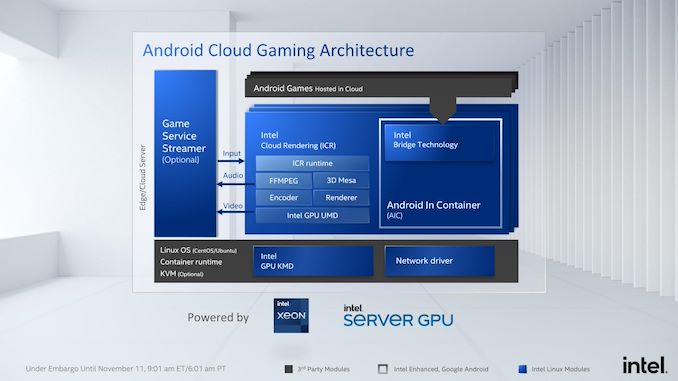

One such market that Intel has decided to chase with their new silicon is the Android game streaming market. The company sees both gaming and video as growth markets, and game streaming is the perfect intersection of the two. So, with an expectation that Android cloud gaming is going to grow significantly in the coming years – especially in China – the company is positioning the hardware and developing a suitable software stack to use the Server GPU for hosting Android games in the cloud.

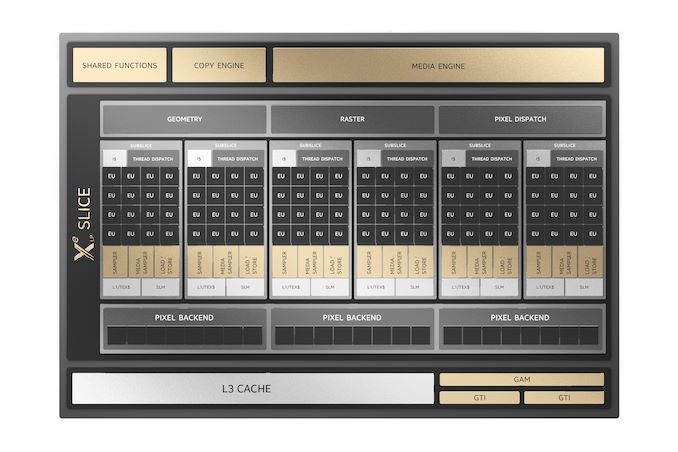

As for the Server GPU itself, it is virtually identical to the DG1 that was unveiled barely two weeks ago. That is to say that it’s essentially a discrete version of Tiger Lake’s integrated GPU, offering 96 EUs and 24 ROPs, and bound to a 128-bit LPDDR4X memory bus. The one notable difference here is that while DG1 laptop implementations are all using 4GB of VRAM (thus far), Intel expects Server GPU installations to get 8GB per GPU.

In any case, the mobile versions of this silicon get what Intel estimates to be around NVIDIA MX350-levels of performance, which is admittedly nothing to write home about. Server GPU should do better than this thanks to its higher clockspeeds and TDPs, but not extensively so. As a result, the best use cases for Intel’s first discrete GPU are not big, shader-bottlenecked games or other rendering tasks, but rather tasks that take advantage of the dGPU’s compute capabilities and/or extensive media encode hardware.

This brings us back to Android cloud gaming, which by its very nature relies heavily on video encoding. The name of the game, as Intel sees it, is all about enabling high-density server installations for game streaming at a lower total cost of ownership (read: cheap), with Intel taking a particular pot-shot at rival NVIDIA over their software licensing costs. Ultimately, with their Server GPU they believe they can meet those specific demands.

And while the Server GPU isn’t going to be setting any performance records, it should still be well ahead of most mobile SoCs – and particularly the kind of lower-performance SoCs that go into the bulk of Android phones. So even at its modest performance levels, Intel is estimating that a single Server GPU can power between 10 and 20 game instances, and that number scales up significantly when you start talking about multiple GPUs.

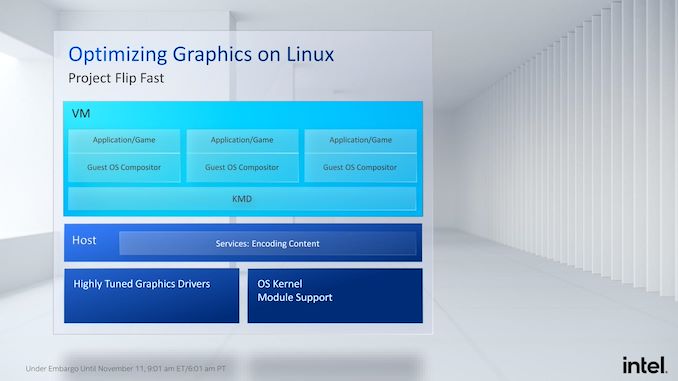

To that end, Intel has even worked on developing the necessary Linux drivers and modules to support Android game streaming workloads, in order to bootstrap the market. For Intel, that means not only developing the base kernel drivers, but also the necessary tools for gaming-focused virtual machines, and even some internal projects to improve game streaming performance. Of particular note here is what Intel is calling Project Flip Fast, which is Intel’s optimized client game streaming stack. Among other things, the company has enabled zero copy transfers between the host and the guest in order to avoid the performance hit from copying data between the two levels.
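To make the zero copy idea concrete, here is a generic Python sketch of the difference between copying a frame buffer and sharing it. This is purely an illustration of the concept, not Intel's code: the function names and the 16-byte "frame" are hypothetical, and a real host/guest path would share GPU buffers rather than Python objects.

```python
# Generic illustration of copy vs. zero copy access to a frame buffer.
# All names here are hypothetical; this is not Intel's implementation.

def copy_frame(host_buffer: bytearray) -> bytes:
    """Hand over a frame by duplicating it (costs time and memory bandwidth)."""
    return bytes(host_buffer)

def share_frame(host_buffer: bytearray) -> memoryview:
    """Hand over a frame as a view onto the same memory (no duplication)."""
    return memoryview(host_buffer)

# A tiny stand-in for a rendered frame living in host memory.
frame = bytearray(16)

copied = copy_frame(frame)
shared = share_frame(frame)

# The host writes a new pixel value after handing the frame over.
frame[0] = 0xFF

# The copy is a stale snapshot, while the shared view sees the update
# because it refers to the very same bytes.
print(copied[0], shared[0])
```

The shared view avoids duplicating every frame, which is the cost the article says Intel is trying to eliminate between the host and the guest.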

But Intel can only take their Server GPU so far on their own. Not unlike their CPU market, Intel isn’t interested in selling whole systems – or in this case video cards. So the company is working with third parties to bring the Server GPU to market in commercial products.

In conjunction with today’s launch of the Server GPU, Intel is also announcing their first customer and the first product using the GPU: H3C’s new XG310 game streaming card. The pathfinder product for the Server GPU, the XG310 is a quad GPU card aimed specifically at the Android game streaming market. The card offers 8GB of LPDDR4X per GPU, for a total of 32GB of on-board VRAM. Meanwhile connectivity is provided via a PCIe 3.0 x16 connection, which from the looks of the card is being fed into a PLX PCIe switch before being distributed out to the individual GPUs.

The XG310 is intended to be sold to server OEMs and game service providers, and Intel has already enrolled Tencent to show off the card. According to the company, a single XG310 card is able to run 60 streams of Arena of Valor at 720p30, and doubling the number of cards doubles the number of streams, which feeds back into Intel’s overall TCO argument.
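The density figures above reduce to simple arithmetic, sketched below in Python. The constants come from the article (four GPUs per card, 8GB per GPU, 60 Arena of Valor streams per card), while the function names are hypothetical and the linear scaling across cards is Intel's claim rather than something measured here.

```python
# Back-of-the-envelope math for the XG310 figures quoted in the article.
# Function names are hypothetical; linear scaling is Intel's stated claim.

GPUS_PER_CARD = 4        # XG310 is a quad GPU card
VRAM_PER_GPU_GB = 8      # LPDDR4X per GPU
STREAMS_PER_CARD = 60    # Arena of Valor at 720p30, per Intel

def card_vram_gb() -> int:
    """Total on-board VRAM for one card."""
    return GPUS_PER_CARD * VRAM_PER_GPU_GB

def streams_per_gpu() -> int:
    """Streams each GPU must host to reach the per-card figure."""
    return STREAMS_PER_CARD // GPUS_PER_CARD

def total_streams(cards: int) -> int:
    """Streams for a server with the given number of cards, assuming linear scaling."""
    return cards * STREAMS_PER_CARD

print(card_vram_gb(), streams_per_gpu(), total_streams(2))
```

The per-GPU result lands inside the 10 to 20 instance range Intel estimates for a single Server GPU.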

Ultimately, as things stand, H3C’s Intel-based card is being validated with servers now. And, according to Intel, it’s already shipping worldwide with orders having already come in. The first customer for it is likely to be Tencent, who will be introducing their own cloud gaming service. Otherwise, though Intel isn’t talking about any other customers at this time, it’s clear that they’re eager to line up more Android game streaming providers in the future. As well, this style of multi-GPU card would also be a good fit for Intel’s more traditional high-density media encode customers, who were previously buying things like Intel’s Visual Compute Accelerator cards.

23 Comments

brunosalezze - Wednesday, November 11, 2020 - link

As AdoredTV leaked months ago, the xe gpus are full of streaming stuff.

yeeeeman - Wednesday, November 11, 2020 - link

this is just proof of concept. xe-hpg is the real deal and the one that will propel intel as a clear contender in the gpu market or another fail.

hallstein - Wednesday, November 11, 2020 - link

Just to clarify, when Intel says a single SG1 can “power between 10 and 20 game instances”, they’re talking simultaneously, and the SG1 is undertaking the video encode only, and not the game graphics? Or are they taking about running extremely simple games?

Because something akin an nvidia mx360 is certainly not going to be capable of running 20 separate instances of Shadow of the Tomb Raider.

Ryan Smith - Wednesday, November 11, 2020 - link

"Or are they taking about running extremely simple games?"

They're talking about running extremely simple games. Arena of Valor, for example, can be run at 15 instances per GPU.

robbro9 - Wednesday, November 11, 2020 - link

Are such simple games really not playable "as-is" on older/basic/low end android smartphones without streaming???? Seems like tons of extra trouble to render a "simple" game at one place, encode, transmit, receive, decode and stream it to a screen, when it could be locally processed?

lmcd - Wednesday, November 11, 2020 - link

Even the 765G is a mediocre performer in mobile games, before even considering performance per watt. The most optimized aspects of most mobile phones are video decode and network transfers, so it might actually be more power efficient from the end user's perspective to do this.

Spunjji - Thursday, November 12, 2020 - link

Indeed, and the average device chipset isn't even a patch on the 765G.

That said... I'm not entirely sold that Xe is *15x* more powerful than the average mobile phone GPU. 10x seems more reasonable. I feel like Intel might be fudging the numbers a little to make this look more revolutionary than it really is.

robbro9 - Thursday, November 12, 2020 - link

Makes sense. Catch #2 I see is that who is paying for the streaming service? How is it being funded? By someone with a low end phone? What's the business model for this?

Sharma_Ji - Thursday, November 12, 2020 - link

Le pubgM: can't keep proper 60fps on 2080Ti.

DanNeely - Wednesday, November 11, 2020 - link

Is it just an artifact of camera angle, or are the different aspect ratios of the GPUs and ram chips in the left/right vs top/bottom oriented GPUs the result of a really lazy photoshop of the card?