Ashes of the Singularity Revisited: A Beta Look at DirectX 12 & Asynchronous Shading

by Daniel Williams & Ryan Smith on February 24, 2016 1:00 PM EST

DirectX 12 Single-GPU Performance

We’ll start things off with a look at single-GPU performance. For this, we’ve grabbed a collection of RTG and NVIDIA GPUs covering the entire DX12 generation, from GCN 1.0 and Kepler to GCN 1.2 and Maxwell. This will give us a good idea of both how the game performs across a wide span of GPU performance levels and how (if at all) the various GPU generational changes play a role.

Meanwhile, unless otherwise noted, we’re using Ashes’ High quality setting, which turns up a number of graphical features and also utilizes 2x MSAA. It’s also worth mentioning that while Ashes does allow async shading to be turned off and on, the option is on by default and stays on unless it is disabled in the game’s INI file.

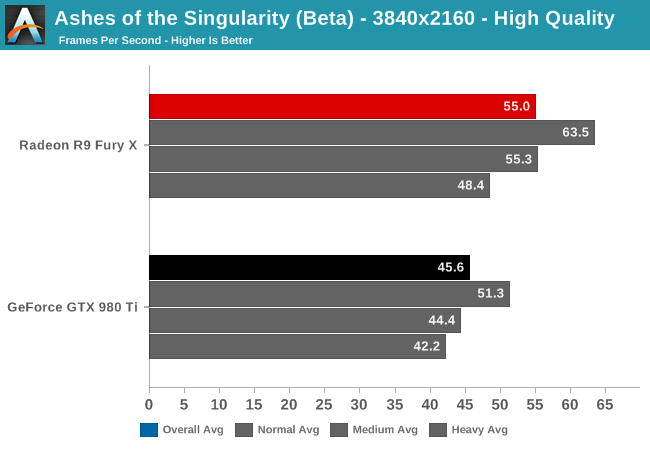

Starting at 4K, we have the GeForce GTX 980 Ti and Radeon R9 Fury X. On the latest beta the Fury X has a strong lead over the normally faster GTX 980 Ti, beating it by 20% and coming close to hitting 60fps.

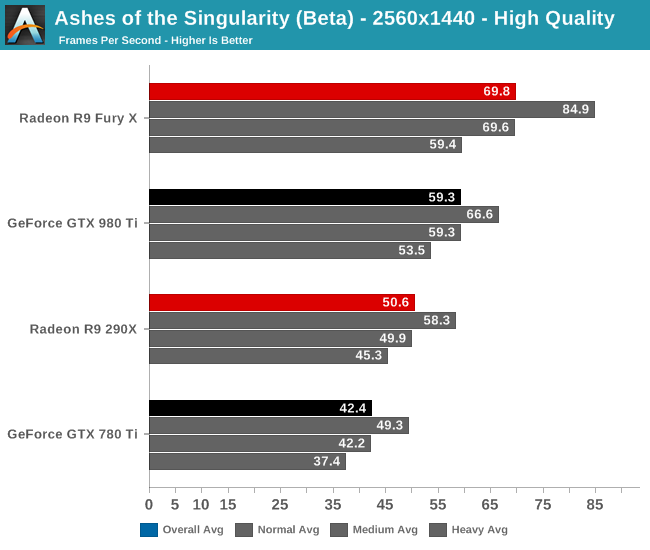

When we drop down to 1440p and introduce the last generation’s flagship video cards, the GeForce GTX 780 Ti and Radeon R9 290X, the story is much the same. The Fury X continues to hold a 10fps lead over the GTX 980 Ti, giving it an 18% lead. Similarly, the R9 290X has an 8fps lead over the 780 Ti, translating into a 19% performance lead. This is a significant turnabout from where we normally see these cards, as the 780 Ti traditionally holds a lead over the 290X.
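
For readers who want to see how an absolute fps lead relates to a percentage lead, the arithmetic can be sketched as below. This is only an illustration: the function names are our own, and the baseline framerates are back-calculated from the rounded figures quoted above rather than taken from the charts, so treat them as approximations.

```python
def percent_lead(leader_fps: float, trailer_fps: float) -> float:
    """Leader's advantage expressed as a percentage of the trailing card's framerate."""
    return (leader_fps - trailer_fps) / trailer_fps * 100.0


def implied_baseline(fps_lead: float, pct_lead: float) -> float:
    """Back out the trailing card's approximate framerate from an fps lead and a percent lead."""
    return fps_lead / (pct_lead / 100.0)


# 1440p figures quoted in the text. The baselines are derived from rounded
# numbers (an assumption of this sketch), not measured values.
gtx_980_ti = implied_baseline(10, 18)  # roughly 55.6 fps
gtx_780_ti = implied_baseline(8, 19)   # roughly 42.1 fps

# Sanity check: adding the fps lead back should reproduce the quoted percentages.
print(round(gtx_980_ti, 1), round(percent_lead(gtx_980_ti + 10, gtx_980_ti), 1))
print(round(gtx_780_ti, 1), round(percent_lead(gtx_780_ti + 8, gtx_780_ti), 1))
```

Because the percentage is taken relative to the slower card, a smaller absolute lead (8fps) can still be a slightly larger relative lead (19%) when the slower card's framerate is lower.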

Meanwhile looking at the average framerates with different batch count intensities, there admittedly isn’t much remarkable here. All cards take roughly the same performance hit with increasingly larger batch counts.

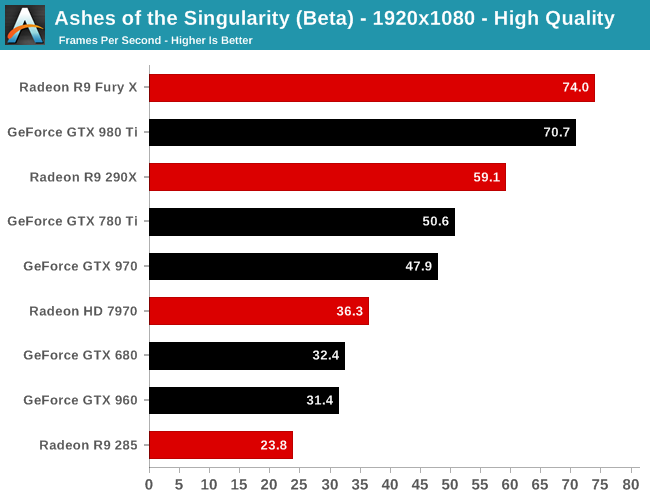

Finally, at 1080p with our full lineup of cards, we can see that RTG’s lead in this latest beta is nearly absolute. The 2012 flagship battle between the 7970 and the GTX 680 puts the 7970 in the lead by 12%, or just shy of 4fps. Elsewhere the GTX 980 Ti does close on the Fury X, but RTG’s current-gen flagship remains in the lead.

The one outlier here is the Radeon R9 285, which is the only 2GB RTG card in our collection. At this point we suspect it’s VRAM limited, but it would require further investigation.

153 Comments

Soulwager - Sunday, March 20, 2016 - link

Poorly how, exactly? It looks to me like DX12 is just removing a bottleneck for AMD that Nvidia already fixed in DX11. It would be more correct to say that AMD has poor DX11 performance compared to Maxwell, and neither are constrained by driver overhead in DX12.

SunLord - Wednesday, February 24, 2016 - link

DX12 by design will slightly favor older AMD designs simply because of the design decisions that AMD made compared to Nvidia with regard to DX11. Those decisions are paying off with DX12, while Nvidia benefited from its own with DX11 games, which is why they own around 80% or so of the gaming GPU market. How much of an impact this will have depends on the game, just like how it is with DX11 games: some do better on AMD, some will be better on Nvidia.

anubis44 - Thursday, February 25, 2016 - link

If results like these continue with other DX12 games, nVidia's going to be the one with only 20% in a matter of months.

althaz - Thursday, February 25, 2016 - link

Even in generations where AMD/ATI have been dominant in terms of performance and value, they've still not really dominated in sales.

Just like even when AMD's CPUs were offering twice the performance per watt and cheaper performance per dollar, they still sold less than Intel.

Doing it for a short time isn't enough, you have to do it for *years* to get a lead like nVidia has.

Firstly you have to overturn brand-loyalty from complete morons (aka everybody with any brand loyalty to any company; these are corporations that only care about the contents of your wallet, make rational choices). That will happen with only a small percentage of people at a time. So you have to maintain a pretty serious lead for a long time to do it.

AMD did manage to do it in the enthusiast space with CPUs, but (arguably due to Intel being dodgy pricks) they didn't quite turn that into mainstream market dominance. Which sucks for them, because they absolutely deserved it.

So even if AMD maintains this DX12 lead for the rest of the year and all of the next, they'll still sell less GPUs than nVidia will in that time. But if they can do it for another year after that, *then* would they be likely to start winning the GPU war.

Personally, I don't care a lot. I hope AMD do better because they are losing and competition is good. However, I will make my next purchasing decision on performance and price, nothing else.

permastoned - Sunday, February 28, 2016 - link

Wasn't trolling - there are other metrics that show the case; for you to imply that 3dmark isn't valid is just silly: http://wccftech.com/amd-r9-290x-fast-titan-dx12-en...

2 points = trend.

Another thing; what's the deal with all these fanboys? There is no benefit to being a fanboy of either AMD or Nvidia, it is just going to cause you problems because it may cause you to buy based on brand, rather than on performance per dollar, which is the factor that actually matters. At different price ranges different brands are better - e.g. at the top end, a 980Ti is better than a fury X, however if you are looking in the price bracket below, and want to buy a 980, you will get better performance and performance per dollar from a standard fury.

Being a fanboy will blind you from accepting the truth when the tides shift and the tables eventually turn. It helps you in no way at all, it disadvantages you in many. It also causes you to get angry on forums for no reason, and call people 'trolls' when they are stating facts.

Continuity28 - Wednesday, February 24, 2016 - link

By the time DX12 becomes commonplace, I'm sure they will have cards that were built for DX12.

It makes a lot of sense to design your cards around what will be most useful today, not years in the future when people are replacing their cards anyways. Does it really matter if AMD's DX12 performance is better when it isn't relevant, when their DX11 performance is worse when it is relevant?

Senti - Wednesday, February 24, 2016 - link

Indeed it makes much sense to build cards exactly for today so people would be forced to buy new hardware next year to have decent performance. From certain green point of view. But many people are actually hoping that their brand new mid-top card would last with decent performance at least some years.

cmdrdredd - Wednesday, February 24, 2016 - link

Hardware performance for new APIs is always weak with first gen products. That isn't changing here. When there are many DX12 titles out and new cards are out there, you'll see that people don't want to try playing with their old cards and will be buying new. That's how it works.

ToTTenTranz - Wednesday, February 24, 2016 - link

"Hardware performance for new APIs is always weak with first gen products."

Except that doesn't seem to be the case with 2012's Radeon line.