Microchip’s New PCIe 4.0 PCIe Switches: 100 lanes, 174 GBps

by Dr. Ian Cutress on June 1, 2020 12:00 PM EST

There are multiple reasons to need a PCIe switch: expanding PCIe connectivity to more devices than the CPU can support on its own, extending a PCIe fabric across multiple hosts, providing failover support, or increasing device-to-device communication bandwidth in limited scenarios. With the advent of PCIe 4.0 processors and devices such as graphics cards, SSDs and FPGAs, an upgrade from the range of PCIe 3.0 switches to PCIe 4.0 was needed. Microchip has recently announced its new Switchtec PAX line of PCIe switches, offering up to 100-lane variants supporting 52 devices and 174 GBps of switching capability.

Readers not embedded in the enterprise world may remember that a number of PCIe switches have entered the consumer market in the past. Initially we saw devices like the nF200 appear on high-end motherboards like the EVGA SR2, and then the PLX PEX switches on Z77 motherboards, allowing 16-lane CPUs to offer 32 lanes of connectivity. Some vendors even went a bit overboard, offering dual switches and up to 22 SATA ports with an add-in LSI RAID controller alongside four-way SLI connectivity, all through a 16-lane CPU.

Recently, we haven’t seen much consumer use of these big PCIe switches. This is due to a couple of main factors: PLX was acquired by Avago in 2014 in a deal that valued the company at $300m, and seemingly overnight the cost of these switches increased three-fold according to my sources at the time, making them unpalatable for consumer use. The next generation of PEX 9000 switches was, in contrast to the PEX 8000 parts we saw in the consumer space, feature-laden, with switch-to-switch fabric connectivity and failover support. Avago then purchased Broadcom and renamed itself Broadcom, but the situation is still the same, with the switches focused on the server space, leaving the market ripe for competition. Enter Microchip.

Microchip has been on my radar for a while, and I met with them at Supercomputing 2019. At the time, when asked about PCIe 4.0 switches, I was told ‘soon’. The new Switchtec PAX switches are that line.

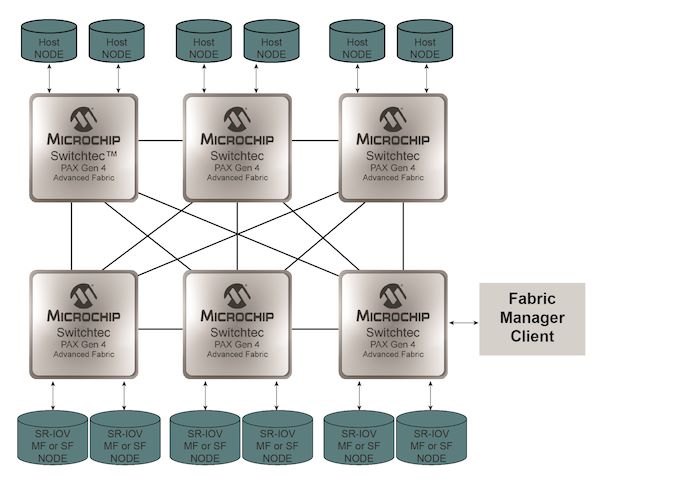

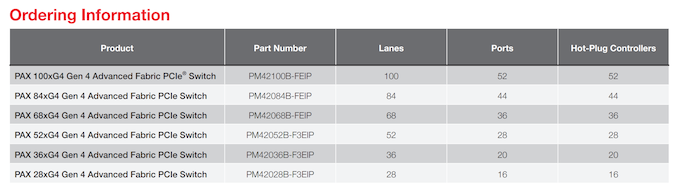

There will be six products, varying from 28-lane to 100-lane support, with bifurcation down to x1. These switches operate in an all-to-all capacity, meaning any lane can act as either an upstream or a downstream port. Thus if a customer wanted a 1-to-99 conversion, despite the potential bottleneck, it would be possible. The new switches support per-port hot-plug, use low-power SerDes connections, support OCuLink, and can be used with passive, managed, or optical cabling.
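To put the 1-to-99 bottleneck in numbers, here is a minimal Python sketch of the raw link arithmetic. It assumes only the standard PCIe 4.0 signaling rate (16 GT/s per lane, 128b/130b encoding); the function names and the 16-up/84-down split are hypothetical examples, not Microchip configurations. These raw figures ignore packet and protocol overhead, so they are not meant to reproduce Microchip's quoted 174 GBps.

```python
# Raw PCIe 4.0 link arithmetic (illustrative sketch, not vendor data).
GEN4_GT_PER_S = 16.0             # PCIe 4.0 transfer rate per lane
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding


def lane_bandwidth_gbps(gt_per_s: float = GEN4_GT_PER_S) -> float:
    """Theoretical one-direction bandwidth of a single lane, in GB/s."""
    return gt_per_s * ENCODING_EFFICIENCY / 8  # 8 bits per byte


def oversubscription(upstream_lanes: int, downstream_lanes: int) -> float:
    """Ratio of downstream to upstream lanes, same generation on both sides."""
    if upstream_lanes < 1 or downstream_lanes < 1:
        raise ValueError("lane counts must be positive")
    return downstream_lanes / upstream_lanes


def summarize(upstream_lanes: int, downstream_lanes: int) -> dict:
    """Raw one-direction bandwidth on each side of the switch, plus the ratio."""
    per_lane = lane_bandwidth_gbps()
    return {
        "upstream_gbps": upstream_lanes * per_lane,
        "downstream_gbps": downstream_lanes * per_lane,
        "ratio": oversubscription(upstream_lanes, downstream_lanes),
    }


if __name__ == "__main__":
    # (1, 99) is the extreme case from the text; (16, 84) is a made-up split.
    for up, down in [(1, 99), (16, 84)]:
        s = summarize(up, down)
        print(f"{up} lane(s) up / {down} lanes down: "
              f"{s['upstream_gbps']:.1f} GB/s up, "
              f"{s['downstream_gbps']:.1f} GB/s down, "
              f"{s['ratio']:.2f}:1 oversubscribed")
```

In the 1-to-99 case, every downstream device shares roughly 2 GB/s of upstream bandwidth per direction, which is why such a layout only makes sense when traffic is mostly device-to-device or rarely concurrent.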

Customers for these switches will have access to real-time diagnostics for signaling, as well as fabric management software for the end-point systems. The all-to-all connectivity supports partial chip failure and bypass, along with partial reset features. This makes building a fabric across multiple hosts and devices fairly straightforward, with a variety of topologies supported.

When asked, pricing was not given, which means it will depend on the customer and volume. We can imagine a vendor like Dell or Supermicro, if they haven’t got fixed contracts for Broadcom switches, looking into these solutions for distributed implementations or storage devices. Some of the second/third tier server vendors I spoke to at Computex were only just deploying PEX 9000-series switches, so perhaps deployment of Gen 4 switches might be more of a 2021/2022 target.

Those interested in Microchip are advised to contact their local representative.

Users looking for a PCIe switch-enabled consumer motherboard should look at Supermicro’s two new Z490 motherboards. Both use PEX 8747 chips to expand the PCIe offering on Intel’s Comet Lake from 16 lanes to 32 lanes.

Source: Microchip

Related Reading

- Microsemi Announces PCIe 4.0 Switches And NVMe SSD Controller

- ASMedia Preps ASM2824 PCIe 3.0 Switch

- Avago Announces PLX PEX9700 Series PCIe Switches: Focusing on Data Center and Racks

- Supermicro C9Z490-PGW and X9Z490-PG Motherboard Examined

- The Supermicro C9Z390-PGW Motherboard Review: The Z390 Board With PLX and 10GbE

- The ASRock X99 Extreme11 Review: Eighteen SATA Ports with Haswell-E

- ASUS P9X79-E WS Review: Xeon meets PLX for 7x

- MSI Z87 XPower Review: Our First Z87 with PLX8747

- Four Multi-GPU Z77 Boards from $280-$350 - PLX PEX 8747 featuring Gigabyte, ASRock, ECS and EVGA

36 Comments


brucethemoose - Monday, June 1, 2020

I'm not so sure about that right now. Either way, PS5/XBX ports will absolutely change that in the near future.

dotjaz - Tuesday, June 2, 2020

You've never seen new cases have you? I don't use screws for HDD/SSD, haven't used one for years. Also there's no discernible difference between SATA and NVMe for daily use. Unless all you do is copy huge files.

willis936 - Monday, June 1, 2020

It’s a thing of the past... for now. It’s a thing that is possible and likely in the future. AMD’s multi-die approach has large implications for GPUs, which have the largest monolithic consumer dies in history. Larger dies have much lower yield and cost much more. If you split your GPU across dies then you might also look at splitting it across cards too. On paper there is no reason SIMD can’t perfectly scale across cards. Hell, it does in HPC.

sftech - Monday, June 1, 2020

Now I can design a 16x pcie4 to #12 m.2 slot board, yee-haw

drzzz - Tuesday, June 2, 2020

Mistake in this line: "Those interested in Microchip are advised to content their local representative."

I think it was supposed to be:

"Those interested in Microchip are advised to contact their local representative."

abufrejoval - Tuesday, June 2, 2020

Having grown up with Lego, these things hit home in a territory where fact checking remains de-activated: I immediately imagine a switched backplane, that could be filled with the type of AMD/Subor FireFlight SoCs in 1-8 increments to create a stackable performance console. Thinking about the BIOS initialization code and teaching the OS and game engine schedulers how to operate the topology turns it into more of a nightmare.

And then I can't help to think that I'd really prefer these being Infinity Fabric switch chips, not just PCIe 4: Not that I really know, but I'd imagine that remote memory access over PCIe could cost quite a bit of latency vs. IF configured for memory (when dealing with memory) and PCIe (when dealing with I/O).

But with 12 or even 16 cores in an AM4 socket at current prices, stacking has pretty much happened for any CPU workload I might personally require, while GPUs don't take kindly even to the memory latency of HBM or GDDR6. PCIe? Might as well be using punch cards!

With the adult slowly creaking back in, I can't help but think that these are rather late, even in the worlds of all-flash storage arrays and 400Gbit/s Ethernet.

Somehow, I still think there used to be times when a PLX or IDT switch on a standard ATX mainboard was just €50 extra for "Pro"... Could be some misfiring neurons, but I sure never appreciated how Avago killed a lot of consumer accessible innovation with these crazy price hikes.